Introduction

Auditory localization has been researched for hundreds of years, in both animals and humans. One of the most widely studied animals is the barn owl, a species known for its exceptional localization ability. Part of the explanation is anatomical: the barn owl’s left ear is higher than its eye and points downward, while its right ear is lower than eye level and points upward. While humans do not have this unique anatomical advantage, nor would most want to have it, many other anatomical and physiologic factors contribute to our localization ability.

There are several reasons why auditory localization is important. Localization helps put us “at ease” in different listening situations and, in some instances, is critical for safety. Numerous occupations and recreational activities require good localization for maximum performance. Localization also can assist communication, especially when background noise is present: quickly finding the target speaker in a group of talkers allows the listener to make use of visual cues and speechreading. Finally, localization contributes to the formation of auditory streams, the perceptual grouping of the parts of the neural spectrogram that go together. The goal of auditory scene analysis is the recovery of separate descriptions of each separate thing in the environment (Bregman, 1990).

Some aspects of auditory localization require bilateral processing, and in general, localization is enhanced by bilateral processing. The central auditory system analyzes the acoustic input for subtle differences in intensity, spectrum, and timing. There are three primary dimensions of localization: the direction of a sound source around us (horizontal angle, or azimuth); the elevation of a sound source (vertical angle); and the distance of a sound source.

Basic Components of Localization

For determining the horizontal direction of a sound (e.g., from our left or right), the auditory system analyzes interaural time differences (ITDs; did the sound reach the right or left ear first?) and interaural level differences (ILDs; was the sound louder in the right or left ear?). ILDs arise mainly from the head shadow effect, and their use is affected by hearing thresholds. Time differences are used primarily for localizing low frequencies, e.g., those below ~800 Hz. Head shadow effects increase with frequency, and therefore level differences are the primary horizontal localization cue for frequencies above 1500 Hz.
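
To make the ~800 Hz figure more concrete, the sketch below uses Woodworth's classic spherical-head approximation, ITD ≈ (r/c)(θ + sin θ), to estimate the ITD of a distant source at azimuth θ. The head radius (8.75 cm) and speed of sound (343 m/s) are assumed typical values, not data from this study. The sketch also computes the frequency at which half a period equals the largest possible ITD; above roughly that frequency, interaural phase comparisons become ambiguous, which is one simplified way to see why ITDs are most useful at low frequencies.

```python
import math

# Assumed typical values (not from this study).
HEAD_RADIUS_M = 0.0875      # average adult head radius
SPEED_OF_SOUND_M_S = 343.0  # speed of sound in air at about 20 degrees C


def woodworth_itd_s(azimuth_deg: float,
                    radius_m: float = HEAD_RADIUS_M,
                    c_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Approximate ITD (seconds) for a distant source, spherical-head model.

    azimuth_deg: 0 = straight ahead, 90 = directly opposite one ear.
    """
    if not 0.0 <= azimuth_deg <= 90.0:
        raise ValueError("azimuth must be between 0 and 90 degrees")
    theta = math.radians(azimuth_deg)
    return (radius_m / c_m_s) * (theta + math.sin(theta))


def phase_ambiguity_limit_hz(max_itd_s: float) -> float:
    """Frequency at which half a period equals the maximum ITD.

    Above this frequency the same interaural phase difference can
    correspond to more than one ITD, so phase cues become ambiguous.
    """
    return 1.0 / (2.0 * max_itd_s)


if __name__ == "__main__":
    for az in (0, 30, 60, 90):
        print(f"{az:>2} deg: ITD = {woodworth_itd_s(az) * 1e6:6.1f} us")
    max_itd = woodworth_itd_s(90)
    print(f"ambiguity limit = {phase_ambiguity_limit_hz(max_itd):.0f} Hz")
```

With these assumed values the maximum ITD is about 656 µs and the ambiguity limit is about 762 Hz, in line with the ~800 Hz figure given above.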

The elevation of a sound source is determined from spectral shape cues. The sound spectrum is modified by the pinna, and these pinna effects are then compared with a set of directional transfer functions that we all have “internally stored”. The elevation (vertical angle) that corresponds to the best-matching stored transfer function is selected as the origin of the sound.
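
The comparison against “internally stored” transfer functions can be pictured as template matching. The sketch below is a deliberately simplified version: it assumes the source spectrum is flat (so the observed spectrum equals the pinna filtering) and uses the root-mean-square dB difference as the match criterion. The template values and band layout are invented for illustration; published models of vertical localization use more elaborate comparisons.

```python
import math

# Hypothetical stored pinna "templates": gain in dB in five high-frequency
# bands (e.g., 4, 6, 8, 10, 12 kHz) for three elevations in degrees.
# Values are invented for illustration only.
TEMPLATES_DB = {
    -30: [2.0, 4.0, -10.0, 1.0, -3.0],
    0: [3.0, 1.0, -4.0, -12.0, 0.0],
    30: [4.0, 0.0, 2.0, -2.0, -14.0],
}


def rms_difference_db(a, b):
    """Root-mean-square difference (dB) between two equal-length spectra."""
    if len(a) != len(b):
        raise ValueError("spectra must have the same number of bands")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


def estimate_elevation(observed_db, templates=TEMPLATES_DB):
    """Pick the elevation whose stored template best matches the observation."""
    return min(templates,
               key=lambda elev: rms_difference_db(observed_db, templates[elev]))


if __name__ == "__main__":
    observed = [3.5, 0.5, 1.0, -3.0, -12.0]  # hypothetical ear-level spectrum
    print(estimate_elevation(observed))  # closest to the 30-degree template
```

If the top bands were made inaudible by a high-frequency hearing loss, fewer bands would be left to tell the templates apart, which is one way to picture how such a loss degrades vertical localization.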

Several interrelated factors help us determine the distance of a sound source. The most obvious is that distant sounds are softer; this is especially helpful for familiar sounds. The sound spectrum also is used. A distant sound will be perceived as less brilliant and more muffled because, over distance, high frequencies are attenuated more rapidly than low frequencies. For sounds with a known spectrum (e.g., speech), distance can therefore be roughly estimated from the perceived spectral balance. Reflections also assist in determining distance: as a source moves farther away, the ratio of direct to reflected sound at the ear decreases, which provides an indication of distance.
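
The “distant sounds are softer” cue can be quantified for the idealized case of a point source in a free field, where level falls by 20·log10 of the distance ratio (about 6 dB per doubling of distance). The sketch below inverts that relation to estimate the distance of a source whose level is known. It assumes no reflections and no air absorption, and the 60 dB SPL talker level is a hypothetical value, so real rooms will deviate from these numbers.

```python
import math


def level_at_distance_db(ref_level_db: float, ref_distance_m: float,
                         distance_m: float) -> float:
    """Free-field point-source level at distance_m (inverse-square law)."""
    return ref_level_db - 20.0 * math.log10(distance_m / ref_distance_m)


def estimate_distance_m(heard_level_db: float, ref_level_db: float,
                        ref_distance_m: float = 1.0) -> float:
    """Invert the inverse-square law for a source of known level."""
    return ref_distance_m * 10.0 ** ((ref_level_db - heard_level_db) / 20.0)


if __name__ == "__main__":
    # Hypothetical talker: 60 dB SPL measured at 1 m.
    print(round(level_at_distance_db(60.0, 1.0, 2.0), 2))  # about 6 dB softer at 2 m
    print(round(estimate_distance_m(54.0, 60.0), 2))       # roughly 2 m away
```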

The Effects of Hearing Loss on Localization

We have briefly reviewed how localization is achieved in individuals with normal hearing, but how does hearing loss affect the many localization cues? The most obvious effect is reduced audibility: we can’t localize what we don’t hear. Hearing loss tends to be more severe in the high frequencies, which affects the perception of the pinna transfer function (vertical localization) and of reflections (judgment of distance). When the hearing loss is asymmetrical, interaural level differences will also be altered. In addition to the audibility issue, distortions introduced by significant hair cell damage can alter the spectral analysis of the sound.

We also need to point out that the hearing-impaired listeners we most frequently work with are elderly. We know that with aging there is a central decline in auditory temporal processing. This can reduce the processing of auditory spatial cues, which in turn reduces localization ability. Studies examining the effects of aging on localization ability have shown a detrimental effect of age on spectral ripple detection (an indicator of sensitivity to the spectral shape cues caused by the pinna) and on sensitivity to interaural phase differences (Neher, Laugesen, Jensen, & Kragelund, 2011). Interestingly, however, recently collected self-reported localization data (SSQ questionnaire) did not reveal any age-related differences (Olsen, Hernvig, & Nielsen, 2012).

The Effects of Hearing Aid Use on Localization

Given that audibility is an important component of localization, it might seem that simply restoring audibility with hearing aids would restore localization to normal or near normal. This is sometimes true, but it is also possible that no improvement will be observed or, in the worst case, that aided localization will be poorer than unaided performance. Kochkin (2010) reports MarkeTrak VIII data showing that only 71% of hearing aid users are satisfied with hearing aid performance for “directionality” and only 15% are very satisfied.

There are several reasons why hearing aids do not always improve localization. As we’ve mentioned, some patients have cochlear distortions or cognitive deficits that will still be present. Also, most people fitted with hearing aids have had a hearing loss for several years, so their “internally stored” transfer functions may not be as sharp or accurate as they once were. There also are factors associated with the hearing aids themselves. The programmed gain may not be well balanced between the ears, or a person with a bilaterally symmetrical hearing loss may be fitted with only one hearing aid; this could very likely make localization worse. Features such as compression, noise reduction, and directional microphone technology alter the timing of the signal. Digital processing introduces a timing delay (often referred to as group delay), which could be of particular concern for open fittings, where both the natural and the amplified signals reach the ear.
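
Why group delay matters in open fittings can be sketched with a simple model: the direct sound and a delayed, amplified copy add at the eardrum, and at frequencies where they arrive out of phase the sum dips, producing a comb-filter pattern. The sketch assumes a single fixed delay and a frequency-independent gain ratio between the two paths; the 5 ms delay in the example is a hypothetical value, not a measured property of any hearing aid.

```python
import cmath
import math


def combined_magnitude(freq_hz: float, delay_s: float, gain: float = 1.0) -> float:
    """Magnitude of the direct sound plus a delayed copy scaled by `gain`.

    Models H(f) = 1 + gain * exp(-j * 2 * pi * f * delay).
    """
    return abs(1 + gain * cmath.exp(-2j * math.pi * freq_hz * delay_s))


def notch_frequencies_hz(delay_s: float, max_freq_hz: float) -> list:
    """Frequencies where the two paths are 180 degrees out of phase.

    These are odd multiples of 1 / (2 * delay): f = (2k + 1) / (2 * delay).
    """
    if delay_s <= 0:
        raise ValueError("delay must be positive")
    notches = []
    k = 0
    while True:
        f = (2 * k + 1) / (2 * delay_s)
        if f > max_freq_hz:
            break
        notches.append(f)
        k += 1
    return notches


if __name__ == "__main__":
    delay = 0.005  # hypothetical 5 ms processing delay
    print(notch_frequencies_hz(delay, 1000))
    print(round(combined_magnitude(0.0, delay), 3))    # paths in phase: sum doubles
    print(round(combined_magnitude(100.0, delay), 3))  # first notch: near cancellation
```

With equal levels in the two paths the dips are complete; when the amplified path is much stronger or weaker than the direct path, the dips become shallower.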

One obvious way in which hearing aid use can hurt localization is that the hearing aid’s microphone location, or the hearing aid itself, alters the natural pinna effect. This effect is greatest for Behind-The-Ear (BTE) instruments, where the microphone sits above the ear and most of the normal pinna transfer function is absent. Research has shown that with deeply fitted Completely-In-the-Canal (CIC) instruments, vertical localization is similar to that of the open ear (see Mueller & Ebinger, 1997, for a review). CIC instruments, however, are not as commonly fitted today, so our focus is on compensating for the lost pinna transfer function when BTE instruments are used.

Localization Assessment

It’s also important to consider how localization has typically been assessed in hearing-impaired listeners, both aided and unaided. In laboratory settings (listening environments with unnaturally low noise and few reflecting surfaces), single-source localization is often tested only in the horizontal plane (e.g., Neher et al., 2011; Akeroyd & Guy, 2011) or in both the horizontal and vertical planes (e.g., Noble, Byrne, & Ter-Horst, 1997). In this procedure, subjects are seated in the middle of a loudspeaker array and are asked to identify the loudspeaker they perceive to be the sound source. This test paradigm also can be used to investigate the effects of horizontal source separation on speech understanding in background noise, so that localization abilities and speech recognition can be measured together. The results are typically reported either as mean error in degrees, indicating the deviation between response and target, or as percent correct responses. Another example of this laboratory approach is the research of Keidser and colleagues (2009). In this study, Siemens TruEar (a directional processing scheme implemented above 1 kHz) was compared with a directional scheme implemented above 2 kHz, omnidirectional processing, and full directional processing. After 3 weeks of adaptation, localization in the front/back dimension was 4 to 8 degrees better across stimuli with TruEar than with any other microphone scheme.
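
The two summary measures mentioned above, mean error in degrees and percent correct, are straightforward to compute from lists of target and response angles. The sketch below assumes loudspeaker azimuths in degrees (0 = front, increasing around the circle) and counts a response as correct only when it names the target loudspeaker exactly; the trial data are hypothetical.

```python
def angular_error_deg(target_deg: float, response_deg: float) -> float:
    """Smallest absolute angle between target and response (0 to 180 degrees)."""
    diff = (response_deg - target_deg + 180.0) % 360.0 - 180.0
    return abs(diff)


def mean_error_deg(targets, responses) -> float:
    """Mean absolute localization error across trials, in degrees."""
    if len(targets) != len(responses) or not targets:
        raise ValueError("need equal, non-empty lists of targets and responses")
    errors = [angular_error_deg(t, r) for t, r in zip(targets, responses)]
    return sum(errors) / len(errors)


def percent_correct(targets, responses) -> float:
    """Percentage of trials in which the chosen loudspeaker was the target."""
    if len(targets) != len(responses) or not targets:
        raise ValueError("need equal, non-empty lists of targets and responses")
    hits = sum(1 for t, r in zip(targets, responses) if angular_error_deg(t, r) == 0)
    return 100.0 * hits / len(targets)


if __name__ == "__main__":
    # Hypothetical session: five trials, including one front/back reversal (180 -> 0).
    targets = [0, 45, 90, 135, 180]
    responses = [0, 45, 60, 135, 0]
    print(mean_error_deg(targets, responses))   # 42.0
    print(percent_correct(targets, responses))  # 60.0
```

The modulo step wraps differences around the circle, so a response at 10 degrees to a target at 350 degrees counts as a 20-degree error rather than 340.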

There are two limitations to the typical localization measures conducted in research laboratories. First, the extensive instrumentation required for the necessary precise measures is not feasible for the average audiology practice. Second, it’s difficult to relate these laboratory measures to performance in the real world. For example, if you knew that your patient had a laboratory D-Score of 36.7 and confusions of 12%, is that good or bad? An alternative is to assess localization ability in the real world using self-assessment inventories. These measures allow comparison of aided versus unaided performance, and of aided performance versus that of individuals with normal hearing.

Localization Study

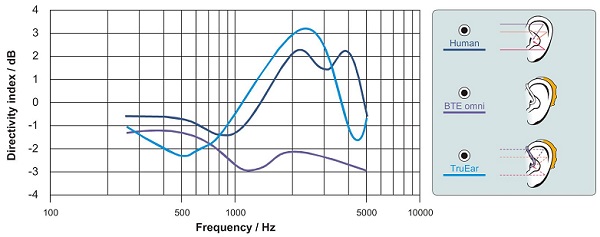

Recently, Siemens has developed a new product, the micon, which addresses many of the issues that affect aided localization. First, the product includes the proven TruEar technology (Keidser et al., 2009; Chalupper, O’Brien, & Hain, 2009). This digital pinna compensation algorithm adds some directionality when the hearing aid is in omnidirectional mode, mimicking the shadow and collection properties of the pinna and improving localization with BTE products. This is illustrated in Figure 1.

Figure 1. Directivity (as calculated by the Directivity Index) of the human ear compared with a standard BTE (closed earmold) with an omnidirectional microphone and a BTE employing TruEar processing, according to Chalupper et al. (2009).

The micon also includes the following key features:

- e2e wireless connectivity (synchronized processing between hearing aids)

- An ultra-high bandwidth up to 12 kHz

- A new compression system that optimizes spectral content

- 48 channels of compression for more precise processing

The purpose of the present study was to evaluate the effects of this new technology regarding aided localization in the real world. The goal was not only to determine if aided localization was better than unaided, but also to compare aided localization to that of individuals with normal hearing.

Methods

This study examined the impact that new hearing aid technology would have on new hearing aid users regarding their ability to localize in real-world environments.

Participants

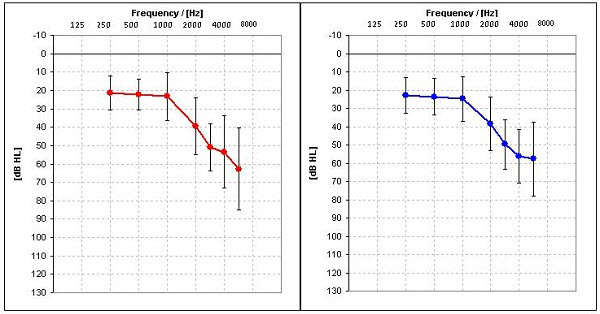

Twenty individuals participated in this study: 8 females and 12 males. All participants were new hearing aid users, aged 31 to 87 years (mean age 66). Participants were selected to meet the criteria of a bilateral, mild to moderately severe sensorineural hearing loss, with hearing levels no better than 10 dB at 500 Hz and no worse than 75 dB at 3000 Hz. Symmetry between ears was within 20 dB at every frequency. Mean audiograms for the right and left ears of the subjects are shown in Figure 2. Frequency-specific LDLs were also measured and later used for MPO selection in the programming software. Data collection followed a uniform protocol once a completed consent form had been obtained. Each participant was compensated with a $50 gift card.

Figure 2. Average hearing loss and the range of losses for right and left ears for 20 subjects.

Hearing Aids

The subjects were fitted bilaterally with Siemens micon Pure mini-BTE receiver-in-the-canal hearing aids. These hearing aids had 48-channel AGCi, low-level expansion, and 20-channel AGCo, with a frequency response extending to 12 kHz. The hearing aids had automatic directional technology with adaptive polar patterns and adaptive feedback cancellation, and they employed three different types of digital noise reduction (DNR) algorithms, which operate simultaneously and independently in the 8 channels. An additional new DNR algorithm, Directional Speech Enhancement, also was activated (see Powers & Beilin, 2013, and Ramirez, Jons, & Powers, 2013, for a review). As mentioned earlier, the Siemens micon also is equipped with TruEar technology. The hearing aids were programmed so that gain was not manually adjustable.

Localization Questionnaire

The self-assessment questionnaire used in this study was the Questionnaire for Disabilities and Handicaps Associated with Localization (DHAL; Noble, Byrne, & Lepage, 1994). This questionnaire has a total of 25 items, consisting of 14 questions regarding difficulties in everyday sound localization (localization disabilities) and 11 questions related to limitations and disadvantages caused by these disabilities (localization handicap). Two of the handicap questions (Items 21 and 25) involve the use of hearing aids, and those items were omitted for this study, as we also were interested in the unaided score. Each item is answered on a 4-point scale: 1 = Almost Never, 2 = Sometimes, 3 = Often, and 4 = Almost Always. The questions are worded so that Almost Always (a rating of 4) indicates excellent localization. The test is scored either by averaging or by summing the points for all questions in each of the two subscales. Maximum performance for the Disability subscale, therefore, would be 56 (14 x 4). Because we eliminated two of the 11 items from the Handicap subscale, its maximum was 36 (9 x 4). When scores are averaged instead, the maximum for both subscales is 4, indicating excellent localization ability and least handicap, respectively. The scale is administered via pencil and paper.
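
The scoring rules described above can be written out directly. The sketch below assumes ratings are supplied as lists of integers from 1 to 4, one list per subscale (14 Disability items and the 9 retained Handicap items); the function name and the example ratings are hypothetical.

```python
VALID_RATINGS = {1, 2, 3, 4}  # 1 = Almost Never ... 4 = Almost Always


def score_subscale(ratings):
    """Return (total, mean, maximum possible total) for one DHAL subscale."""
    if not ratings:
        raise ValueError("no ratings supplied")
    bad = [r for r in ratings if r not in VALID_RATINGS]
    if bad:
        raise ValueError(f"ratings must be 1-4, got {bad}")
    total = sum(ratings)
    return total, total / len(ratings), 4 * len(ratings)


if __name__ == "__main__":
    disability = [3, 2, 3, 4, 2, 3, 3, 2, 4, 2, 3, 2, 3, 3]  # 14 items (hypothetical)
    handicap = [4, 4, 3, 4, 4, 3, 4, 4, 4]                     # 9 retained items (hypothetical)
    for name, ratings in (("Disability", disability), ("Handicap", handicap)):
        total, avg, max_total = score_subscale(ratings)
        print(f"{name}: {total}/{max_total}, mean {avg:.2f}")
```

Reporting both the total and the mean makes it easy to compare subscales of different lengths, since the mean is always on the same 1 to 4 scale.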

Procedures

Prior to the fitting of hearing aids, all participants completed the DHAL questionnaire as it related to unaided localization (all participants were new hearing aid users). The questionnaire was reviewed with each participant to ensure that they understood each question and that all questions were answered.

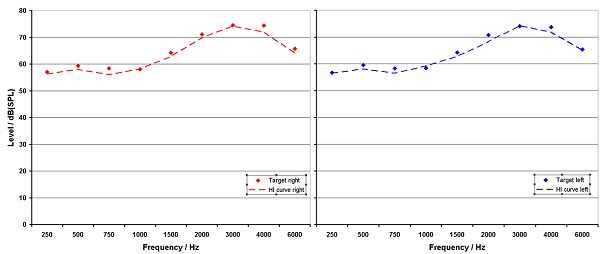

The Siemens micon hearing aids were then fitted bilaterally using Connexx 7 software. Default settings were used for all special features, which remained active during real-world use. The hearing aids were fitted to the NAL-NL2 prescriptive method. Verification was conducted using the Audioscan Verifit probe-microphone system, with real speech (the Verifit male talker “carrot passage”) as the speech mapping stimulus. Fine-tuning was conducted until real-ear output was at or near the prescribed target for all frequencies through 6000 Hz. The resulting match to target for the right and left ears is shown in Figure 3.
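
Checking whether real-ear output is “at or near” target amounts to computing the deviation at each frequency and comparing it with a tolerance. The article does not state a numeric criterion, so the ±5 dB tolerance below is an assumption, as are the example target and output values; only frequencies through 6000 Hz are checked, matching the fine-tuning range described above.

```python
def deviations_db(target, measured, max_freq_hz=6000):
    """Measured minus target (dB) at each shared frequency up to max_freq_hz."""
    return {
        f: measured[f] - target[f]
        for f in sorted(target)
        if f in measured and f <= max_freq_hz
    }


def within_tolerance(deviations, tolerance_db=5.0):
    """True if every deviation lies within +/- tolerance_db (assumed criterion)."""
    return all(abs(d) <= tolerance_db for d in deviations.values())


if __name__ == "__main__":
    # Hypothetical real-ear values (dB SPL) at audiometric frequencies (Hz).
    target = {250: 62, 500: 65, 1000: 66, 2000: 68, 4000: 64, 6000: 58, 8000: 50}
    measured = {250: 64, 500: 66, 1000: 65, 2000: 66, 4000: 61, 6000: 55, 8000: 40}
    devs = deviations_db(target, measured)
    print(devs)                    # 8000 Hz is excluded (above 6000 Hz)
    print(within_tolerance(devs))
```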

Figure 3. Average NAL-NL2 target and real-ear output for right and left ears for 20 subjects.

Following the fitting of the hearing aids, the participants were instructed to use the hearing aids as much as possible in their everyday activities. No special instructions were given regarding localization. After two weeks of hearing aid use, the participants returned and again completed the DHAL questionnaire, this time answering the questions as they related to aided localization. They were not allowed to see their unaided ratings while completing the aided questionnaire.

Results and Discussion

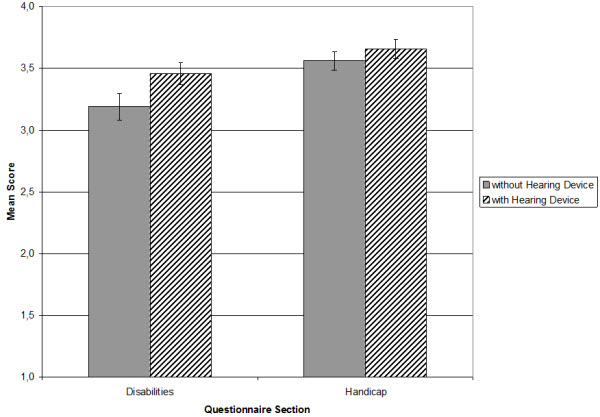

The purpose of this investigation was to determine the impact of the new hearing aid technology on real-world localization, as assessed with the DHAL questionnaire. The aided versus unaided group mean findings are shown in Figure 4. Aided performance on the Disability subscale showed substantial improvement (paired t-test; p = .002). On the Handicap subscale, however, aided performance was only slightly better than unaided, and this difference was not significant (p = .328). This is partially because the unaided ratings were already quite good: on average 3.6 (max = 4).
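
For readers less familiar with the paired t-test reported above, the sketch below computes the t statistic from per-participant aided-minus-unaided differences using only Python's standard library. The ratings are invented for illustration and are not this study's data. Obtaining a p-value additionally requires the t distribution with n - 1 degrees of freedom, for example via SciPy's `scipy.stats.ttest_rel`.

```python
import math
import statistics


def paired_t(before, after):
    """Paired t statistic and degrees of freedom for after-minus-before differences."""
    if len(before) != len(after) or len(before) < 2:
        raise ValueError("need two equal-length lists with at least 2 pairs")
    diffs = [a - b for a, b in zip(after, before)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0:
        raise ValueError("differences have zero variance; t is undefined")
    t = mean_diff / (sd_diff / math.sqrt(len(diffs)))
    return t, len(diffs) - 1


if __name__ == "__main__":
    # Hypothetical mean DHAL ratings for five listeners (not study data).
    unaided = [2.5, 2.8, 3.0, 2.2, 2.9]
    aided = [3.1, 3.2, 3.4, 2.9, 3.3]
    t, df = paired_t(unaided, aided)
    print(f"t({df}) = {t:.2f}")
```

Pairing each participant's aided score with their own unaided score removes between-listener variability, which is why a paired rather than an independent-samples test suits this pre/post design.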

Figure 4. Average ratings for both subscales of the DHAL questionnaire prior to hearing aid use and after 2 weeks of use.

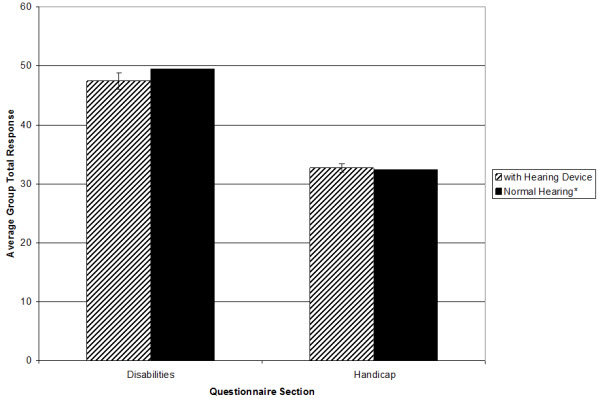

We also examined how the aided findings compared with the scores of normal-hearing individuals on this same scale. For this comparison, we used the normative data of Ruscetta and colleagues (2005). Figure 5 shows the aided findings from our study compared with the normative data from the Ruscetta research. As shown, when aided with the Siemens micon, our subjects had localization ratings comparable to those of normal-hearing individuals.

Figure 5. Comparison of average group total responses for aided performance in the present study and for normal-hearing persons as reported by Ruscetta et al. (2005).

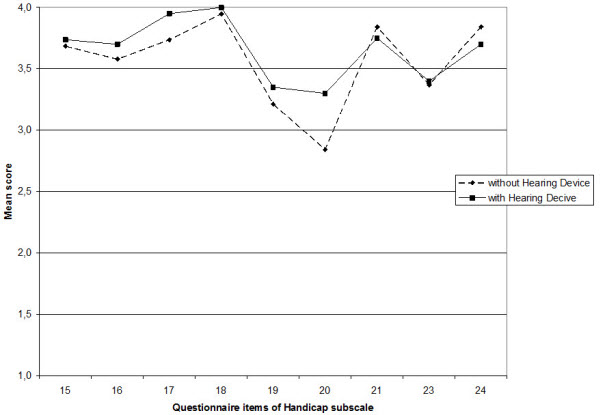

From a clinical standpoint, it is important to examine specific areas where the participants did or did not report substantial improvement in localization with hearing aids. For the Handicap portion of the scale, as indicated by the mean findings shown in Figure 6, average ratings tended to be relatively high even without hearing aids.

Figure 6. Average ratings for the items of handicap subscale.

Nevertheless, at least small differences in average scores were observed for most questions. For two items, aided average values were at or near the maximum. These items were:

- Does difficulty telling where sounds are coming from lead you to avoid busy streets and shops? (item 17)

- Because of difficulties telling where sounds come from, is a visit to the shops something you don’t do by yourself? (item 18)

No improvement was shown for the following three items:

- When sounds are mixed up or confused, does this cause you to feel confused or unsure about exactly where you are? (item 21)

- When sounds are mixed up or confused, does this cause you to lose concentration on what you were doing or thinking? (item 23)

- You are in a place where sounds seem mixed up and confused. You are by yourself. Do you feel a need to leave that place quickly to go to a place where you will feel more comfortable? (item 24)

This result could be expected, because these items seem to refer to a general feeling about the listening situation rather than to the perception of the sounds themselves, which is where unaided and aided listening would differ. Perception of the sounds is, however, addressed by item 20, which showed the greatest group improvement with hearing aids in the Handicap portion of the scale:

- If you are in a busy place, such as a crowded shopping center or city street, do the sounds you hear seem all mixed up or confused? (item 20)

Aided improvement for this area is encouraging, as this is a commonly encountered situation for most hearing aid listeners. Moreover, this improvement was present after only two weeks of hearing aid use.

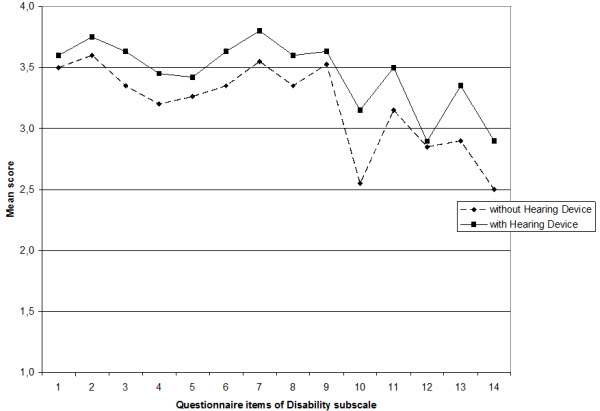

For the disability portion of the scale, group improvement was observed for all but one of the 14 items. The average ratings for the items on the Disability scale are shown in Figure 7.

Figure 7. Average ratings for the items of disability subscale.

The largest improvement was for the following item:

- In the street, can you judge how far away someone is, from the sound of their voice or footsteps? (item 10)

Another item that showed significant improvement was:

- If you have a problem telling where something is coming from, does it help if you move around to try to locate the sound? (item 14)

This is an interesting point, because active head movements are also known to help recalibrate localization abilities (Hofman, Van Riswick, & Van Opstal, 1998). In this way, simply restoring audibility by wearing hearing aids enables the relearning of localization.

The one item that did not show any improvement with amplification was the following:

- You are standing on the footpath of a busy street. Can you tell, just from the sound, roughly how far away a bus or truck is? (item 12)

Interestingly, this item is quite similar to the item mentioned earlier concerning judging how far away someone is on a street, which showed the most group benefit. It is possible that this perceptual difference is caused by the micon’s compression characteristics. The compression characteristic is more linear for soft sounds (a person’s footsteps or voice) than for loud sounds (a bus or truck). It can therefore be assumed that, together with the restoration of audibility, distance judgments based on the “internally stored” loudness-distance function are less affected for soft sounds than for loud sounds.
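
The proposed explanation can be illustrated with a single-channel static compressor that is linear below a kneepoint and compresses above it. A doubling of distance lowers the input level by about 6 dB; the sketch shows how much of that change survives at the output for a soft and a loud source. The gain, kneepoint, and compression ratio are hypothetical values, not the micon's actual settings, and attack/release times and multichannel behavior are ignored.

```python
import math

DOUBLING_DROP_DB = 20.0 * math.log10(2.0)  # about 6.02 dB per doubling of distance


def output_level_db(input_db, gain_db=20.0, knee_db=50.0, ratio=2.0):
    """Static input/output curve: linear below the kneepoint, compressed above it."""
    if input_db <= knee_db:
        return input_db + gain_db
    return knee_db + gain_db + (input_db - knee_db) / ratio


def output_change_for_doubling(input_db, **params):
    """Output level drop (dB) when the source distance doubles."""
    return (output_level_db(input_db, **params)
            - output_level_db(input_db - DOUBLING_DROP_DB, **params))


if __name__ == "__main__":
    print(f"soft source (40 dB): {output_change_for_doubling(40.0):.2f} dB")  # linear region
    print(f"loud source (80 dB): {output_change_for_doubling(80.0):.2f} dB")  # compressed region
```

With a 2:1 ratio, the loud source's distance-related level change is halved at the output, so a listener relying on a learned loudness-distance relationship would receive a weaker cue for the bus than for the footsteps.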

Summary

In this research, we examined real-world localization using a self-assessment inventory with new users who were fitted bilaterally with the new Siemens micon technology. The results were encouraging. We found a significant improvement in localization (compared with unaided performance) on the Disability subscale. Perhaps most importantly, our group’s aided localization performance was not significantly different from that of a group of young normal-hearing individuals.

It is important to point out that our participants used the hearing aids for only two weeks. We know that spatial relations are learned; they are trained and calibrated by accurate spatial feedback from the visual system. An example of this is the research of Hofman et al. (1998). In that study, participants were fitted with earmolds that altered their spectral shape cues. Immediately after the fitting, the participants’ vertical localization performance decreased dramatically. However, localization abilities were steadily reacquired over a period of up to 6 weeks. It is therefore possible that, had our subjects continued to use their new hearing aids for a longer period, their localization performance would have improved further.


Acknowledgements

Dr. Weber would like to acknowledge Diane Erdbruegger, Reaghan Albert, Holly Erbaugh, and Leann Johnson for their contributions to this project.

References

Akeroyd, M. A., & Guy, F. H. (2011). The effect of hearing impairment on localization dominance for single-word stimuli. Journal of the Acoustical Society of America, 130(1), 312-323.

Bregman, A. S. (1990). Auditory scene analysis: The perceptual organization of sound. Cambridge, Massachusetts: The MIT Press.

Chalupper, J., O’Brien, A., & Hain, J. (2009). A new technique to improve aided localization. Hearing Review, 16(10), 20-26.

Hofman, P. M., Van Riswick, J. G. A., & Van Opstal, A. J. (1998). Relearning sound localization with new ears. Nature Neuroscience, 1(5), 417-421.

Keidser, G., O'Brien, A., Hain, J.-U., McLelland, M., & Yeend, I. (2009). The effect of frequency-dependent microphone directionality on horizontal localization performance in hearing-aid users. International Journal of Audiology, 48(11), 789-803.

Kochkin, S. (2010). MarkeTrak VIII: Customer satisfaction with hearing aids is slowly increasing. The Hearing Journal, 63(1), 11-19.

Mueller, H. G., & Ebinger, K. A. (1997). Verification of the performance of CIC instruments. In M. Chasin (Ed.), CIC handbook (pp. 101-126). San Diego: Singular Publishing Group.

Neher, T., Laugesen, S., Jensen, N. S., & Kragelund, L. (2011). Can basic auditory and cognitive measures predict hearing-impaired listeners' localization and spatial speech recognition abilities? Journal of the Acoustical Society of America, 130(3), 1542-1558.

Noble, W., Byrne, D., & Lepage, B. (1994). Effects on sound localization of configuration and type of hearing impairment. Journal of the Acoustical Society of America, 95(2), 992-1005.

Noble, W., Byrne, D., & Ter-Horst, K. (1997). Auditory localization, detection of spatial separateness, and speech hearing in noise by hearing impaired listeners. Journal of the Acoustical Society of America, 102(4), 2343-2352.

Olsen, S. O., Hernvig, L. H., & Nielsen, L. H. (2012). Self reported hearing performance among subjects with unilateral sensorineural hearing loss. Audiological Medicine, 10(2), 83-92.

Powers, T. A., & Beilin, J. (2013, January 17). True advances in hearing aid technology: What are they and where's the proof? Hearing Review. Retrieved from www.hearingreview.com

Ramirez, T., Jons, C., & Powers, T. A. (2013, February 18). Optimizing noise reduction using directional speech enhancement. Hearing Review. Retrieved from www.hearingreview.com

Ruscetta, M. N., Palmer, C. V., Durrant, J. D., Grayhack, J., & Ryan, C. (2005). Validity, internal consistency, and test/retest reliability of a localization disabilities and handicaps questionnaire. Journal of the American Academy of Audiology, 16(8), 585-595.

Cite this content as:

Fischer, R., & Weber, J. (2013, April). Real world assessment of auditory localization using hearing aids. AudiologyOnline, Article #11719. Retrieved from https://www.audiologyonline.com/