From the desk of Gus Mueller

"Knowledge is of two kinds; we know a subject ourselves, or we know where we can find information upon it."

Samuel Johnson, 1775

Okay, admit it. Each year you set aside a few professional articles or journals that you feel you really should read. You know, during a "break time" at work. The stack gets higher. You try taking some of them home, or maybe along with you when you travel, thinking that you can sneak in some reading during a quiet period. That doesn't work too well. After several months, a year or two, the stack becomes unwieldy, and the articles get "put away." Your desk is clean. You start a new stack.

If your interest lies with hearing aid articles, we might be able to help. First, a little history. Dating back to 2003, each year at the American Academy of Audiology (AAA) annual meeting, Catherine Palmer, Bob Turner and I reviewed what we considered to be the "top" hearing aid articles published the previous year. In general, because the audience was primarily clinicians, we tried to pick articles that might have some "Monday Morning" implications. We were told that our summaries relieved some of the guilt for the audiologists who had to send their "stack" to a new home.

Our annual AAA convention review didn't happen this year, but Catherine and I put together a similar presentation for the annual spring audiology meeting organized by the AuD students at the University of Pittsburgh. Our Pitt presentation was recorded for AudiologyOnline and can be viewed here. But . . . there was so much good research to talk about that we decided an expanded written version of our top picks of 2010 also would be helpful. And what better place to publish it than 20Q?

So, as you now know, our guest author this month at 20Q is Catherine Palmer, Ph.D., Associate Professor in the Department of Communication Science and Disorders at the University of Pittsburgh and Director of Audiology and Hearing Aids at the University of Pittsburgh Medical Center. Dr. Palmer has published extensively in several areas of audiology, and is a leading expert in evidence-based practice. She currently conducts research in the areas of adult auditory learning following the fitting of hearing aids, and matching hearing aid technology to individual needs.

Catherine is also actively involved in the training of Ph.D. and Au.D. students at Pitt, and serves as Editor-in-Chief of Seminars in Hearing. She opened the Musicians' Hearing Center at the University of Pittsburgh Medical Center in 2003 and has focused a great deal of energy on community hearing health since that time.

Gus Mueller

Contributing Editor

July 2011

To browse the complete collection of 20Q with Gus Mueller articles, please visit www.audiologyonline.com/20Q

To view Dr. Mueller and Dr. Palmer's accompanying Recorded Course on this topic, please register here

1. Good to see the two of you. I didn't see your annual presentation on the program for the Academy of Audiology meeting this year. I thought maybe you stopped reading journals!

Palmer: Oh no, we're still reading, or at least scanning, almost every article we can find. It sounds like you're aware that, along with our colleague Bob Turner, we did a review of hearing aid research for many years at the Academy meeting. And yes, you're right, that didn't happen this year, but that didn't slow us down. We recently reviewed some of our favorite hearing aid articles from 2010 for an AudiologyOnline recorded session that will soon be available for viewing, and you have 19 questions left for this 20Q feature on the same topic.

2. Great. Do you have the articles organized in any certain way, or should I just start asking random questions?

Mueller: We do have them organized, pretty much the same as always, so let me provide some background. Thanks to the students who work in Catherine's lab at the University of Pittsburgh, we managed to track down nearly 200 articles from over 20 audiology journals. Out of these, we picked some of our favorites, particularly trying to select articles that have some direct clinical applications. I should add that we are only reviewing articles related to traditional hearing aid amplification (e.g., no articles on Baha, middle ear implants, etc.). Then, we organized the selected articles more or less the way you would proceed through a typical hearing aid selection and fitting process:

- Pre-fitting assessment

- Signal processing and features

- Selection and fitting

- Verification

- Pediatric issues

- Validation and real-world outcomes

3. I'm ready to go! What can you tell us about pre-fitting assessment? I already do LDLs, the QuickSIN and the COSI—please don't tell me I have to do more testing?

Palmer: I'll leave that up to you to decide, but you know, more testing can be a good thing. One thing that we're always trying to determine is who will be a successful bilateral hearing aid user or, stated otherwise, we'd like to identify those individuals who are not good candidates. An article I liked on this topic was by Kobler and colleagues (2010), published in the International Journal of Audiology (IJA).

The authors wanted to identify tests that might separate individuals into groups of successful and unsuccessful bilateral hearing aid users. One way to do this is to identify people who have become successful bilateral users and others who have rejected bilateral amplification and opted for unilateral use. They did this, and they also included a control group of listeners with normal hearing. They included basic peripheral tests (thresholds, speech recognition, etc.) and also tests that engage binaural processes, including speech-in-noise and dichotic tests. The Speech, Spatial, and Qualities Questionnaire (SSQ; Gatehouse and Noble, 2004) also was included.

The two hearing aid groups had similar results on the peripheral hearing function tests. The unilateral amplification group, however, showed significantly worse results on the speech-in-noise and dichotic tests than the successful bilateral hearing aid users. The spatial hearing items of the SSQ were correlated with amplification preference. These results indicate that there are tests we could employ to help make an upfront decision about recommending bilateral or unilateral use. The alternative is to fit bilaterally and work on a failure model, meaning that we revert to a unilateral fitting if the bilateral fitting fails. One could imagine that the patient might be more comfortable with a data-driven approach to this decision (i.e., upfront testing). On the other hand, many clinicians have had the experience of fitting a patient bilaterally who, by all indications, should have been a unilateral user, but who derives benefit from the awareness of sound from both sides. Further examination of this topic will help with clinical decision making.

4. Interesting, and I do like data-driven decisions, but I'm just not too sure I have time to add dichotic testing to my pre-test battery. I'll think about it. What else do you have on pre-fitting testing?

Palmer: You might like the next study I selected, as it involves the QuickSIN, one of the tests that you said you're already doing. This study was senior authored by our own Gus Mueller, and published here on AudiologyOnline (Mueller, Johnson and Weber, 2010). One of the reasons I liked this article is that it looked at a question asked by many clinicians—"If I do several pre-fitting speech tests, am I really collecting new information, or pretty much measuring the same thing over and over?" The short answer is, you're probably measuring different things (if you pick the right tests), but I'll explain some of the details of the article.

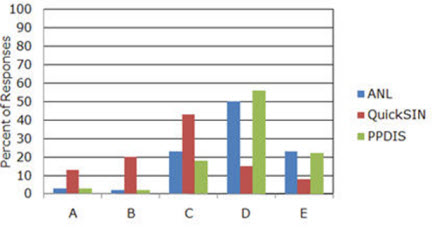

Mueller: Let me interrupt for a minute, Catherine. One of the reasons we conducted this study is that I had just completed a survey of the popularity of several different pre-tests, including the ones used in this study: the Acceptable Noise Level (ANL) test, the Performance-Perceptual Test (PPT) and the QuickSIN (see Mueller, 2010 for review of these three tests). At two different regional workshops for dispensing audiologists and hearing instrument specialists, I gave a 20-minute presentation for each one of the tests, describing its purpose, its administration and scoring, and how it could be used for fitting and counseling. At the end of each presentation, participants answered a question based on the test that had just been discussed. They had five possible choices, and the results are shown in Figure 1.

Figure 1. Distribution of popularity, as rated by dispensing professionals (n=107), of three speech-in-noise tests associated with hearing aid fittings. A=I already do this test routinely. B=I already do this test some of the time. C=Sounds good, and I'll probably start doing this test. D=Sounds good, but I'll probably never do this test. E=Don't think this test is worth the time investment (Mueller et al., 2010).

As you can see in Figure 1, even after my rather enthusiastic presentation, the survey results showed that while many of the respondents believed that both the ANL and the PPT sounded like good tests, over 70% said that they would probably never use them. That encouraged me to question whether these tests do indeed provide unique information, or if their results can be predicted by other tests such as the more popular QuickSIN.

Palmer: Thanks, Gus. It helps to have the author of the article sitting next to me. So here are some details of the study. As you said, Gus, you and your colleagues were interested in which pre-tests beyond the basic battery (pure-tone, immittance, word recognition) might give independent, useful information in the hearing aid selection and fitting process. Although you considered a long list of possibilities, you focused on the QuickSIN, the ANL and the PPT, which includes the measure of Performance-Perceptual Discrepancy (PPDIS). Most individuals are familiar with the QuickSIN, which provides the signal-to-noise ratio required for an individual to understand 50% of sentence material. The ANL provides a signal-to-noise ratio as well, but this is the signal-to-noise ratio at which the noise is still "acceptable" to the listener while listening to speech. Some ANL data indicate that individuals who are "okay" with a small signal-to-noise ratio have a greater likelihood of being full-time hearing aid users. The PPT (PPDIS) is a relatively new test that compares the objective HINT measure to the individual's perception (rating) of how well he/she understands the sentence material in noise. The difference between these scores is the PPDIS.
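For readers who want the arithmetic behind these three scores, here is a minimal Python sketch. The QuickSIN rule (SNR loss = 25.5 minus the number of key words repeated correctly on one six-sentence list) and the ANL definition (most comfortable listening level minus the highest accepted background noise level) follow the commonly published scoring conventions. The PPDIS sign convention used here (perceived SRT minus measured SRT) is an assumption, and the function names are hypothetical.

```python
def quicksin_snr_loss(words_correct: int) -> float:
    """SNR loss (dB) for one QuickSIN list.

    A list has 6 sentences x 5 key words, presented from 25 to 0 dB SNR in
    5-dB steps; SNR-50 = 27.5 - total correct, and SNR loss = SNR-50 - 2 dB.
    """
    if not 0 <= words_correct <= 30:
        raise ValueError("one QuickSIN list has 30 key words")
    return 25.5 - words_correct


def acceptable_noise_level(mcl_db: float, bnl_db: float) -> float:
    """ANL (dB) = most comfortable listening level - accepted background noise level."""
    return mcl_db - bnl_db


def ppdis(srt_perceptual_db: float, srt_performance_db: float) -> float:
    """Performance-Perceptual Discrepancy (dB).

    ASSUMED sign convention: perceived SRT minus measured (HINT-style) SRT.
    """
    return srt_perceptual_db - srt_performance_db


if __name__ == "__main__":
    print(quicksin_snr_loss(22))           # 3.5 dB SNR loss
    print(acceptable_noise_level(60, 52))  # 8 dB
    print(ppdis(-1.0, -3.0))               # 2.0 dB
```

A smaller ANL means the listener accepts noise closer to the level of the speech, which is the "okay with a small signal-to-noise ratio" listener described above.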

Mueller et al. (2010) included 20 satisfied bilateral hearing aid users (47-83 years of age) in their study. All three tests were presented bilaterally in the unaided condition in the sound field. One loudspeaker was used to present the speech and noise for all three tests. Correlation analysis was used to examine potential associations among the scores. The test outcomes were not significantly correlated, indicating that each test provided some unique information about the patient.

So, again we are in the position of having to determine which tests will provide the most valuable information in terms of the hearing aid selection process, with the understanding that most busy practices cannot add two or three new tests to their hearing aid selection process. These data, combined with those from the Kobler et al. (2010) study, might convince a clinician to at least start by including speech-in-noise testing. This could affect bilateral recommendations, recommendations for specific features (e.g., directional microphone technology) and assistive devices, as well as counseling regarding realistic expectations.

Mueller: I agree, Catherine. Our survey data show that, despite its known value, the majority of dispensing professionals do not conduct speech-recognition-in-noise testing. While we're still on the topic of pre-fitting testing, there is an article I found interesting, which is a follow-up study on a topic we've talked about in our annual review a couple of times before: plasticity and loudness discomfort measures. The article I'm referring to was by Hamilton and Munro (2010), published in IJA. It's an expanded version of a preliminary paper by Munro and Trotter (2006) that we reviewed back in our 2007 presentation. The current findings aren't quite as dramatic as the earlier ones, but they are interesting nonetheless.

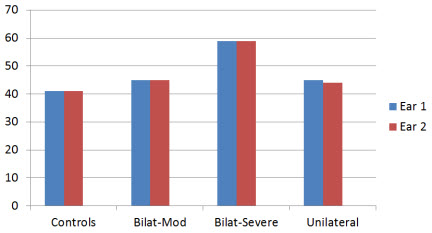

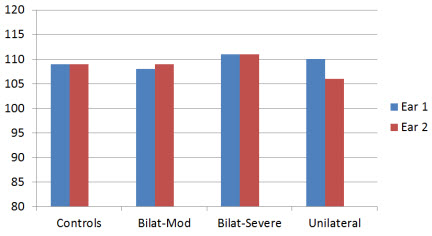

In this expanded study, they had four different groups: Controls (bilateral symmetrical hearing loss but no hearing aid experience); moderate bilateral symmetrical hearing loss fitted bilaterally; severe bilateral symmetrical hearing loss fitted bilaterally; and moderate bilateral symmetrical hearing loss fitted unilaterally. The mean hearing loss for the four groups is shown in Figure 2.

Figure 2. Average hearing thresholds for the four different groups for right (Ear 1) and left ear (Ear 2); aided (Ear 1) vs. unaided ear (Ear 2) for unilateral group (based on data from Hamilton and Munro, 2010).

What they found was that the average LDL for the three bilateral groups was essentially identical for the right versus left ear. For the unilaterally aided group, however, there was a significant asymmetry: the aided ear had a higher LDL (see Figure 3).

Figure 3. Average loudness discomfort levels for the four different groups for right (Ear 1) and left ear (Ear 2); aided (Ear 1) vs. unaided ear (Ear 2) for unilateral group (based on data from Hamilton and Munro, 2010).

A secondary finding was that bilateral hearing aid use did not appear to alter LDLs. For example, the average hearing loss difference between the control and the bilateral severe groups was 17 dB (see Figure 2), but the difference in average LDLs was only 4 dB (see Figure 3), an increase no larger than you would expect from the hearing loss alone. This, of course, is good news for people fitting hearing aids.

5. Why do you say "good news?"

Mueller: Well, for some of us it seems logical that you would want to keep the maximum output of the hearing aids set "just right" (not too high, but also not too low) so that the residual dynamic range is maximized. The best way I know to do this is to measure the patient's LDLs and then ensure that compression is adjusted so that maximum output is just below these values on the day the hearing aids are fitted. If we know that, on average, bilateral hearing aid use doesn't alter LDLs, then this probably isn't something that we have to readjust on follow-up visits over the years following the fitting.
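The "just right" check can be written out in a few lines. This is a minimal sketch. It assumes that thresholds, LDLs and maximum output have already been expressed on the same dB scale at each frequency, which in practice needs a conversion step. The 5-dB "too low" tolerance, the function names and all values are hypothetical.

```python
def residual_dynamic_range(thresholds_db, ldls_db):
    """LDL minus threshold at each frequency (both on the same dB scale)."""
    return [ldl - thr for thr, ldl in zip(thresholds_db, ldls_db)]


def check_mpo(freqs_hz, ldls_db, mpo_db, too_low_db=5):
    """Flag frequencies where maximum output is above the LDL or well below it.

    ASSUMPTION: MPO and LDL are already on the same scale; too_low_db is an
    arbitrary illustrative tolerance, not a published rule.
    """
    report = {}
    for f, ldl, mpo in zip(freqs_hz, ldls_db, mpo_db):
        if mpo > ldl:
            report[f] = "too high"
        elif ldl - mpo > too_low_db:
            report[f] = "too low"
        else:
            report[f] = "ok"
    return report


if __name__ == "__main__":
    freqs = [500, 1000, 2000, 4000]
    thresholds = [35, 40, 50, 60]   # hypothetical
    ldls = [100, 102, 105, 108]     # hypothetical
    mpo = [98, 104, 103, 100]       # hypothetical
    print(residual_dynamic_range(thresholds, ldls))  # [65, 62, 55, 48]
    print(check_mpo(freqs, ldls, mpo))
```

Flagging both directions mirrors the "not too high, but also not too low" goal: output above the LDL risks discomfort, while output far below it wastes residual dynamic range.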

Palmer: Gus, didn't you say there was something about the Hamilton and Munro article that bothered you?

Mueller: I wouldn't say bothered, but I was a little puzzled as to why the average LDLs for all subject groups were as high as they were. The instructions that they used sound very similar to those of the Cox Contour Test, which then allows for a direct comparison with the LDL data of Bentler and Cooley (2001), which is in close agreement with the LDL data of Kamm and colleagues (1978). If we convert the Bentler and Cooley (2001) LDL data from 2-cc coupler (as displayed in their article) to HL, it appears that the subjects in the Hamilton and Munro (2010) study had average LDLs about 10 dB higher—for example, for an average 45 dB HL hearing loss, their subjects' average LDLs are around 108 dB HL versus ~99 dB HL for Bentler and Cooley. That seems high to me.

Palmer: Maybe the Brits just have tougher ears.

Mueller: Hmm. Hadn't thought of that.

6. Hey—you two can talk to each other after I've gone home! If you don't mind, I'd like to move on to signal processing and features, as that's always an interesting area of research.

Mueller: It certainly is, and I think you'll find this article I've selected particularly interesting. As you know, different manufacturers use different release times for their WDRC (slow versus fast), and many hearing aids allow you to adjust the WDRC release time to be either "slow" or "fast." Nobody is too sure who should get what, but some believe that the patient's cognitive ability could be used as a predictor. Simply stated, "faster thinking" people might be able to derive benefit from a fast release, while "slower thinking" people might do better with a slow release (or at least not be able to extract the potential benefits of fast-release processing). While there are only limited data to support this notion, the 2006 Best Practice Guidelines of the American Academy of Audiology state:

"Fast-acting compression may not be suitable for patients with limited cognitive abilities (more prevalent in the elderly population). Fast compression time constants may be slightly beneficial for patients with normal and high levels of cognitive functioning."

The recent publication of Cox and Xu (2010) in JAAA, however, shows that it's just not that straightforward. These authors divided 24 individuals into two groups based on their cognition scores (five different cognition tests were administered). In a cross-over design (i.e., each subject "crossed over" from one condition to another, so that by the end of the experiment all had experienced both conditions), they used hearing aids with two different release times: fast=40 msec; slow=640 msec. The subjects were given speech recognition tests in the lab, and also used the two compression time constant settings in the real world.

Cox and Xu's findings showed that the people with the better cognition scores did about the same for speech recognition with the short and long release times. The individuals with the lower cognition scores, however, performed significantly better with the short release time. In the real world, slightly more individuals favored long over short, but there was no significant relationship between this preference and the subject's cognitive performance. For example, the three people with the highest cognitive scores favored short, but so did two of the five people with the lowest cognitive performance. Finally, the APHAB showed no difference for long versus short release times, but interestingly, there was a trend for better APHAB scores for the release time that the subject preferred (be it long or short).

The bottom line from these data: On the day of the fitting, if you don't even know what WDRC release times you're selecting, you're still probably going to get it right about as often as the people who agonize over the decision.
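To see what "fast" versus "slow" release means in practice, here is a minimal sketch of a level detector driving a simple WDRC gain rule. It is not any manufacturer's implementation. The release settings of 40 and 640 msec come from the Cox and Xu study; treating them as simple exponential time constants is an assumption (the standard measurement definition of release time differs). The attack time, kneepoint, ratio and gain values are hypothetical.

```python
import math


def level_detector(levels_db, frame_rate_hz, attack_ms, release_ms):
    """One-pole level detector with separate attack and release time constants.

    ASSUMPTION: release_ms is treated as an exponential time constant; the
    standard definition of release time is measured differently.
    """
    a_att = math.exp(-1.0 / (attack_ms * 1e-3 * frame_rate_hz))
    a_rel = math.exp(-1.0 / (release_ms * 1e-3 * frame_rate_hz))
    env = levels_db[0]
    out = []
    for x in levels_db:
        coeff = a_att if x > env else a_rel  # rising level: attack; falling: release
        env = coeff * env + (1.0 - coeff) * x
        out.append(env)
    return out


def wdrc_gain_db(env_db, knee_db=50.0, ratio=2.0, gain_below_knee_db=20.0):
    """Hypothetical static WDRC rule: linear gain below the kneepoint, compressed above it."""
    if env_db <= knee_db:
        return gain_below_knee_db
    return gain_below_knee_db - (env_db - knee_db) * (1.0 - 1.0 / ratio)


if __name__ == "__main__":
    # 1-ms frames: 200 ms of a loud 80 dB input, then 200 ms of a soft 50 dB input.
    levels = [80.0] * 200 + [50.0] * 200
    for release in (40, 640):  # the "fast" and "slow" settings in Cox and Xu (2010)
        env = level_detector(levels, 1000, attack_ms=5, release_ms=release)
        # Detector level and gain 100 ms after the drop to 50 dB
        print(release, round(env[299], 1), round(wdrc_gain_db(env[299]), 1))
```

With these made-up settings, 100 ms after a loud sound stops, the fast detector has nearly settled at the new level and gain is back close to its 20 dB maximum, while the slow detector is still well above it, so soft sounds that follow receive less gain.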

Palmer: Great. I'm glad to know that I'm okay! That was an interesting article, and I have a couple of articles from the University of Iowa regarding directional microphone technology that also have some thought-provoking findings. The first is from Wu (2010), and was published in JAAA.

Wu was interested in whether older and younger adults obtain, and perceive, comparable benefit from the directional microphones on current hearing aids. This is an interesting question: many clinicians debate whether a young child should have directional technology, but I'm not aware of many clinicians thinking about an age cutoff for this recommendation for older adults. He included 24 hearing-impaired adults, ranging in age from 36 to 79 years, who were fitted with hearing aids with switchable microphone modes. These individuals were tested in the laboratory (HINT RTS for directional versus omnidirectional) and then wore the hearing aids in the field for 4 weeks, during which a paired-comparison technique (using both settings in any given listening situation) and a paper-and-pencil journal were used. The subjects were specifically trained to recognize situations where directional technology would be helpful, and also trained in how to position themselves to maximize benefit in these situations.

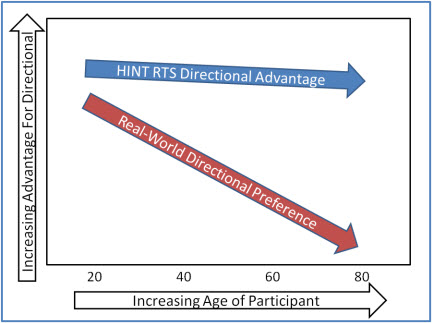

Figure 4 shows a simplified rendition of the data; a best fit line to the individual data points that Wu plotted for the two conditions.

Figure 4. Simplified illustration of the change in measured and perceived directional benefit for lab testing (HINT) versus real-world preference as a function of age (based on data from Wu, 2010).

Interestingly, age did not have a significant effect on directional benefit and preference as measured in the laboratory—HINT scores showed an average 4 dB or so advantage for directional across all ages. The field data, however, showed that older age was significantly associated with a lower perceived benefit for the directional setting.

Mueller: You say older age, but as I recall the significant preferences for directional start disappearing around age 60! Some of us don't think of that as "old."

Palmer: Relax, I didn't say old, just "older." Importantly, these data don't say that older individuals don't receive any benefit from directional microphone technology, but they do indicate that older individuals don't have the same impression of the benefit as younger individuals. This would be important information for the clinician in terms of counseling and in terms of responding to potential individual complaints that the directional microphones don't seem to be doing much (e.g., when the patient says "I don't really notice any difference"). In terms of counseling, the clinician may want to approach directional microphone technology for the older patient as something that may provide some assistance in certain noisy situations, but something that may not be particularly noticeable to the patient.

7. Hmm. Hadn't occurred to me that age would make that much difference. You said you had two articles on directional?

Palmer: I certainly do. I think you'll like this second one, too. It's from Wu and Bentler (2010), and published in Ear and Hearing.

In this article Wu teamed up with Bentler to examine the impact of visual cues on the benefit provided by directional microphone hearing aids in the real world. They hypothesized that the provision of visual cues would reduce the preference for directional signal processing and that laboratory audio-visual (A-V) testing would predict real-world outcomes better than auditory-only testing. They included 24 hearing-impaired adults in the study and compared microphone modes in everyday activities three times a day for 4 weeks using paper and pencil journals. They found no effect for visual cues on preference for directional signal processing (i.e., preference was not altered by the availability of visual cues). A-V laboratory testing, however, predicted field outcomes more accurately than did auditory-only testing. So just when you thought you were doing all the right testing, here is one more test you might want to include in your test battery.

8. Interesting that after 40 years we're still learning new things about directional hearing aids. What about other processing, like digital noise reduction, feedback suppression or frequency lowering?

Mueller: Not much on feedback suppression or DNR, but I did find a frequency lowering article for you from the NAL group in Australia, published in The Hearing Journal (O'Brien, Yeend, Hartley, Keidser, & Nyffeler, 2010). They examined two different hearing aid features, frequency compression and high-frequency directionality, and tested these features in three different ways: localization in an anechoic chamber, speech recognition in the lab, and a real-world outcome measure, the SSQ. It was a cross-over design with 23 older adults (mean age 78 years); each subject experienced each condition (frequency compression "on" or "off") for eight weeks. Frequency compression had no significant effect on localization (although the negative effect came very close to significance; p=.052), and there was no significant interaction with time of use (word recognition was tested at weeks 1, 4, and 8). And the answer you're waiting for . . . there was no difference in speech recognition performance for frequency compression on versus off. The SSQ findings also showed no difference when this feature was activated.
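The article summary doesn't describe the frequency compression algorithm itself. For readers new to the idea, here is a minimal sketch of one commonly described form of nonlinear frequency compression: frequencies below a cutoff pass unchanged, and above it the distance from the cutoff on a log-frequency scale is divided by a compression ratio. This form, and the cutoff and ratio values, are assumptions and not necessarily what the hearing aids in O'Brien et al. (2010) used.

```python
def compress_frequency(f_in_hz, cutoff_hz=2000.0, ratio=2.0):
    """Map an input frequency to its output frequency.

    ASSUMED scheme: no change below the cutoff; above it, log-frequency
    distance from the cutoff is divided by the compression ratio.
    Cutoff and ratio values here are hypothetical.
    """
    if f_in_hz <= cutoff_hz:
        return f_in_hz
    return cutoff_hz * (f_in_hz / cutoff_hz) ** (1.0 / ratio)


if __name__ == "__main__":
    for f in (1000, 2000, 4000, 8000):
        print(f, "->", round(compress_frequency(f)))
```

With a 2000 Hz cutoff and a 2:1 ratio, an 8000 Hz component lands at 4000 Hz, which is how high-frequency cues can be moved into a region where the listener has more usable hearing.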

9. Okay, good to know. There are so many new features on hearing aids it's tough to keep up with the supporting background evidence.

Palmer: Interesting that you should say that, as two of the articles I've selected address that very issue. Meister, Grugel, Walger, von Wedel, and Meis (2010) published a survey of 143 hearing aid dispensers in Trends in Amplification. Using a discrete choice experiment administered over the Internet, they examined dispensers' views of eight different hearing aid features. The features rated with the highest utility and importance were noise cancellation and directional microphones; the lowest importance was assigned to self-learning options. Dispensers' professional experience had a statistically significant influence on how they assessed the features. In other words, these ratings were not necessarily data-driven; they were much more commonly based on "what has worked for my patients."

There was another article published in Trends in Amplification, by Earl Johnson and Todd Ricketts, that looked at hearing aid features in a somewhat different way. This group wanted to develop and examine a list of potential variables that might account for variability in the dispensing rates of four common hearing aid features. They grouped 29 variables into the following categories: characteristics of the audiologist, characteristics of the hearing aids dispensed by the audiologist, characteristics of the audiologist's patient population, and evidence-based practice grades of recommendation for the feature. They then mailed 2000 surveys covering four hearing aid features (digital feedback suppression, digital noise reduction, directional processing, and the telecoil) and the categories identified above; 257 audiologists responded. The data were analyzed using regression analysis.

Johnson and Ricketts reported that there was a clear relationship between the price/level of hearing aid technology and the frequency of dispensing that feature. There also was a relationship between the audiologist's belief that the feature might benefit patients and the frequency of dispensing that feature. So, if you think it works, you're more likely to dispense it, which makes sense. The results suggested that personal differences among audiologists, and the hearing aids audiologists chose to dispense, are related more strongly to dispensing rates of product features than are differences in the characteristics of the patient populations served by the audiologists. Simply put, there appears to be a "right" hearing aid for the audiologist independent of the patient. And this tends to make sense. Many of us have been in the position of seeing a patient with a stack of Internet printouts about a particular hearing aid, and we then explain that we would recommend a different hearing aid that does all the same things but that we are completely familiar with, so that we can do a great job of programming and fine-tuning it, and correctly implementing all the features it has available.

Interestingly, Johnson and Ricketts found that evidence-based practice recommendations were inversely related to dispensing rates of product features. So, the more you know, the more you question the use of some of these features.

10. Matching special features to the right patients does require some careful thought. Was there an article that provided guidance in this area?

Mueller: I have one for you, at least to give you something to think about. This review article, written by Hafter and published in JAAA, is indeed about hearing aid selection. If nothing else, it might have one of the most intriguing titles of the year: Is there a hearing aid for the thinking person? Among other things, Hafter reviewed the findings of Sarampalis and colleagues (2009), who showed that DNR processing might offer advantages in dual-task conditions. As you might recall from our presentation last year, the Sarampalis and colleagues study found that DNR "on" versus "off" improved verbal memory and reaction time when the subjects were simultaneously engaged in a difficult speech-in-noise recognition task.

Interesting findings, but before you get too excited, it's important to know that these were younger subjects with normal hearing. And also, other similar research hasn't shown findings quite as encouraging (Huckvale & Frasi, 2010). But the notion of looking at the effects of different hearing aid features related to cognitive processing, listening effort, listening fatigue, etc., seems to be a promising area of research. Ben Hornsby of Vanderbilt is doing some work in this area, and maybe I can convince him to stop by "20Q" for a visit sometime.

11. Sounds good. I'm one of those people who really believes in verification, so let's move on to that category. I'm anxious to hear what you came up with.

Mueller: You're probably thinking that we tracked down some articles on new speech-in-noise tests, some unique ways to set compression kneepoints, or some new ways to conduct probe-mic measures. There really weren't many articles published on those types of topics last year.

I know we promised that we would only talk about articles that have "Monday morning" applications, but I do have a couple of articles related to verification that I'd like to mention just so you have them in your back pocket when you need them. The first of these was published in IJA by a team of well-known European audiologists headed by Inga Holube (Holube, Fredelake, Vlaming & Kollmeier, 2010). It is a review of the development of the International Speech Test Signal, which goes by the acronym ISTS. Now, I realize that some things that are labeled "international" really aren't all that international, but this really is. This signal is becoming more and more popular for probe-mic testing here in the U.S. In case this is the first time you're hearing about the ISTS, it was developed as part of the ISMADHA project (International Standards for Measuring Advanced Digital Hearing Aids) for the EHIMA (European Hearing Instrument Manufacturers' Association). The signal consists of concatenated speech from six female speakers, each speaking a different language. The term "concatenated" has nothing to do with preparing wine for consumption. It simply means taking two or more separately located things, in this case small speech segments, and placing them side by side. The result is a non-intelligible real-speech signal that has all the most relevant properties of natural speech, yet is not linked to a specific language.
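To make "concatenated" concrete, here is a toy Python sketch that chains short segments drawn from six talkers so that no two consecutive segments come from the same talker. It only illustrates the general idea and is not the actual ISTS construction procedure. The talker names are hypothetical, and the "segments" are placeholder lists of numbers standing in for snippets of recorded speech.

```python
import random


def concatenate_segments(talkers, n_segments, seed=0):
    """Chain short segments drawn from several talkers into one signal.

    Toy illustration only: no two consecutive segments come from the same
    talker. This is NOT the actual ISTS construction procedure.
    """
    rng = random.Random(seed)
    signal, order = [], []
    previous = None
    for _ in range(n_segments):
        talker = rng.choice([t for t in talkers if t != previous])
        segment = rng.choice(talkers[talker])
        signal.extend(segment)
        order.append(talker)
        previous = talker
    return signal, order


if __name__ == "__main__":
    # Six hypothetical talkers, each with three 10-sample stand-in "speech segments".
    talkers = {
        f"talker_{i}": [[float(i * 10 + k)] * 10 for k in range(3)]
        for i in range(1, 7)
    }
    signal, order = concatenate_segments(talkers, n_segments=12)
    print(len(signal), order)
```

Switching talkers (and languages) every segment is what keeps the result speech-like in its acoustics while leaving it unintelligible.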

The second article I'd like to mention also has to do with test signals that might be used for hearing aid verification. In Ear and Hearing, Gitte Keidser and colleagues from the NAL compared the spectra of nine different input signals available from different equipment (Keidser, Dillon, Convery & O'Brien, 2010). As you might expect, they found that the spectra are not all the same, with differences as large as 8 dB. Why is this important for the clinician? At one time (and maybe still today) there was a probe-mic system that allowed you to use one of three different speech or speech-like input signals (which had different spectra), but the displayed prescriptive fitting targets never changed when the input signal changed. They can't all be right!
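The comparison Keidser and colleagues made boils down to lining up band levels for each input signal and finding the spread at each frequency. The sketch below does exactly that with hypothetical band levels; these are not the spectra reported in the study, and the function name is hypothetical.

```python
def band_spread(spectra):
    """Largest level difference (dB) among input signals in each frequency band."""
    per_band = zip(*spectra.values())
    return [max(levels) - min(levels) for levels in per_band]


if __name__ == "__main__":
    bands_hz = [250, 500, 1000, 2000, 4000]
    # Hypothetical band levels (dB SPL); NOT the spectra measured by Keidser et al.
    spectra = {
        "signal_A": [60, 62, 58, 52, 46],
        "signal_B": [58, 63, 59, 55, 50],
        "signal_C": [62, 61, 56, 50, 44],
    }
    spread = band_spread(spectra)
    for f, d in zip(bands_hz, spread):
        print(f"{f} Hz: {d} dB")
    print("largest difference:", max(spread), "dB")
```

Roughly speaking, for a hearing aid operating linearly, a spread like this carries straight through to the measured ear canal SPL, which is why fixed targets cannot be right for every input signal.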

This general issue is related to some of the work we've been doing in Todd Ricketts' lab at Vanderbilt (Ricketts & Mueller, 2009; Picou, Ricketts, & Mueller, 2010). Years ago, when we primarily used the REIG for verification, with a measured REUG and REAG used for the calculation, the shape of the input signal wasn't as important. Today, most dispensers who use probe-mic measures for verification use the REAR, often referred to as "speech mapping" (Mueller & Picou, 2010). We've found that when conducting speech mapping, not only do the ear canal SPL fitting targets displayed by the probe-mic system need to be calculated for the specific input signal being used, but different systems may also analyze the measured ear canal signal differently, which may require the fitting targets to be altered as well.
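Why does the input signal matter so much for speech mapping? In dB terms, the real-ear aided response equals the input level plus the REAG, and the REAG equals the REUG plus the insertion gain. A REAR target therefore has the input spectrum built into it. The minimal sketch below assumes a fixed prescribed insertion gain per band, which is a simplification because nonlinear prescriptions also change gain with input level. All values are hypothetical.

```python
def rear_targets(input_spl, reug, target_reig):
    """Ear canal SPL (REAR) targets per band: input level + REUG + insertion gain.

    Simplifying assumption: target_reig is fixed per band and does not
    depend on input level.
    """
    return {f: input_spl[f] + reug[f] + target_reig[f] for f in input_spl}


# Two hypothetical input signals that differ only at 2000 Hz.
input_a = {500: 60, 1000: 58, 2000: 52}
input_b = {500: 60, 1000: 58, 2000: 60}
reug = {500: 2, 1000: 3, 2000: 12}          # hypothetical open-ear gain (dB)
target_reig = {500: 10, 1000: 15, 2000: 20}  # hypothetical prescribed gain (dB)

print(rear_targets(input_a, reug, target_reig))
print(rear_targets(input_b, reug, target_reig))
```

The same prescription yields targets 8 dB apart at 2000 Hz. A system that keeps the targets fixed while the input signal changes is wrong for at least one of the signals.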

12. Okay, I will keep those articles in mind, but how about something that relates a little more directly to my practice?

Palmer: One article that I thought had some great "food for thought" relating to clinical verification was the one by Sergei Kochkin, along with a big group of other authors—part of the MarkeTrak VIII findings (Kochkin et al., 2010). That article attempted to answer the age-old question: "Does following best practice verification guidelines lead to more satisfied hearing aid users?" Is that something we should talk about now, Gus?

Mueller: We could, but I think that article falls more in the validation/outcome measure category, so I have it on my list to talk about at the end. But I agree—it's great information, and does have direct verification implications.

13. I guess I'll have to wait. So, I see by your outline that the next category is pediatrics. What's new in that area?

Mueller: Historically, the pediatric hearing aid articles have been Catherine's area (partly because she likes to show photos of her sons), so I see no reason to change things now.

Palmer: Gus is right, and for recent pictures of my boys (on safari in South Africa) you'll have to access our companion online talk. But for those who actually care more about the data, I'd like to first talk about a paper by Munro and Howlin (2010) from Ear and Hearing. As you know, when we fit hearing aids to infants and toddlers, we are concerned with the RECD (real-ear-to-coupler difference) so that we can be sure we are delivering the correct sound pressure level at the eardrum for each child, based on evidence-based target recommendations. As a side note, the RECD is a concern in all of our diagnostic activities with infants and children as well.
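As a reminder of the arithmetic behind the RECD, here is a minimal sketch, assuming per-band levels in dB. The RECD is the SPL measured in the ear canal minus the SPL measured in a 2-cc coupler for the same signal. Adding it to a hearing aid's coupler output predicts the SPL that aid will produce in that child's ear. All numbers are hypothetical.

```python
def measure_recd(real_ear_spl, coupler_spl):
    """RECD per band: SPL in the ear canal minus SPL in the 2-cc coupler."""
    return {f: real_ear_spl[f] - coupler_spl[f] for f in real_ear_spl}


def predicted_ear_spl(coupler_output, recd):
    """Predict ear canal SPL for a hearing aid from its coupler output plus the RECD."""
    return {f: coupler_output[f] + recd[f] for f in coupler_output}


# Hypothetical measurements (dB SPL) for one ear, same signal in both conditions.
real_ear = {500: 75, 1000: 78, 2000: 84, 4000: 88}
coupler = {500: 70, 1000: 72, 2000: 76, 4000: 78}
recd = measure_recd(real_ear, coupler)

# Hypothetical hearing aid output measured in the coupler.
aid_in_coupler = {500: 90, 1000: 95, 2000: 100, 4000: 98}
print(predicted_ear_spl(aid_in_coupler, recd))
```

Because a small ear canal raises the RECD, the same coupler output can produce considerably more SPL at a young child's eardrum. That is why an individual measurement matters.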

This study compared RECD values between the left and right ears of 16 adults, 17 school-age children, and 11 preschoolers. All subjects had clear ear canals and normal middle ear function. The researchers used the subjects' own custom earmolds for the measurements (the signal was delivered to the ear via the earmold). They found that for 80-90% of the subjects, the difference between ears was less than 3 dB. The group's final recommendation was that if the RECD can be measured in only one ear because of limited patient cooperation, it is better to use that value for the other ear as well than to use average published data. This should be a relief for clinicians who have managed to get the first RECD only to then have an unhappy baby on their hands: stop there, and use this value for the other ear as well.
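The recommendation amounts to a simple order of preference, sketched below. The function name, the data layout, and the "age average" fallback values are hypothetical illustrations and are not taken from any fitting software.

```python
def recd_for_ear(measured, ear, age_average):
    """Choose the RECD to use for one ear (hypothetical helper).

    Preference order, following the recommendation summarized above:
    1. a value measured in that ear,
    2. a value measured in the other ear of the same patient,
    3. age-appropriate average (published) values.
    Returns (recd_by_band, source_label).
    """
    other = "left" if ear == "right" else "right"
    if measured.get(ear) is not None:
        return measured[ear], "measured"
    if measured.get(other) is not None:
        return measured[other], "other ear"
    return age_average, "age average"


# Hypothetical case: the baby tolerated the right-ear measurement only.
age_average = {500: 5, 1000: 7}  # hypothetical average values (dB)
measured = {"right": {500: 6, 1000: 8}, "left": None}
print(recd_for_ear(measured, "left", age_average))
```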

I'd like to mention the work by Stelmachowicz et al. (2010) in Ear and Hearing as well. Clinicians working with pediatric patients face decisions about which advanced features to activate for this population and which are better left "off" while the child is developing speech and language. There are certainly conflicting opinions about these decisions at this point. As Dr. Stelmachowicz and her group always do, rather than simply offering an opinion, they collected data to answer the question.

They hypothesized that digital noise reduction (spectral subtraction) might alter or degrade speech signals in some way. The concern was that, although this might not significantly affect adults, it might affect children who are still developing language skills. Sixteen children participated: eight 5- to 7-year-olds and eight 8- to 10-year-olds. The children had mild to moderately severe hearing loss and wore bilateral hearing aids with noise reduction processing operating independently in 16 bands. The test stimuli were nonsense syllables and words (to mimic the learning of new items).
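For readers who have not seen spectral subtraction spelled out, here is a minimal sketch of the generic textbook idea applied per band. It assumes a noise power estimate is already available for each band. The estimated noise power is subtracted from the noisy power, and the band is attenuated in proportion, down to a floor. This is not the algorithm in the hearing aids used by Stelmachowicz et al. (2010). The 12 dB floor is a hypothetical choice, and only three bands are shown instead of 16 for brevity.

```python
import math


def spectral_subtraction_gains(band_power, noise_power, floor_db=-12.0):
    """Per-band gain (dB) from generic power spectral subtraction.

    For each band, the noise estimate is subtracted from the noisy power;
    the gain is the ratio of remaining power to original power, expressed
    in dB and limited to floor_db (a hypothetical maximum attenuation).
    """
    gains = []
    for p, n in zip(band_power, noise_power):
        if p <= 0:
            gains.append(floor_db)
            continue
        remaining = max(p - n, 0.0)
        gain_db = 10 * math.log10(remaining / p) if remaining > 0 else float("-inf")
        gains.append(max(gain_db, floor_db))
    return gains


# Hypothetical linear power values: clean band, half-noise band, all-noise band.
print(spectral_subtraction_gains([1.0, 2.0, 1.0], [0.0, 1.0, 1.0]))
```

Bands dominated by noise are turned down the most. Any speech energy in those bands is turned down along with the noise, which is exactly why the researchers worried about degraded speech cues for children.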

Their findings were consistent with previous findings in adults. On average, the form of noise reduction used in this study (spectral subtraction) did not have a negative effect on the overall perception of nonsense syllables or words in either age group. Also consistent with the adult studies, on average the noise reduction did not improve speech understanding either. Stelmachowicz and colleagues went on to analyze the data for individual subjects and found that some children were negatively affected by the noise reduction and some were positively affected. So although there was no significant effect in either direction on average, perception may be affected for an individual child. Currently, we don't have a quick way to test this before making a signal processing recommendation for a specific child.
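A group mean near zero can hide real effects in both directions. The sketch below summarizes per-child differences (score with noise reduction minus score without). The numbers are invented purely for illustration and are not the study's data. The threshold for counting a change is a hypothetical parameter.

```python
from statistics import mean


def summarize_effect(differences, threshold=0.0):
    """Summarize per-subject differences (NR on minus NR off).

    Returns the group mean plus the number of subjects who did better
    or worse by more than threshold (a hypothetical cutoff).
    """
    return {
        "mean": mean(differences),
        "better": sum(1 for d in differences if d > threshold),
        "worse": sum(1 for d in differences if d < -threshold),
    }


# Invented percentage-point differences for eight hypothetical children.
print(summarize_effect([6, -5, 2, -4, 0, 3, -2, 0]))
```

Here the average effect is zero, yet three children did better and three did worse.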

14. I'll keep that in mind. How about prescriptive fittings for kids? Didn't I hear that last year an entire journal was devoted to the results of the big DSL and NAL comparative study?

Palmer: You heard right. What you're referring to is a series of articles contained in a supplement of IJA (International Journal of Audiology, 2010, 49 Suppl 1), but for our purposes here, I'll summarize what I think are the most important points. If you're interested, the complete protocols used by the Australian and Canadian groups are presented in some of the papers in that supplement.

What everyone was most interested in was the large study designed to directly compare the NAL-NL1 prescriptive fitting formula with the DSL [i/o] prescriptive fitting formula. A large group of children in Australia and Canada was fitted with both prescriptive methods over time, and a variety of outcome measures were employed. The bottom line was that there was no significant overall preference for either prescription. In general, subjects needed a bit more gain than NAL provided in some situations and a bit less gain than DSL provided in others. Specifically, with DSL, performance was better for soft sounds, for sounds from behind the listener, and for children with greater hearing loss; with NAL, performance was better in noise.

15. That's all interesting, but neither the DSL nor the NAL algorithm is the same now as when that research was completed. So what do these results really mean?

Palmer: Good point. Although the results were just published last year, the data were collected several years ago. I suspect that in part, the results of this large study impacted the development of today's NAL-NL2 and DSL v5. Although the two fitting philosophies remain different, I believe it is safe to say that the final gain/output that is recommended for children with these two fitting strategies is more similar now than before.

16. I guess we've made it to our final category—validation and real-world outcomes. What do you have for me related to this topic?

Palmer: There were a couple that I liked. The first is a paper by Bill Noble (2010), published in JAAA. He used the SSQ (Speech, Spatial and Qualities of Hearing Scale; Gatehouse & Noble, 2004) to examine the real-world benefits of bilateral hearing aid fittings and bilateral cochlear implants. I'll just focus on the hearing aid part of the research.

As you know, even though we consider bilateral fitting standard practice, there isn't as much real-world evidence supporting it as you might think. Noble conducted a retrospective study examining SSQ data from a group of individuals fitted unilaterally (69 individuals) and a group fitted bilaterally (34 individuals). Scores were obtained on the 49 SSQ items, which are grouped into ten subscales. Individuals using two hearing aids showed an advantage in a variety of challenging conditions where we would expect binaural hearing to be important. Consistent with the previous article reviewed by Gus, listening effort was examined through some of the SSQ questions, and using two hearing aids rather than one did indeed reduce listening effort.
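SSQ items are rated on a 0-10 scale, and subscale scores summarize groups of items. The minimal sketch below averages the answered items within each subscale. The three subscales and their item numbers are hypothetical placeholders, not the actual SSQ item assignments.

```python
from statistics import mean


def subscale_scores(responses, subscales):
    """Average 0-10 item ratings within each subscale, skipping unanswered items.

    responses: {item_number: rating or None}
    subscales: {subscale_name: [item_number, ...]}  (hypothetical mapping)
    """
    scores = {}
    for name, items in subscales.items():
        answered = [responses[i] for i in items if responses.get(i) is not None]
        scores[name] = mean(answered) if answered else None
    return scores


# Hypothetical subscales and one respondent's ratings (item 3 left blank).
subscales = {"speech in noise": [1, 2, 3], "localization": [4, 5], "listening effort": [6]}
responses = {1: 6, 2: 7, 3: None, 4: 4, 5: 6, 6: 8}
print(subscale_scores(responses, subscales))
```

Group comparisons such as unilateral versus bilateral users are then made on these subscale scores rather than on individual items.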

Considering the study by Kobler et al. (2010) that I reviewed earlier, some of the individuals originally fitted unilaterally may not be able to take advantage of these binaural cues. So, taken together, these two studies suggest that it would be worthwhile to identify in advance who should be a bilateral hearing aid user, and then to encourage those patients in this decision based on the data from Noble (2010), which indicate that they will see improvement in important areas. In fact, the next study I'd like to discuss identifies those very areas.

This next study also examined real-world benefit from hearing aids. It is from Louise Hickson and colleagues, published in IJA (Hickson, Clutterbuck, & Khan, 2010). First, there is a quote in this paper that I really like, so I thought I'd share it:

"A hallmark of quality clinical practice in audiology should be the ongoing measurement of outcomes in order to improve practice."

We have spent a lot of time focused on verification in the past decade, but it is important that we don't forget about outcomes. Demonstrating outcomes is going to become more and more important in the current health care climate.

These authors sampled a very large group of individuals (1,653), looking for the factors associated with scores on the IOI-HA (International Outcome Inventory for Hearing Aids; Cox & Alexander, 2002). The idea was that clinicians would then know which factors to pay attention to in the clinic in order to improve outcomes. Seventy-eight percent of respondents used bilateral hearing aids and 81% used digital technology. The questionnaires included the IOI-HA, plus questions about satisfaction with hearing aid performance in different listening situations, hearing aid attributes, and clinical service.

A regression analysis was used to examine the data, and a combination of factors explained 57% of the variance in IOI-HA scores. Higher IOI-HA scores were associated with aid fit/comfort, clarity of sound, comfort for loud sounds, and success communicating with one person, in small groups, in large groups, and outdoors. So, we now have data indicating that as long as we return hearing to "normal" and everything is comfortable, the outcome measures should be good!
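"Explained 57% of the variance" refers to the R² of the regression model. The sketch below fits an ordinary least squares model with an intercept and reports R². It uses NumPy and tiny invented data sets. It does not reproduce Hickson et al.'s model or their predictors.

```python
import numpy as np


def r_squared(predictors, outcome):
    """Fit ordinary least squares (with intercept) and return R^2.

    predictors: n x k array-like of predictor values
    outcome:    length-n array-like of outcome scores
    """
    X = np.column_stack([np.ones(len(outcome)), np.asarray(predictors, dtype=float)])
    y = np.asarray(outcome, dtype=float)
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ coef
    ss_res = float(residuals @ residuals)       # variance left unexplained
    ss_tot = float(((y - y.mean()) ** 2).sum())  # total variance around the mean
    return 1.0 - ss_res / ss_tot


# Invented data: two predictor ratings per respondent and an outcome score.
x = [[1, 2], [2, 1], [3, 4], [4, 3], [5, 5]]
y_exact = [1 + 2 * a + 3 * b for a, b in x]  # perfectly predictable outcome
y_noisy = [9, 9, 18, 19, 25]                 # same trend plus noise
print(round(r_squared(x, y_exact), 6), round(r_squared(x, y_noisy), 3))
```

An R² of 0.57 means that 43% of the variation in outcome scores comes from things the predictors did not capture.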

17. That's an impressive sample size, but when I'm listening to you relate the results, it sort of sounds like things we already knew. What's the new message?

Palmer: You're right, this isn't really a new message, although it is nice to have solid data to support what we have believed all along. I think two things to pull out of this are the great importance of physical fit/comfort and acoustic comfort (comfort with loud sounds). These are things we really can do something about for every individual, and things that should be dealt with before the individual leaves the clinic. The other issues (clarity, success communicating in a variety of settings) will vary from patient to patient, and may require specific counseling.

18. Okay, that's helpful. You promised earlier that we would get back to that Sergei Kochkin article. I'm running out of allotted questions.

Mueller: Well, now is the time. The Kochkin article I'm going to talk about is from the MarkeTrak VIII data, and was published in the Hearing Review (Kochkin et al., 2010). I'm not going to go into all the survey details, but the analysis that I'll be talking about is from 1,141 experienced and 884 newer users of amplification. Mean age was 71 years, and mean hearing aid age was 1.8 years; all hearing aids were less than four years old.

As you know, nearly all MarkeTrak surveys assess satisfaction with amplification, but this survey added some questions about the hearing aid fitting process, which provided data to examine some potential interactions. The respondents were given descriptions of various tests that could have been administered during their fitting, verification, or validation, and then stated whether they had received each test or treatment. For the most part, the nine tests/procedures on the list were nothing more than what would be expected of someone following "Best Practice" guidelines: loudness discomfort testing, probe-mic measures, speech-in-noise testing, subjective judgments, outcome measures, etc. The procedures even included the most basic "hearing threshold testing in a sound booth." There also were questions related to auditory training and rehabilitative audiology.

19. I see where you're going with this. You could then determine if more testing leads to increased satisfaction, which is something I've always wondered about.

Mueller: Exactly. Kochkin measured overall success with hearing aids using a statistical composite of several factors, including hearing aid use, hearing improvement, problem resolution for different listening situations and purchase recommendations. To derive a total measure of success, a factor analysis was conducted on the seven outcome measures, resulting in a single index that was standardized to a mean of 5 and standard deviation (s.d.) of 2.
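Once a composite is rescaled to a mean of 5 and an s.d. of 2, "more than 1 s.d. above the mean" simply means an index above 7, and "more than 1 s.d. below" means below 3. The sketch below shows that rescaling and grouping step only. It assumes raw composite scores are already available and does not reproduce Kochkin's factor analysis. It uses the population s.d., which is a choice made here for illustration.

```python
from statistics import mean, pstdev


def rescale(scores, target_mean=5.0, target_sd=2.0):
    """Linearly rescale scores to the target mean and (population) s.d."""
    m = mean(scores)
    sd = pstdev(scores)
    return [target_mean + target_sd * (s - m) / sd for s in scores]


def success_group(index, target_mean=5.0, target_sd=2.0):
    """Label an index as 'high' (> mean + 1 s.d.), 'low' (< mean - 1 s.d.), or 'middle'."""
    if index > target_mean + target_sd:
        return "high"
    if index < target_mean - target_sd:
        return "low"
    return "middle"


# Invented raw composite scores for five respondents.
indices = rescale([1.0, 2.0, 3.0, 4.0, 10.0])
print([round(v, 2) for v in indices])
print([success_group(v) for v in indices])
```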

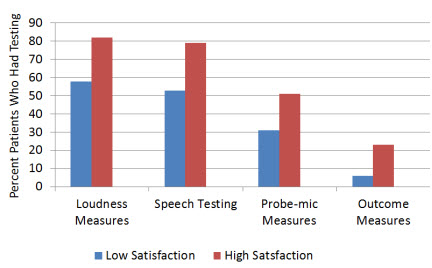

To examine the relationship between success and the testing involved in the hearing aid fitting process, he compared the people who were more than 1 standard deviation above mean success (n=407) with those who were more than 1 s.d. below (n=331). To show you how this works, I've pulled out four tests/measures from the Kochkin data.

In Figure 5, adapted from Kochkin et al. (2010), you see the results for loudness discomfort testing, probe-mic measures, speech recognition benefit testing, and an outcome measure: more or less the basic things that are part of a standard verification/validation protocol. Notice that people in the "high success" group were more likely to have received each of these tests. For example, 68% of respondents in the total sample received loudness discomfort testing, but 82% of the high success group received this testing.

Figure 5. Illustration of the relationship between hearing aid satisfaction and the testing conducted during the verification/validation process. The "Low Satisfaction" group was more than 1 s.d. below mean satisfaction; the "High Satisfaction" group was more than 1 s.d. above mean satisfaction (modified from Kochkin et al., 2010).
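The comparison in Figure 5 boils down to a percentage computed three ways: for everyone, for the high success group, and for the low success group. Here is a minimal sketch, assuming each respondent is recorded as a (success index, received test) pair on the mean 5, s.d. 2 scale. The eight records are invented and do not come from MarkeTrak VIII.

```python
def percent_tested(records, low_cut=3.0, high_cut=7.0):
    """Percent of respondents who received a given test, overall and by success group.

    records: (success_index, received_test) pairs, with success_index on a
    mean 5, s.d. 2 scale, so high_cut=7 and low_cut=3 are 1 s.d. above and
    below the mean.
    """
    def pct(rows):
        return 100.0 * sum(1 for _, tested in rows if tested) / len(rows) if rows else None

    high = [r for r in records if r[0] > high_cut]
    low = [r for r in records if r[0] < low_cut]
    return {"total": pct(records), "high": pct(high), "low": pct(low)}


# Invented respondents: (success index, received loudness discomfort testing?)
records = [(8.0, True), (7.5, True), (9.0, False), (5.0, True),
           (4.0, False), (2.0, False), (2.5, True), (1.0, False)]
print(percent_tested(records))
```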

Now, with this type of retrospective study, we cannot be certain that it was the additional testing that led to the increased satisfaction, but it's difficult to come up with a plausible alternative explanation. It seems rather unlikely that dispensers would first determine whether their patients were "happy" and then conduct more testing if they were. I suspect, however, that in many cases it wasn't the testing per se that made the difference (e.g., the frequency response probably wasn't changed based on the aided QuickSIN findings), but rather that, armed with the information gathered from this testing, the dispenser became a better counselor. And simply by participating in the testing, the patients gained a better understanding of how hearing aids work.

It's also important to point out that it was not just each individual procedure that was significantly related to satisfaction; the results revealed a layering effect. Kochkin used a weighted protocol to compare the extent of testing administered with the level of success with hearing aids. As each additional verification measure was added, satisfaction increased incrementally; the relationship was quite dramatic. Check it out for yourself in the original article (Kochkin et al., 2010).
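The layering analysis can be pictured as giving each patient a protocol score (a weighted count of the procedures received) and then looking at mean success at each score level. The sketch below does exactly that. The weights and patient records are hypothetical and are not the weights Kochkin used.

```python
from collections import defaultdict
from statistics import mean


def protocol_score(tests_received, weights):
    """Weighted count of fitting procedures a patient received."""
    return sum(weights[t] for t in tests_received)


def mean_success_by_score(patients, weights):
    """Group patients by protocol score and return mean success per score level."""
    groups = defaultdict(list)
    for tests, success in patients:
        groups[protocol_score(tests, weights)].append(success)
    return {score: mean(values) for score, values in sorted(groups.items())}


# Hypothetical weights and patients: (set of tests received, success index).
weights = {"loudness discomfort": 1, "probe-mic": 2, "speech in noise": 1, "outcome measure": 1}
patients = [({"probe-mic"}, 4.0), ({"probe-mic", "outcome measure"}, 6.0),
            (set(), 3.0), ({"outcome measure"}, 3.5)]
print(mean_success_by_score(patients, weights))
```

A layering effect would show up as mean success climbing steadily with the protocol score, as it does in this invented example.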

20. That seems like a pretty good way to close out the hearing aid literature of 2010!

Mueller: You know, I think you're right. It sort of goes like this: You work a little harder and follow best practice guidelines and guess what? Your patients are more satisfied! What a concept. Who knows, maybe it will catch on.

References

American Academy of Audiology. (2006). Guidelines for the audiological management of adult hearing impairment. Audiology Today, 18(5).

Bentler, R., & Cooley, L. (2001). An examination of several characteristics that affect the prediction of OSPL90. Ear & Hearing, 22, 3-20.

Cox, R. M., & Xu, J. (2010). Short and long compression release times: Speech understanding, real-world preferences, and association with cognitive ability. Journal of the American Academy of Audiology, 21(2), 121-138.

Cox, R.M., & Alexander, G.C. (2002). The International Outcome Inventory for Hearing Aids (IOI-HA): Psychometric properties of the English version. International Journal of Audiology, 41(1), 30-35.

Gatehouse, S., & Noble, W. (2004). The Speech, Spatial and Qualities of Hearing Scale (SSQ). International Journal of Audiology, 43(2), 85-99.

Hafter, E. R. (2010). Is there a hearing aid for the thinking person? Journal of the American Academy of Audiology, 21, 594-600.

Hamilton, A.-M., & Munro, K. J. (2010). Uncomfortable loudness levels in experienced unilateral and bilateral hearing aid users: Evidence of adaptive plasticity following asymmetrical sensory input? International Journal of Audiology, 49(9), 667-671.

Hickson, L., Clutterbuck, S., & Khan, A. (2010). Factors associated with hearing aid fitting outcomes on the IOI-HA. International Journal of Audiology, 49(8), 586-595.

Holube, I., Fredelake, S., Vlaming, M., & Kollmeier, B. (2010). Development and analysis of an International Speech Test Signal (ISTS). International Journal of Audiology, 49(12), 891-903.

Huckvale, M., & Frasi, D. (2010, June). Measuring the effects of noise reduction on listening effort. Proceedings of the Audio Engineering Society 39th International Conference, Hillerød, Denmark.

Johnson, E. E., & Ricketts, T. A. (2010). Dispensing rates of four common hearing aid product features: associations with variations in practice among audiologists. Trends in Amplification, 14(1), 12-45.

Kamm, C., Dirks, D.D., & Mickey, M.R. (1978). Effect of sensorineural hearing loss on loudness discomfort level and most comfortable loudness judgments. Journal of Speech & Hearing Research, 21(4), 668-681.

Keidser, G., Dillon, H., Convery, E., & O'Brien, A. (2010). Differences between speech-shaped test stimuli in analyzing systems and the effect on measured hearing aid gain. Ear & Hearing, 31(3), 437-440.

Kobler, S., Lindblad, A. C., Olofsson, A., & Hagerman, B. (2010). Successful and unsuccessful users of bilateral amplification: Differences and similarities in binaural performance. International Journal of Audiology, 49(9), 613-627.

Kochkin, S., Beck, D. L., Christensen, L. A., Compton-Conley, C., Fligor, B.J., Kricos, P.B., et al. (2010). MarkeTrak VIII: The impact of the hearing healthcare professional on hearing aid user success. Hearing Review, 17(4), 12-34.

Meister, H., Grugel, L., Walger, M., von Wedel, H., & Meis, M. (2010). Utility and importance of hearing-aid features assessed by hearing-aid acousticians. Trends in Amplification, 14(3), 155-163.

Mueller, H.G. (2010). Three pre-tests: What they do and why experts say you should use them more. Hearing Journal, 63(4), 17-24.

Mueller, H.G., Johnson, E., & Weber, J. (2010, March 29). Fitting hearing aids: A comparison of three pre-fitting speech tests. AudiologyOnline, Article 2332. Retrieved from the Articles Archive on https://www.audiologyonline.com.

Mueller, H.G., & Picou, E. (2010). Survey examines popularity of real-ear probe-microphone measures. Hearing Journal, 63(5), 27-32.

Munro, K. J., & Howlin, E. M. (2010). Comparison of real-ear to coupler difference values in the right and left ear of hearing aid users. Ear & Hearing, 31(1), 146-150.

Munro, K.J., & Trotter, J.H. (2006). Preliminary evidence of asymmetry in uncomfortable loudness levels after unilateral hearing aid experience: Evidence of functional plasticity in the adult auditory system. International Journal of Audiology, 45(12), 684-688.

Noble, W. (2010). Assessing binaural hearing: Results using the speech, spatial and qualities of hearing scale. Journal of the American Academy of Audiology, 21(9), 568-574.

O'Brien, A., Yeend, I., Hartley, L., Keidser, G., & Nyffeler, M. (2010). Evaluation of frequency compression and high-frequency directionality. Hearing Journal, 63(8), 32, 34-37.

Picou, E., Ricketts, T.A., & Mueller, H.G. (2010, August). The effect of probe-microphone equipment on the NAL-NL1 prescriptive gain provided to the patient. Presented at the International Hearing Aid Research Conference, Lake Tahoe, CA.

Ricketts, T.A., & Mueller, H.G. (2009). Whose NAL-NL fitting method are you using? Hearing Journal, 62(7), 10-17.

Sarampalis, A., Kalluri, S., Edwards, B., & Hafter, E. (2009). Objective measures of listening effort: Effects of background noise and noise reduction. Journal of Speech, Language, and Hearing Research, 52(5), 1230-1240.

Stelmachowicz, P., Lewis, D., Hoover, B., Nishi, K., McCreery, R., & Woods, W. (2010). Effects of digital noise reduction on speech perception for children with hearing loss. Ear & Hearing, 31(3), 345-355.

Wu, Y.H. (2010). Effect of age on directional microphone hearing aid benefit and preference. Journal of the American Academy of Audiology, 21, 78-89.

Wu, Y. H., & Bentler, R. A. (2010). Impact of visual cues on directional benefit and preference: Part II--field tests. Ear & Hearing, 31(1), 35-46.