From the Desk of Gus Mueller

I think we all can agree that providing appropriate audibility of soft sounds is one of the most important goals when fitting hearing aids. In general, we are thinking about audibility of important speech sounds, in particular the higher frequencies, to improve speech understanding. But there is also another key reason for restoring audibility—something called the soundscape.

You probably don’t have many patients walking in the door with a request for soundscape enhancement, but restoring it for them is a service they certainly will thank you for later. If you’re not familiar with the term, a soundscape is the combination of the many sounds that make up our listening environment, sometimes referred to as acoustic ecology. It could consist of what we hear around us outdoors (birds singing, the wind blowing) or common indoor sounds such as the HVAC system, dishwasher, or the gentle purr of a wine cooler in action. We all have somewhat different soundscapes, which can relate meaning, comfort, emotion and memories. That is, if our soundscapes are audible!

We’ll be reminded in this month’s 20Q that proprietary “Quick-Fit” algorithms are not very good at restoring audibility for soft sounds, even though their popularity seems to persist. Of course, it’s easy for us to fix this through careful real-ear verification, and while improving speech understanding usually will be our primary goal, soundscapes can be pretty important too.

With a focus on audibility, our guest author this month is Ron Leavitt, AuD, owner of the Corvallis Hearing Center, OR. Over the years Dr. Leavitt has held academic positions with the University of Arizona, Western Oregon University and Oregon State University. He has received the Outstanding Teaching Award from Beltone, and was named the Best Audiologist in Western US by Rayovac.

In 1988, Dr. Leavitt founded the Oregon Association for Better Hearing, and since that time he has conducted nearly 400 monthly seminars for the public on hearing healthcare research. Ron also has been involved in several applied clinical research studies, some of which related to the negative impact of not providing adequate audibility in hearing aid fittings. Read on to hear about the interesting findings that he shares with us in this month’s 20Q.

Gus Mueller, PhD

Contributing Editor

Browse the complete collection of 20Q with Gus Mueller CEU articles at www.audiologyonline.com/20Q

20Q: In Pursuit of Audibility for People with Hearing Loss

Learning Outcomes

After reading this article, professionals will be able to:

- Describe audibility issues that hearing aid users face.

- List tools that can be used to determine why hearing aid users are not reaching optimized hearing.

- Describe how functional near infrared spectroscopy (fNIRS) can be used to aid in audibility.

Ron Leavitt

1. Have you been on this pursuit of audibility for some time?

Yes. It all started early in my career when I became familiar with the work of David Pascoe (1975). He and his colleagues at Washington University Medical School were very interested in aided audibility and specifically recommended that, to optimize word recognition for our patients, we needed to achieve something he called a uniform hearing level or UHL. The essence of this UHL concept was to achieve aided soundfield thresholds of 20 dB HL between 500 and 4000 Hz.

Unfortunately, hearing aids of that era were not digitally programmable, and wide dynamic range compression had not yet become a standard method of processing the input signal. The end result of these hearing aid design limitations was that if you achieved anything near the 20 dB UHL soundfield thresholds, the resulting output for average and loud sounds was much too high, sometimes too high for almost anyone to tolerate.

2. Are things much better today?

Well, yes and no. The hearing aids of today certainly have the technology and programming capabilities that allow us to obtain appropriate audibility, even for most severe losses. We have well-validated prescriptive fitting methods to provide guidelines showing the path to optimum audibility. We also have probe-mic measures to ensure that the desired audibility is present based on the patient’s hearing loss.

3. You said yes and no. What’s the “no” component?

I can best answer that by describing a study we did a few years ago (Leavitt, Bentler and Flexer, 2017). In this study we looked at a total of 97 patients (176 hearing aid fittings) from 24 clinics throughout Oregon. These patients were experienced hearing aid users (mean age 75 years) who came to our clinic, but had been fitted elsewhere. Nine of these facilities were staffed exclusively by hearing instrument specialists, and the other 15 were medical centers, otolaryngology clinics, and private audiology practices staffed by AuDs. These patients were using hearing aids from 16 different manufacturers. Probe-mic testing was conducted in our clinic using 50-, 60- and 75-dB SPL real speech inputs (male passage of Verifit). The deviations (rms error) from NAL-NL2 targets were then calculated.
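The rms error mentioned above can be illustrated with a minimal sketch. It assumes the deviation is computed as measured real-ear output minus the prescriptive target at each test frequency; the function name, the frequency list, and all dB values are hypothetical and are not data from the study.

```python
import math

def rms_deviation(measured_db, target_db):
    """Root-mean-square difference (in dB) between measured output and target."""
    if len(measured_db) != len(target_db):
        raise ValueError("measured and target must have the same length")
    squared = [(m - t) ** 2 for m, t in zip(measured_db, target_db)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical values for illustration only (not taken from the study)
frequencies_hz = [500, 1000, 2000, 3000, 4000]
target_db_spl = [60.0, 62.0, 65.0, 66.0, 64.0]    # assumed prescriptive targets
measured_db_spl = [58.0, 60.0, 55.0, 51.0, 47.0]  # assumed real-ear output

# Negative values mean the fitting is below target (under-fit) at that frequency
deviations = [m - t for m, t in zip(measured_db_spl, target_db_spl)]
error = rms_deviation(measured_db_spl, target_db_spl)

for f, d in zip(frequencies_hz, deviations):
    print(f"{f} Hz: {d:+.1f} dB re target")
print(f"rms deviation: {error:.1f} dB")
```

Because the rms value squares each deviation, it summarizes the size of the mismatch but not its direction; the signed per-frequency deviations are what show whether a fitting is under or over target.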

4. That’s a lot of testing. What did you find?

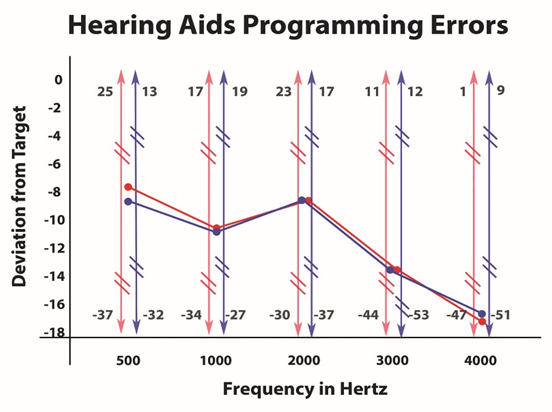

In short, a lot of people are using hearing aids that do not provide much benefit. Specifically, the most common fitting error observed was substantial under-fitting, particularly in the high frequencies where the most contributory speech cues are located (for reference see the 2010 Killion and Mueller count-the-dots audiogram). As can be seen in Figure 1, these under-fitting errors were not small in magnitude, averaging 10-17 dB below the NAL-NL2 target from 2000 Hz and above, with deviations as great as 30 dB or more in that same frequency range.

Figure 1. Deviations from the NAL-NL2 prescriptive targets based on the real-ear use-gain of 97 patients. (Courtesy of The Hearing Review)

When looking at these fitting errors, it would be reasonable to suspect that these 97 patients had severe or profound hearing losses, so that providing audibility in these critical frequency regions might not be possible. Such was not the case. The average patient in this review had a bilateral pure-tone average around 50 dB with a gently sloping configuration. In all, 97.7% of these hearing aid fittings failed to fall within the ±5 dB criterion that has been suggested by fitting guidelines. Of further concern, the fitting errors observed for the Big 6 manufacturers were equivalent to those of lesser-known manufacturers. One might hope that the newer hearing aids had fewer fitting errors than older hearing aids, but this was not true either. We also were curious whether the fitting errors by audiologists would be smaller than those observed for hearing instrument specialists; the degree of the mistakes was equal for the two groups.

5. You have me a little confused. You say we have probe-mic measures to verify audibility, yet nearly 98% of your patients were under-fit by more than 5 dB? How can this be?

The answer is very simple—I suspect that when most hearing aids are fitted, verification of audibility is not conducted. A survey by Mueller and Picou (2010) confirmed these suspicions. These authors showed that audiologist/dispenser self-reports regarding use of real-ear measures probably are inflated. These authors included a foil in their survey that asked how often hearing health care professionals were using a fictional test they termed the “Binaural Summation Index”. In this survey 21% of the audiologists and 28% of the hearing instrument specialists said they did this imaginary test at least sometimes, suggesting that the 59% of audiologists and 39% of dispensers who reported use of probe-mic measures were inflating the results. Moreover, even if we were to believe the Mueller and Picou original finding that 44% of hearing care providers routinely are conducting probe-mic testing, Mueller (2015) also points out that only 25% of the group surveyed reported that they are doing the testing to verify prescriptive targets. When this is taken into consideration, our match-to-target findings are not surprising.

6. In these data, you were looking at adults. What about the hearing aid fittings of children?

The findings are somewhat better, but not great. If you look at the data of McCreery, Bentler and Roush (2013) regarding hearing aid fittings for children, they found that only 45% of the hearing aids that they evaluated in 15 U.S. states met their ±5 dB target criterion. In that article, the authors noted that their data were somewhat optimistically skewed because of the findings from the University of North Carolina, where the majority of fittings met well-established prescriptive targets. Had these authors viewed the North Carolina data as an outlier, the number of appropriate fittings would have been even lower than 45%.

7. You mention the importance of probe-mic verification, but don’t manufacturers provide default programs that optimize audibility?

You might think so, but unfortunately no. This has been reported in several studies over the years (e.g., Abrams et al, 2012; Leavitt and Flexer, 2012; Sanders et al, 2015; Amlani, Pumford and Gessling, 2017; Valente et al, 2018; Quar, Umat and Chew, 2019). In the Valente et al (2018) study for example, they report that for soft speech inputs, the manufacturer’s default program under-fits by an average of 7 dB at 2000 Hz, 15 dB at 3000 Hz and 21 dB at 4000 Hz, when compared to NAL-NL2 targets.

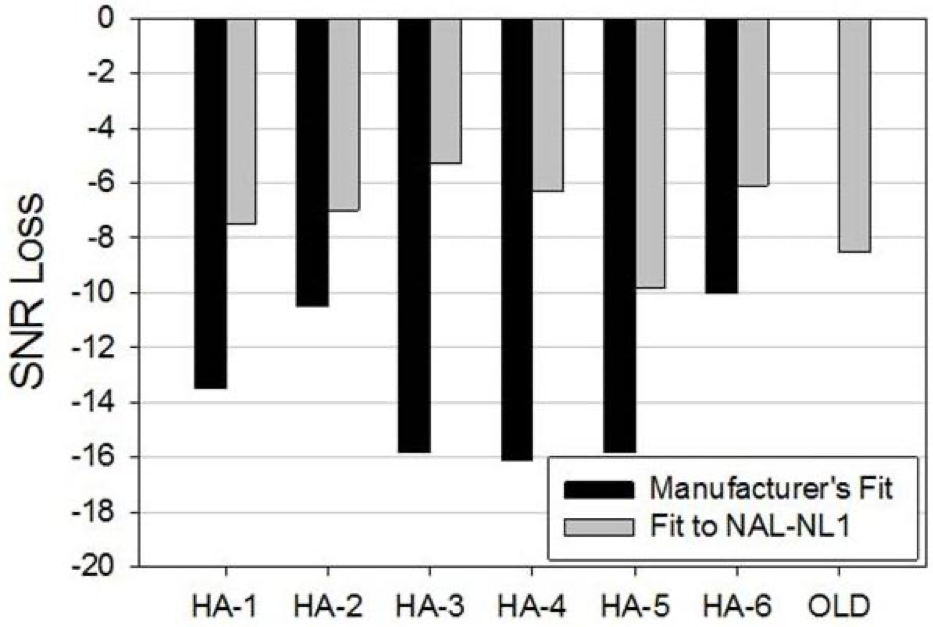

A few years back we set out to determine the extent to which these poor default fittings impact speech recognition. To do this we programmed the Big Six manufacturers’ premium-level hearing aids using each manufacturer’s recommended proprietary algorithm. In addition to the premium-level hearing aids, we also included one pair of analog programmable hearing aids with no directional microphones, no multichannel processing, and no noise reduction capabilities.

We first programmed each manufacturer’s premium-level hearing aids using their best-fit algorithm and completed bilaterally aided QuickSINs. We then reprogrammed these same premium-level hearing aids to NAL-NL1 targets using real-ear verification and administered the QuickSIN a second time. We also tested the old analog pair of hearing aids, programmed to NAL targets.

8. If the NAL targets are really the best, then we’d expect better performance with this fitting. Did that happen?

It certainly did, and to a greater extent than we even expected. When employing the Big Six manufacturers’ best-fit algorithms, the old analog hearing aids outperformed all these premium-level products. However, when programmed for optimum audibility as indicated by the real-ear verified NAL-NL1 target, five of the six premium-level hearing aids provided significantly better QuickSIN scores. As can be seen in Figure 2, the mean bilaterally aided QuickSIN scores improved by as much as 10 dB (HA-3) when the products were programmed correctly. This clearly shows that simply providing audibility provides an SNR improvement that can be larger than that obtained with sophisticated directional processing, and we know that without audibility, the benefits of directional technology will be reduced.

Figure 2. Mean QuickSIN findings for older analog instrument, premier hearing aids fitted to manufacturer’s proprietary algorithms, and premier hearing aids fitted to the NAL-NL1 prescriptive method.

9. That study was a few years back. Do you think that the manufacturers’ proprietary fittings have gotten better?

The evidence suggests these proprietary fittings are no better today than they were in 2012 when we published our original article. If you look at more recent studies (Leavitt, Bentler and Flexer, 2017; Valente et al, 2018; Folkeard, Bagatto and Scollie, 2020; Mueller, 2020), the same under-fitting problems noted in our previous publication are still apparent.

10. Are there other audibility issues that I need to be aware of?

Yes, there are. Here is one to consider. When patients are fitted with hearing aids, and probe-mic verification is conducted, audibility is optimized using a speech signal that is presented from a meter in front of the patient. By contrast, in the real world this signal will be influenced by distance, reverberation, other people or objects in the sound path, and background noise. In short, this speech signal may be a very different signal when it strikes the microphones of the hearing aids. Measurement of these variables inspired the development of the Speech Transmission Index back in the 1970s (STI; Houtgast & Steeneken, 1971). Because the STI is computationally intensive, simplified equipment has been used for this calculation, termed the Rapid/Room Speech Transmission Index (RASTI). This procedure uses an amplitude and frequency modulated (speech-like) sound sent from a transmitter to a receiver unit that measures what percentage of the modulated sound from the transmitter arrives intact at the receiver unit, which can be placed at numerous places around the listening area. The percent degradation of the speech-like signal reported by this technology relates directly to the problems faced by people with hearing aids and cochlear implants.
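For readers curious about the arithmetic behind a transmission index, here is a minimal sketch. It assumes the classic relation in which each modulation transfer value m (the fraction of the original modulation depth preserved at the receiver, from 0 to 1) is converted to an apparent signal-to-noise ratio, clipped to ±15 dB, and rescaled to a 0 to 1 index. The function names are hypothetical, the unweighted average is a simplification (the full method weights octave bands), and this is not a description of the specific RASTI equipment used in the classroom measurements.

```python
import math

def transmission_index(m):
    """Convert one modulation transfer value m (0..1) to a transmission index (0..1)."""
    # Keep m away from exactly 0 or 1 to avoid log(0) and division by zero
    m = min(max(m, 1e-6), 1 - 1e-6)
    snr_db = 10 * math.log10(m / (1 - m))   # apparent signal-to-noise ratio in dB
    snr_db = min(max(snr_db, -15.0), 15.0)  # clip to the +/-15 dB range
    return (snr_db + 15.0) / 30.0           # rescale to 0..1

def simple_sti(m_values):
    """Unweighted mean of the transmission indices.

    Simplification for illustration: the full STI method applies
    octave-band weights rather than a plain average.
    """
    return sum(transmission_index(m) for m in m_values) / len(m_values)

# Hypothetical modulation transfer values at a seat far from the talker
far_seat = [0.62, 0.55, 0.48, 0.41]
print(f"approximate index: {simple_sti(far_seat):.2f}")
```

In this relation, a modulation transfer value of 0.5 corresponds to an apparent SNR of 0 dB and therefore an index of 0.5, the midpoint of the scale.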

To assess the impact that a room environment has on speech signal audibility/integrity, a classroom of Western Oregon University college students was assembled with one empty desk in the room. The RASTI transmitter was set at the front center of the room at the professor’s lectern. The RASTI receiver unit was then moved to every seat in the classroom by having the student in the test seat move to the one empty seat in the classroom. As we suspected, even in this relatively small classroom, the only place that we found 100% accurate transmission between transmitter and receiver was when the transmitter and receiver were within 6 inches of each other. This means that at greater conversational distances there are critical parts of the speech signal missing when the speech enters the hearing aids’ microphones.

11. What did you discover about other seating locations in this quiet university classroom?

By the front-row center of this classroom, this speech-like signal had degraded to 83% of the original transmission. By the time the signal reached the back row in this small classroom, over half of the frequency/amplitude modulated signal had degraded. In other words, unless hearing aid users are willing to talk within 6 inches of each other to communicate, important elements of the speech signal might be lost. The greatest extent of this loss of audibility occurs for the very soft high-frequency sounds, so critical to speech perception in both quiet and noisy environments (Stelmachowicz et al, 2004). Going back to what I mentioned earlier, the point here is that even when we have a perfect target fitting, showing output that optimizes audibility, we may not have sufficient audibility when the real-world effects of distance, reverberation and background noise are considered.

12. But we do have remote microphones that work well with hearing aids. Don’t they solve the issue that you are raising?

In theory, the answer is a definite yes, and there are decades of research that support this contention (see Wagener et al, 2018 for review). However, much as my optimism about the use of real-ear aided measures across our profession proved misplaced, the non-use of remote microphones also has surprised me.

In our clinic we have several employees who are severely hard of hearing and life-long hearing aid users. The data logging of their instruments shows that they rarely use their remote microphones, regardless of background noise levels. Specifically, data logging for all of these sophisticated hearing aid users shows that they use their remote microphones less than 5% of the time.

13. How do these hearing aid users explain non-use of remote microphones in difficult listening environments?

One recurring answer is that they often do not need their remote microphone, and this explanation has some face validity since I have seen them in a variety of noisy settings functioning surprisingly well.

I think there is more to communicating, however, than just being able to minimally comprehend the message. For example, for these colleagues, it seems to take immense energy to achieve this speech perception in noise, and they readily admit to listener fatigue after a day of listening in such environments.

Unfortunately, not only is non-use of these microphones pervasive amongst these colleagues, but our data logging suggests it is the norm for most of our patients, who also seem to have around a 5% use rate. It is certainly possible that many of these patients are simply not in situations where the remote mic is needed, but my general feeling is that this technology is underutilized by patients, and probably not recommended as often as necessary by hearing care professionals.

14. What have you been thinking about lately regarding audibility?

As you may have noticed, there has been considerable interest in recent years regarding audibility and the central auditory system. A review article by Ratnanather (2020) details the central anatomical/physiological problems associated with poor audibility. Other recent review articles also have pointed out what we have known for a long time: that we need to go well beyond the pure-tone audiogram, prescriptive methods, real-ear aided measures and examining input signal degradation. Contemporary audiology must reach beyond the cochlea.

15. How does this notion relate to hearing aid amplification?

While there have been many articles on this topic in past years, I believe Glick and Sharma (2017; 2020) and Sharma (2021) recently have very effectively focused our attention on the importance of optimizing audibility for people with hearing loss, and the ramifications of failing to provide amplification in a timely manner. They have also offered hope that the brain resource reallocation they report can be reset by appropriate intervention with well-fitted hearing aids. These authors also note that appropriate amplification intervention may result in a host of cognitive benefits (Glick and Sharma, 2020).

16. I don’t have the equipment to do the sophisticated testing of Sharma and colleagues. Are there behavioral tests that I could conduct to look at this association?

There are. In both publications, Glick and Sharma showed that those who obtained normal bilaterally aided scores on the QuickSIN were spared the unfavorable brain resource reallocation observed in those with untreated or poorly treated hearing loss. This finding prompted us to examine our clinic’s medical database, where we have over 5000 electronic patient records (Leavitt, Flexer & Clark, 2020).

17. Sounds interesting. What did you find?

We routinely enter the unaided and aided speech intelligibility index (SII) values of all patients who use hearing aids and/or cochlear implants. We also report the unaided and aided QuickSIN scores for all patients who use any form of amplification. In this way, it is easy to electronically locate those who have scored normally on the bilaterally aided QuickSIN. After seeing the 2017 article of Glick and Sharma, we began searching for those patients who achieved these normal bilaterally aided scores. We were able to identify 63 such patients who had been seen over a two-year period. Unfortunately, we had 831 patients in our database with equivalent unaided and aided SIIs who could not achieve normal bilaterally aided QuickSIN scores. These 831 patients also had real-ear verified matches to target, yet their bilaterally aided QuickSIN scores were not normal. So simply achieving an NAL-NL2 target cannot guarantee normal bilaterally aided scores on the QuickSIN, with the resultant potential brain reset.

18. Were there patient factors that correlated with the poor QuickSIN scores?

We found that patient age, patient income, patient level of education, hearing aid age, level of technology and hearing aid features (beyond directional microphones) did not seem to explain who had normal vs. abnormal bilaterally aided QuickSIN scores. In fact, the only consistent findings in this study were that all patients had good to excellent unaided word recognition in the better ear as measured by the recorded version of the Maryland CNCs, had unaided SIIs of 0.5 or better in the better ear, and had hearing aids programmed at or near an NAL-NL2 prescriptive target for 50-, 60- and 75-dB SPL speech inputs. However, these characteristics also apply to the other 831 patients who did not score normally on the bilaterally aided QuickSIN.

In conclusion, I would say that while amplified audibility is an essential foundation of optimized speech perception in noise, there are other contributory variables that will be identified as research in this area progresses.

19. What other variables?

For example, I believe we will see audiologists performing more revealing imaging studies using a relatively new procedure known as functional near infrared spectroscopy or fNIRS. As noted previously, Glick and Sharma (2017, 2020) have related normal bilaterally aided scores on the QuickSIN to appropriate brain resource reallocation. Further, reports in the literature suggest that to maximize QuickSIN scores it is necessary to fill in the gaps of audibility (Leavitt and Flexer, 2012). Following the normal bilaterally aided QuickSIN/real-ear verified audibility logic, it could be argued that any hearing aid user who can score normally on the QuickSIN would have maximum activity in the auditory area of the brain when performing an auditory task.

Such indirect diagnostic inferences are common in our profession. For example, air-bone gaps suggest some type of conductive pathology. Absent acoustic reflexes with normal hearing suggest some type of retro-cochlear pathology. These two tests do not, however, yield diagnostic precision equivalent to that obtained with more direct measurements such as multi-frequency immittance, CT scans or MRIs. The point is that a more direct measurement of the phenomenon of interest increases our confidence in the diagnosis.

I see the fNIRS much like acoustic immittance. Both tests take us closer to what we really want to know than the inferential air-bone gaps or bilaterally aided QuickSIN scores. The fNIRS is a more direct measurement of the phenomenon of interest, namely, restoration of proper brain resource allocation. Additionally, fNIRS is usable with cochlear implant users, as there is no magnetic activity associated with these scans, and it does not require an immobile patient who may be allergic to contrast dye or claustrophobic. It also forgoes the gooey head typical of the EEG. There are several reports in the literature where researchers are applying fNIRS to better understand speech perception and audibility.

20. What is your final message regarding your search for audibility?

Optimized audibility is the foundation of what we know about improved outcomes for individuals with hearing loss, whether discussing children or adults. I think we can do better. Even when we do achieve such real-ear verified aided audibility, we cannot be sure we have solved the unfavorable brain resource reallocation described by Glick and Sharma (2017; 2020). Beck et al (2018) also refute the idea that audibility is the only determinant of good speech perception in noise, pointing out that in this country some 26 million people with normal hearing also note difficulty understanding speech in noise. As audiologists we must then use the tools available to us to go beyond any speech-in-noise test to determine why those relatively few patients who have real-ear verified audibility cannot achieve these scores. We have auditory brainstem response audiometry to help identify dysfunction in this area. We also have many tests that point to auditory processing problems. What we lack is a clinically feasible, direct measure of the brain’s activity in response to our rehabilitative interventions. Hopefully, more pervasive use of fNIRS (and other imaging techniques), combined with data relating such imaging studies to behavioral outcomes, will illuminate the mystery of what is needed beyond audibility to optimize speech perception in noise for people with hearing loss.

References

Abrams, H. B., Chisolm, T. H., McManus, M., & McArdle, R. (2012). Initial-fit approach versus verified prescription: Comparing self-perceived hearing aid benefit. Journal of the American Academy of Audiology, 23(10), 768–778.

Amlani, A. M., Pumford, J., & Gessling, E. (2017). Real-ear measurement and its impact on aided audibility and patient loyalty. Hearing Review, 24(10), 12-21.

Beck D. L., Danhauer J. L., Abrams H. B., Atcherson S. R., Brown D.K., Chasin M., Clark J.G., De Placido C., Edwards B., Fabry D.A., Flexer C., Fligor B., Frazer G., Galster J. A., Gifford L., Johnson C. E., Madell J., Moore D. R., Roeser R. J., Saunders G. H., Searchfield G. D., Spankovich C., Valente M., & Wolfe J. (2018). Audiologic considerations for people with normal hearing sensitivity yet hearing difficulty and/or speech-in-noise problems. Hearing Review, 25(10), 28-38.

Folkeard, P., Bagatto, M., & Scollie, S. (2020). Evaluation of hearing aid manufacturers' software-derived fittings to DSL v5.0 pediatric targets. Journal of the American Academy of Audiology, 31(5), 354–362.

Glick, H., & Sharma, A. (2017). Cross-modal plasticity in developmental and age-related hearing loss: Clinical implications. Hearing Research, 343, 191–201.

Glick, H. A., & Sharma, A. (2020). Cortical neuroplasticity and cognitive function in early-stage, mild-moderate hearing loss: Evidence of neurocognitive benefit from hearing aid use. Frontiers in Neuroscience, 14, 93.

Houtgast, T., & Steeneken, H. J. M. (1971). Evaluation of speech transmission channels by using artificial signals. Acustica, 25(6), 355-367.

Killion M. C., & Mueller H. G. (2010). Twenty years later: A new count-the-dots method. Hearing Journal, 63(1), 10-17.

Leavitt R., Bentler R., & Flexer C. (2017). Hearing aid programming practices in Oregon: Fitting errors and real ear measurements. Hearing Review, 24(6), 30-33.

Leavitt R.J., Flexer C., & Clark N. (2020). Variables associated with attainment of normal scores on the bilaterally aided QuickSIN test. Hearing Review, 27(9), 18-21.

McCreery, R. W., Bentler, R. A., & Roush, P. A. (2013). Characteristics of hearing aid fittings in infants and young children. Ear and Hearing, 34(6), 701–710.

Mueller H.G. (2020). Perspective: Real ear verification of hearing aid gain and output. GMS Z Audiol (Audiol Acoust), 2:Doc05. DOI: 10.3205/zaud000009, URN: urn:nbn:de:0183-zaud0000096

Mueller, H.G. (2015, May). 20Q: Today's use of validated prescriptive methods for fitting hearing aids - what would Denis say? AudiologyOnline, Article 14101. Retrieved from https://www.audiologyonline.com

Mueller H.G. & Picou E.M. (2010). Survey examines popularity of real-ear probe-microphone measures. Hearing Journal, 63(5), 27-32.

Pascoe D. P. (1975). Frequency responses of hearing aids and their effects on the speech perception of hearing-impaired subjects. The Annals of Otology, Rhinology, and Laryngology, 84(5 pt 2 Suppl 23), 1–40.

Quar, T. K., Umat, C., & Chew, Y. Y. (2019). The effects of manufacturer's prefit and real-ear fitting on the predicted speech perception of children with severe to profound hearing loss. Journal of the American Academy of Audiology, 30(5), 346–356.

Ratnanather J. T. (2020). Structural neuroimaging of the altered brain stemming from pediatric and adolescent hearing loss-Scientific and clinical challenges. Wiley interdisciplinary reviews. Systems biology and medicine, 12(2), e1469.

Sanders J., Stoody T., Weber J., & Mueller H.G. (2015). Manufacturers’ NAL-NL2 fittings fail real-ear verification. Hearing Review, 21(3), 24.

Sharma A. (2021). 20 Q: Harnessing neuroplasticity in hearing loss for clinical decision making. AudiologyOnline, Article 27826. Available at www.audiologyonline.com

Stelmachowicz, P. G., Pittman, A. L., Hoover, B. M., Lewis, D. E., & Moeller, M. P. (2004). The importance of high-frequency audibility in the speech and language development of children with hearing loss. Archives of Otolaryngology--Head & Neck Surgery, 130(5), 556–562.

Valente, M., Oeding, K., Brockmeyer, A., Smith, S., & Kallogjeri, D. (2018). Differences in word and phoneme recognition in quiet, sentence recognition in noise, and subjective outcomes between manufacturer first-fit and hearing aids programmed to NAL-NL2 using real-ear measures. Journal of the American Academy of Audiology, 29(8), 706–721.

Wagener, K. C., Vormann, M., Latzel, M., & Mülder, H. E. (2018). Effect of hearing aid directionality and remote microphone on speech intelligibility in complex listening situations. Trends in Hearing, 22, 2331216518804945. https://doi.org/10.1177/233121651880494

Citation

Leavitt, R. (2021). 20Q: In pursuit of audibility for people with hearing loss. AudiologyOnline, Article 27848. Available at www.audiologyonline.com