Learning Outcomes

After this course, learners will be able to:

- Describe the 4 audiological claims supporting Phonak Marvel.

- Describe the patient benefits of each feature in Phonak Marvel.

- Describe the audiological improvements in Phonak Marvel.

Introduction

Thank you for joining us for this presentation on Phonak Marvel. Today we are going to look at the research supporting our newest product line, Audeo Marvel, and discuss the positive experiences our patients are having with these devices.

First, we're going to look at what makes Marvel different and special, not just in comparison to other manufacturers, but also why it's special for us. A lot of research and development has gone into the formulation of Marvel. Today, we will explore the audiology and research behind Marvel and the rationale behind the decisions that we've made as we move into the future.

The Four Pillars of Marvel

There are four main technology areas, or pillars, that set Marvel apart. These four pillars are:

- Clear rich sound

- Connectivity

- Rechargeability

- Smart apps

Based on market feedback, we have improved these four areas so that we're able to offer a seamless solution for you and your patients.

At Phonak, alongside cutting edge technology that delivers better hearing performance, ease of use is also an important factor. By listening to the needs of hearing care professionals and their clients, we placed a lot of importance on designing a portfolio of hearing aids that are innovative and easy to use. We have made the following updates:

- Improvements for rechargeable handling

- Better usability of the hearing aid push button

- Better handling of the hearing aid housing and battery doors

- Optimized performance in wind and noise

- Better client experience of the TV Connector and Remote app

These are just some of the things that were taken into consideration to make sure that clients can feel confident in handling their hearing aids after leaving the hearing care professional’s office.

Marvel Form Factors

Figure 1 shows our Marvel form factors. We're already shipping our Audeo M-312, as well as our Audeo M-R. In February of 2019, you will have access to T-Coil models, and the rechargeable T-Coil model will be available in the fall of 2019.

Figure 1. Marvel form factors.

It's important to note that Binaural VoiceStream Technology (BVST) is back and available on all technology levels from 50 and up. BVST allows our hearing aids to take a full audio bandwidth signal from one side and stream it to the other side, and it is used in programs like speech in loud noise, speech in 360, and speech in wind. In addition, all of our form factors and technology levels offer direct streaming to Android, Apple, and other Bluetooth devices at version 4.2 or above. Furthermore, we offer rechargeability at every level of technology.

Every model is also enabled with RogerDirect. In other words, there is a built-in Roger receiver if your patient wants to take advantage of Roger technology. One caveat is that they won't be able to activate the Roger receiver until fall of 2019, but the technology is in there. The 312T and the 13T are not out yet, but I wanted to highlight that those are the only two models that have a programming socket to connect to programming cables. All of the other models are fit wirelessly with Noahlink Wireless.

Audiology at the Heart

Audiology is at the heart of everything we do at Phonak. It's the starting point of all of our conversations, and that's because we've always had a goal to enable people to thrive socially and emotionally. We believe that better hearing leads to better communication and that communication plays a central role in every person's life. We have a saying at Phonak that Marvel is love at first sound. I'm going to describe to you why we have been hearing that mantra from patients ever since the launch of Marvel.

Phonak ensures clear, rich sound through all stages of the hearing journey: from the first time a patient is fit with Phonak Marvel, throughout everyday life, and in tough, real-life situations. The result of our technology is better sound quality and speech understanding, while also reducing the effort required to listen in noise.

With Marvel, we deliver on all fronts:

- Exceptional sound quality from the first fit

- Better speech understanding in noise

- Reduced listening effort in noise

- Top-rated streamed sound quality

Exceptional Sound Quality from the First Fit

Comfort and sound quality are two key attributes of a good first fit. In 2017, we wanted to test the first fit acceptance and everyday sound quality of our most current technology at that time, which was Belong. A study was conducted in Oldenburg, Germany with 20 first-time hearing aid wearers with mild to moderate hearing loss. We used Audeo B90-312 hearing aids, as well as a competitor's product.

The default settings of each manufacturer's hearing aids were used. Participants first rated sound quality during the first fit in a fitter's office, then rated it again in a shopping mall, and finally completed a two-week home trial. The feedback we received was that the Phonak products sounded too sharp and too shrill in the fitter's office, but in any other situation outside of the fitter's office, the Phonak devices were better received.

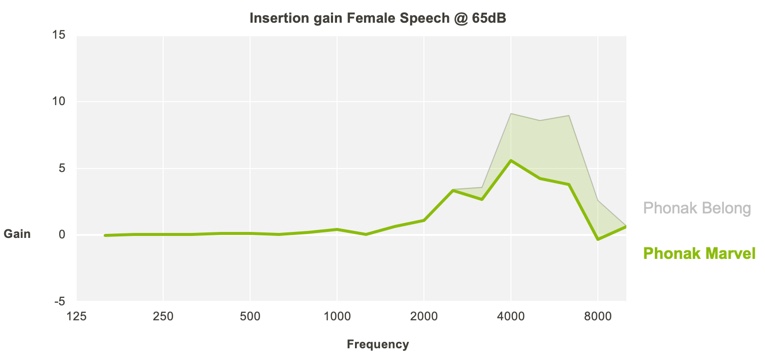

Using the information and insights gained from that study, we changed the fitting formula of Marvel, specifically for first-time users. Figure 2 shows the insertion gain for Marvel and Belong. With Marvel, the gain curve has been relaxed for frequencies above 3 kHz in order to reduce reported shrillness, while maintaining excellent speech understanding and audibility.

Figure 2. Insertion gains Marvel and Belong.

In April of 2018, a second study (also at University of Oldenburg) was carried out to examine the new Marvel pre-calculation in comparison to the original used with Belong. Evidence gathered from this study, along with other Oldenburg studies, provided insight into whether we're taking our technologies in the right direction for our patients. The intent of one such study was to look at first fit acceptance. We wanted to put Marvel devices to the test against Belong (the current product at that time). We wanted to see how Belong and Marvel hearing instruments performed in both the fitter's office, as well as in a more challenging listening environment: a shopping mall.

Our participants were 20 first-time hearing aid wearers with mild to moderate hearing loss. We wanted inexperienced users so that we could specifically assess that first fit experience. The hearing aids were all set at the default settings. We applied 80% of target gain for first-time users. We used the new pre-calculation with Marvel with those relaxed highs, versus the old pre-calculation with Belong.

In a fitter's office, we had the patients rate both devices in terms of loudness, shrillness, naturalness of own voice, and the fitter's voice. Then we took them to the shopping mall and had them rate again both the Belong and Marvel for loudness, shrillness, naturalness, listening effort and subjective speech understanding. After that, we performed speech intelligibility tests with both Belong and Marvel in quiet and in noise.

Study Results

With regard to loudness, the participants were more likely to rate the Marvel device as appropriate for both their own voice and the fitter's voice. According to recent MarkeTrak data, this is a key factor in first fit acceptance. Participants also rated Marvel devices as being less shrill than Belong for their own voice, as well as the fitter's voice. This translates into less work and fine tuning on your end, and a greater likelihood that the patient will have a positive experience from the moment that they put on the devices.

We also wanted to be sure that with Marvel there would be no compromise in terms of speech intelligibility. One concern was that if the hearing aid is not as shrill, the patient may not be getting enough high-frequency information which is needed for audibility and clarity. To address the issue of speech intelligibility, we conducted speech testing in both quiet and in noise and found no significant difference between Belong and Marvel. Marvel performed just as well in both situations. In fact, the patients were happier with Marvel's sound quality. In summary, this research shows that with Marvel, you can have that excellent first fit acceptance as well as the speech intelligibility that you need.

We also conduct testing of these devices in our own facility called Phonak Audiology Research Center (PARC). Some of the spontaneous exclamations from our subjects include comments like:

- My voice is clear. It's perfect. No echo, very nice. Not tinny at all.

- Very clear - sounds good.

- Sounds natural.

- It's a "10".

- I don't notice I'm wearing hearing aids.

Why are we getting comments like these? At Phonak, we know that many factors go into attaining exceptional sound quality, not only in quiet but also in noise. We also know that the ability for the hearing aid to transition seamlessly between listening environments is incredibly important.

Better Speech Understanding in Noise

We wanted to provide evidence that our hearing aid features are working in every environment. One of the tools that we use in complex listening environments (i.e., speech understanding in noise) is StereoZoom. We wanted to see how StereoZoom is working behind the scenes to provide real-life objective benefit for your patients.

StereoZoom

StereoZoom uses Binaural VoiceStream Technology (BVST). It's used in our Marvel devices in a number of different programs, one of them being Adaptive StereoZoom or what's also called Speech in Loud Noise (SPiLN). BVST, the StereoZoom algorithm and the directional microphone technology all work together to improve speech intelligibility, especially in challenging listening environments. We already have studies showing that StereoZoom is effective. We have 10 published studies showing the benefits of StereoZoom, many of which can be found on PhonakPro under our Evidence tab. We will discuss a couple of these studies in today's presentation.

Reduced Listening Effort in Noise: StereoZoom

One of the things we wanted to determine was the effect of StereoZoom on our patients' ability to listen with reduced effort. Our patients often have to struggle to hear in many situations. We know that hearing and processing sound requires not only the ears but also the brain. We wanted to see if the hearing aids would be able to reduce the amount of effort that the patient needs to hear and understand the speech signal. Listening effort is an important dimension in hearing aids because if we can reduce the amount of effort and time our patients spend decoding the signal, we can free up cognitive resources for other tasks.

One of the reasons that we wanted to research the impact of our technologies on listening effort is that our competitors have already been actively engaged in this research area using measurement techniques such as pupillometry to show the benefit of their products. We spent a great deal of time researching the best way to get an objective measurement using proven measurement techniques to research the benefit of StereoZoom.

To test StereoZoom, we recruited a total of 20 subjects (12 women and 8 men). The median age was 70.9 years. Participants were experienced hearing aid users with mild to moderate hearing loss. The participants had no neurological deficits and no cognitive impairments.

Each participant was fitted with two hearing aids: the Phonak Audeo B90-312 and a competitor's premium device.

There were three EEG listening study protocols:

- The Audeo B90-312 with StereoZoom

- The Audeo B90-312 with Real Ear Sound (the Control Condition; more of an omni set up than StereoZoom)

- The competitor device at default settings

In addition, there were two listening conditions: one with a good signal-to-noise ratio (SNR +7 dB) and one with a poorer signal-to-noise ratio (SNR +3 dB). The noise signal was a diffuse cafeteria noise at a constant level of 65 dB SPL played via loudspeakers with the listener positioned as shown in Figure 3.

Figure 3. Listener position during noise signal.
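As a quick worked example of the two listening conditions: if the noise stays fixed at 65 dB SPL and the SNR is set by adjusting the speech level (an assumption; the calibration procedure is not described here), the speech level in each condition follows from the definition SNR = speech level minus noise level, both in dB. The function name below is hypothetical and used only for illustration.

```python
def speech_level_db(noise_level_db: float, snr_db: float) -> float:
    """Speech level (dB SPL) implied by a noise level (dB SPL) and an SNR (dB)."""
    return noise_level_db + snr_db


NOISE_DB_SPL = 65.0  # constant cafeteria noise level stated in the text

# SNR values stated in the text, in dB
conditions = {"good": 7.0, "poorer": 3.0}

# Assumption: the SNR is changed by moving the speech level, not the noise level
speech_levels = {
    name: speech_level_db(NOISE_DB_SPL, snr) for name, snr in conditions.items()
}

for name, level in speech_levels.items():
    print(f"{name} SNR condition: speech presented at {level:.0f} dB SPL")
```

Under that assumption, the two conditions differ only by 4 dB of speech level, which is a small change in presentation level but a meaningful change in listening difficulty.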

In this study, the speech material used was taken from the OLSA sentence matrix test material. The participants heard two sentences and then they answered either which names, which numbers or which objects they heard. Based on the sentences, a word recall task was developed. The recall task had to be completed after every second sentence, and the answer was given via a touchscreen. For each of the five words per sentence, there were 10 possible answers to choose from.
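To put recall scores in context, it can help to know what pure guessing would yield. The short sketch below assumes uniform, independent guessing among the 10 options per word, and assumes that two words are queried per trial (one from each of the two sentences). Neither assumption is stated in the study description, so treat the numbers as an illustration only.

```python
from fractions import Fraction

OPTIONS_PER_WORD = 10          # stated in the text
QUERIED_WORDS_PER_TRIAL = 2    # assumption: one queried word per sentence, two sentences

# Probability of guessing a single word correctly when all options are equally likely
chance_per_word = Fraction(1, OPTIONS_PER_WORD)

# Probability of guessing every queried word in a trial, assuming independent guesses
chance_all_correct = chance_per_word ** QUERIED_WORDS_PER_TRIAL

print(f"Chance of guessing one word: {float(chance_per_word):.0%}")
print(f"Chance of guessing all queried words in a trial: {float(chance_all_correct):.0%}")
```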

To fully understand the impact StereoZoom had on the listening effort, we examined performance against the control condition (Real Ear Sound) and a top competitor in the 65 dB noise environment (cafeteria). We looked at three things:

- Response accuracy on a word recall task under different noise conditions

- Brain EEG activity recorded during a word recall task under different noise conditions

- Subjective impressions after word recall task on dimensions of listening effort, noise annoyance, and memory effort

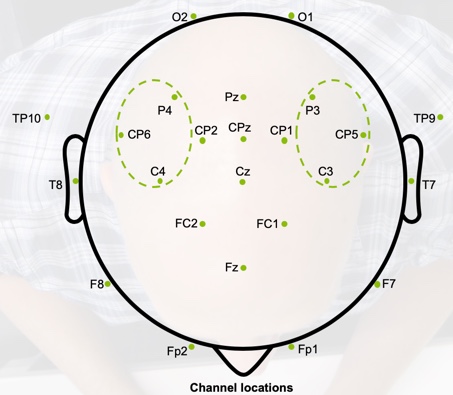

The brain activity was recorded from a custom-made elastic EEG cap worn while the participants were listening to the sentences. Figure 4 shows a 2D representation of the EEG cap. The electrodes of interest are circled in green, and they're positioned over the left and right temporoparietal cortex. The focus was placed on those particular electrodes because that is where we analyzed activity in the alpha band, which is known to play a role in memory and attention.

Figure 4. 2D representation of EEG cap worn during testing.

Subjective responses were collected using a 13-point scale (from effortless to extremely exhausting). Participants were asked to rate their:

- Memory effort: How effortful was it to memorize the words?

- Annoyance: How annoying did you perceive the background noise?

- Listening effort: How effortful was it to understand the speech?

Study Results

The performance measures used in this study were:

- Noise annoyance

- Response accuracy

- EEG data

- Listening effort

- Memory effort

Noise annoyance. Subjects rated noise annoyance significantly lower when using StereoZoom versus a more omnidirectional configuration with Real Ear Sound. Subjects rated noise annoyance with StereoZoom lower than when listening to the competitor across both poor and good listening situations.

Response accuracy. When listening with StereoZoom versus Real Ear Sound (the control condition), the response accuracy was 5.8% better with StereoZoom in the challenging listening condition. This indicates that StereoZoom provides an objective benefit in background noise. We did not see a significant difference in response accuracy between StereoZoom and the competitor's devices. However, what's important to note is how much listening effort it required for participants to reach the same response accuracy with different devices. We're going to address that shortly.

EEG data. Brain activity with StereoZoom was lower than with the control condition across both poor and good listening conditions. In other words, subjects used less brain activity when listening with StereoZoom. Less activity in the alpha band means less listening effort, which translates to more resources that can be dedicated to participating in the conversation. Brain activity with StereoZoom was also significantly lower than with the competitor's device across both poor and good listening conditions.

Listening effort. Listening effort with StereoZoom, as you would expect, was lower than with the control condition across both poor and good listening conditions. Again, listening effort with StereoZoom was lower than the competitor across both conditions as well.

Memory effort. Memory effort with StereoZoom was lower than the control condition. In other words, it's easier for the subject to hold that signal in memory when using StereoZoom. Again, as compared to the competitor, memory effort was lower with StereoZoom across both poor and good listening conditions.

In summary, although response accuracy was no different between StereoZoom versus the competitor, the effort that it took to get there was much different when using StereoZoom. Against the competitor, memory effort was rated subjectively less, listening effort was less, noise annoyance was less, and there was less EEG activity, meaning that the brain did not have to work as hard to understand that signal. The subjects reported it to be easier to listen and remember words, and that shows the opportunity that StereoZoom has beyond speech intelligibility. When your patients don't have to work so hard to hear the signal from the hearing aids, there are potentially more cognitive resources available for interaction and engagement, and hopefully, that patient is less tired at the end of the day.

Behavioral Changes with StereoZoom

StereoZoom has subjective and objective benefit. However, we wanted to find out if using StereoZoom leads to behavioral changes in real life, specifically in social settings. In order to determine this, we conducted another study intended to evaluate the benefit of the StereoZoom feature in Audeo hearing aids in a speech in loud noise situation.

Overall, the study was divided into three phases:

- Phase 1: In the first phase, the goal was to estimate the listening effort contrast of StereoZoom to other directional settings (UltraZoom, Fixed Directional, and Real Ear Sound).

- Phase 2: For Phase 2, a reasonable contrast setting (fixed directional) was then used alongside Video Analysis and head tracker information to test whether StereoZoom positively changes the communication behavior of hearing aid users in a realistic loud environment.

- Phase 3: During the third phase, we looked at the subjective ratings of StereoZoom versus a directional setting in noisy environments.

Phase 1

There were 24 subjects that participated in phase one (12 male and 12 female). The mean age was 74.3, and all subjects were experienced hearing aid users. For this study, Audeo B90-312 hearing aids were used, and slim tips were used to ensure good acoustic coupling.

We used our proprietary fitting algorithm (Adaptive Phonak Digital) at a 100% target (i.e., an experienced user setting). We turned SoundRecover off, and we turned adaptive features off. We used four different programs:

- P1: Real Ear Sound (RES)

- P2: Fixed Directional

- P3: UltraZoom (UZ)

- P4: StereoZoom (SZ)

With these four programs, we went from more of an omnidirectional mode with Real Ear Sound, all the way to the most directional with StereoZoom.

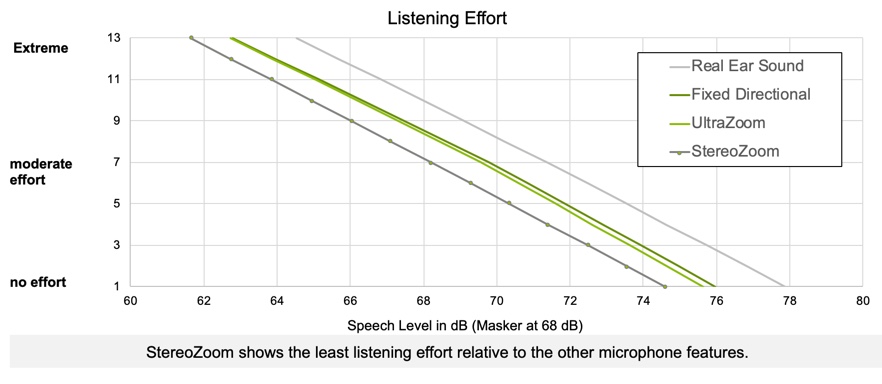

Subjects performed listening effort tests in a virtual supermarket scenario. They used an adaptive scaling method for this subjective listening effort. The supermarket noise signal was presented at a constant level of 68 dB SPL, and the signal to noise ratio was varied by changing the speech level. There was a training run, and then each subject performed one complete run for each hearing aid condition, for a total of four runs overall.

Figure 5 shows the results of the listening effort ratings using the ACALES procedure across 24 subjects. StereoZoom shows the least listening effort relative to the other microphone features. Overall, we observed a 22% reduced listening effort with StereoZoom versus fixed directional.

Figure 5. Listening effort with StereoZoom vs. other microphone features.

Phase 2

Subjectively, participants are perceiving less listening effort with StereoZoom. But does that matter? We wanted to determine whether it makes enough of a difference to lead to a change in behavior.

As a result of the screening measures, we decided to compare StereoZoom to the Fixed Directional setting in the main study. The SNR region of +3 dB to +7 dB is especially important, as it corresponds to normal real-life conversation in noisy situations.

In Phase 2 of the study, we invited 10 subjects who showed a good benefit in listening effort to participate in a group discussion. Their communication practices and behaviors were documented and analyzed using video recording. With small caps on the head of each participant, sound recordings were collected, and participants' head rotation was tracked.

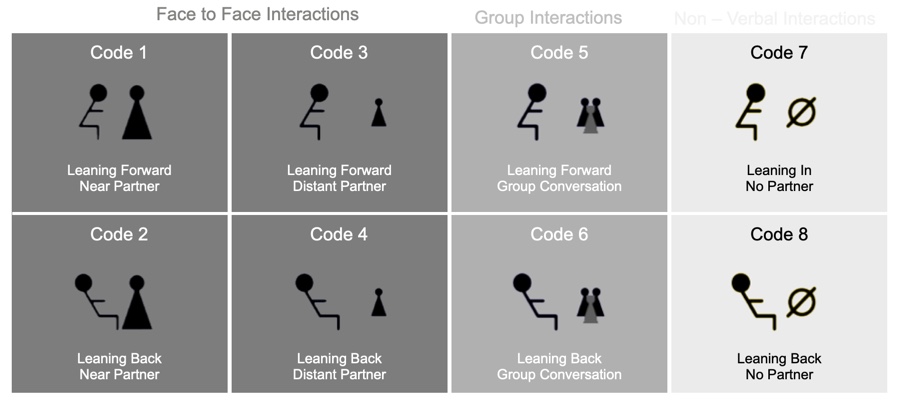

The video recordings of the group discussion were evaluated using a fixed annotation scheme. This took place offline. The rater had the possibility to pause and rewind the video. This increases the accuracy of the evaluation. For the annotation, 8 codes were used to document body language changes (Figure 6):

- Code 1: F-t-F DP_N PR_N = Face to Face interaction with the near direct communication partner and forward leaning body position

- Code 2: F-t-F DP_N PR_D = Face to Face interaction with the near direct communication partner and backward leaning body position

- Code 3: F-t-F DP_D PR_N = Face to Face interaction with the distant direct communication partner and forward leaning body position

- Code 4: F-t-F DP_D PR_D = Face to Face interaction with the distant direct communication partner and backward leaning body position

- Code 5: Group PR_N = Group interaction with forward leaning body position

- Code 6: Group PR_D = Group interaction with backward leaning body position

- Code 7: Nonverbal PR_N = Non-speech interaction with forward leaning body position

- Code 8: Nonverbal PR_D = Non-speech interaction with backward leaning body position

Figure 6. Codes to document body language.

When observing the actual participants during their discussion, the raters were looking to see which directions the subjects were leaning when speaking to direct partners, during group conversation, or during non-verbal interactions.

We tested everyone with Fixed Directional, and then we tested everyone with StereoZoom. There were two different groups. We wanted to see what the differences were between those two strategies. A total of 645 interactions were annotated, which were divided into 345 interactions in session one and 290 in session two. In the end, results showed that participants communicated more with StereoZoom. In fact, with StereoZoom, subjects were 15% more interactive, which is a statistically significant difference.

Phase 3

In phase three, 15 subjects rated both their overall preference and loudness preference for StereoZoom versus Fixed Directional based on the listening environment in the room where group discussions were conducted. With fixed directional, the loudness was higher compared to StereoZoom. In other words, StereoZoom was beneficial in reducing a certain amount of noise for the subjects. Their loudness ratings with StereoZoom came closer to an acceptable rating as compared to fixed directional.

In summary, when patients used StereoZoom, their listening effort was reduced by 22% as compared to Fixed Directional. This led to a 15% increase in participation in a group discussion within a noisy environment. Furthermore, people were observed as being more relaxed in that conversation, as evidenced by their increased tendency to lean back. In addition, they spoke more often in the group discussion environment, which suggests that they felt more engaged. This is evidence that StereoZoom is making our patients' lives easier, allowing them to communicate and participate in the world around them in a more seamless manner.

Top-Rated Streamed Sound Quality

Lastly, we wanted to take a look at sound quality in terms of our streamed signal. With Marvel, it's all about connectivity. How does our streamed signal compare with our competitor's streamed signals? Watching TV is a regular activity enjoyed by hearing aid wearers, and TV watching is rated as one of the top four situations where it is important for hearing aid wearers to hear well (MarkeTrak 9, 2015). On average, our patients are watching 3.5 hours of TV a day. We want to make it a good experience for them.

Our AutoSense OS™ 3.0 program (the blending program in all of our hearing instruments) works on acoustic input signals. There are some programs within AutoSense OS™ 3.0 that are blended: speech in noise, calm, comfort in noise and comfort in echo. Then there are some programs within AutoSense OS™ 3.0 that stand alone and are dedicated to particular environments: speech in loud noise, speech in a car, and music. Patients love the sound quality of this. As hearing health care providers, you are better able to fine-tune a patient's listening experience. If they are having difficulty in the car, you can go in and target that car program.

Now, we are taking what we have done with AutoSense OS™ 3.0 and translating it to our streaming program. We're not only able to classify acoustic input signals, but we're also able to classify our streamed signals into streamed speech and streamed music. Is the signal that they're listening to speech-dominant, or is it music-dominant? If they told you they are having an issue whenever they listen to their favorite song on Spotify, and it sounds too trebly or there's too much bass, you can go in and adjust that particular setting for that patient. We're giving you some more control.

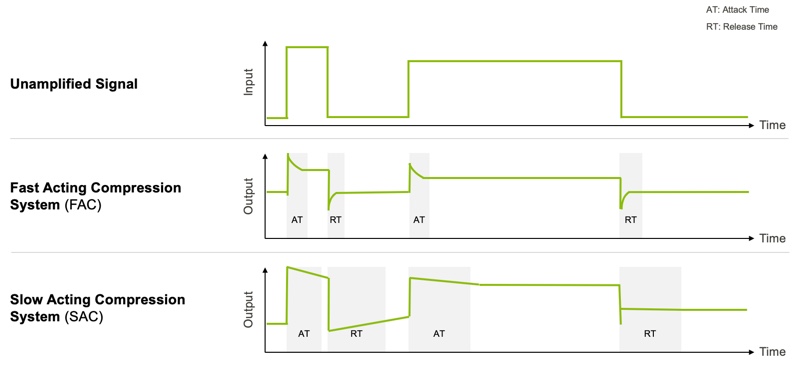

Adaptive Compression Speed

We're also using adaptive compression speeds in our streamed program, as well as our acoustic AutoSense OS™ 3.0 program. This is something that we've done since the launch of Naida B and Sky B. We launched something called Adaptive Phonak Digital Contrast, which is another fitting algorithm that you can take advantage of in global tuning. At that time, we were advocating for its use in people with severe to profound hearing loss, but we went back and looked at the research and there is a benefit even for people with mild amounts of hearing loss with specific inputs.

Behind the scenes with Marvel, we have activated an adaptive compression speed for any signal coming into the hearing aid in the adaptive programs (speech in noise, speech in loud noise). Anytime the patient needs more temporal and spectral cues, we've activated a slower-acting compression.

As shown in Figure 7, when you're looking at the difference between signals (unamplified, fast acting compression, and slow acting compression), with slow-acting compression, we're preserving some of those natural qualities of the signal as opposed to reducing some of those natural peaks and valleys. We're preserving those so that the patient can have better access to the contrast between those two sounds. We are doing this not only in AutoSense OS™ 3.0 at this time but also in our streamed programs.

Figure 7. Compression speed based on listening environments.
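The difference between fast- and slow-acting compression can be illustrated numerically. The sketch below is a toy model, not Phonak's algorithm: the threshold, ratio, time constants, and function names are all illustrative assumptions, and the level detector is simplified to a single smoothing time constant applied in the dB domain. It feeds a signal whose level alternates between peaks and valleys (20 dB apart) through the same compressor with a fast and a slow detector, then measures how much of that contrast survives.

```python
import math


def compress(levels_db, dt_s, time_constant_s, threshold_db=45.0, ratio=3.0):
    """Toy feed-forward compressor acting on a sequence of input levels in dB.

    Assumption: one smoothing time constant is used for both attack and
    release, and smoothing is done on dB values (a simplification).
    """
    alpha = math.exp(-dt_s / time_constant_s)
    tracked_db = levels_db[0]
    output_db = []
    for level_db in levels_db:
        # One-pole smoother: how quickly the detector follows the input level
        tracked_db = alpha * tracked_db + (1.0 - alpha) * level_db
        # Gain reduction applies only to the part of the tracked level above threshold
        over_db = max(tracked_db - threshold_db, 0.0)
        gain_db = -over_db * (1.0 - 1.0 / ratio)
        output_db.append(level_db + gain_db)
    return output_db


def peak_valley_contrast_db(input_db, output_db, peak_db, valley_db):
    """Mean output level during input peaks minus mean output level during valleys."""
    peaks = [out for inp, out in zip(input_db, output_db) if inp == peak_db]
    valleys = [out for inp, out in zip(input_db, output_db) if inp == valley_db]
    return sum(peaks) / len(peaks) - sum(valleys) / len(valleys)


DT_S = 0.001                     # 1 ms time step
PEAK_DB, VALLEY_DB = 70.0, 50.0  # illustrative input levels, 20 dB apart
SEGMENT = 125                    # samples per peak or valley (125 ms each)

# 10 s of input alternating between peak and valley levels
signal_db = [PEAK_DB if (n // SEGMENT) % 2 == 0 else VALLEY_DB for n in range(10_000)]
settled = slice(8_000, None)     # analyze the last 2 s, after the detector settles

fast_out = compress(signal_db, DT_S, time_constant_s=0.005)  # illustrative fast setting
slow_out = compress(signal_db, DT_S, time_constant_s=2.0)    # illustrative slow setting

fast_contrast = peak_valley_contrast_db(
    signal_db[settled], fast_out[settled], PEAK_DB, VALLEY_DB
)
slow_contrast = peak_valley_contrast_db(
    signal_db[settled], slow_out[settled], PEAK_DB, VALLEY_DB
)

print(f"Input contrast:            {PEAK_DB - VALLEY_DB:.1f} dB")
print(f"Fast compression contrast: {fast_contrast:.1f} dB")
print(f"Slow compression contrast: {slow_contrast:.1f} dB")
```

With these illustrative settings, the fast detector follows each peak and valley, so its gain changes flatten much of the 20 dB input contrast. The slow detector holds the gain nearly constant over each syllable-length segment, which leaves the contrast close to the original 20 dB.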

With slow compression, we are able to preserve the difference in sound levels between the ears. In that way, we can maintain localization cues. We can improve speech intelligibility by preserving intensity and modulation differences within the speech, and we also preserve the temporal and spectral envelope of the speech signal. Taking that adaptive compression speed together with the classification of streamed signals, we wanted to compare the sound quality of the streamed signal with Marvel to the ideal streamed sound quality.

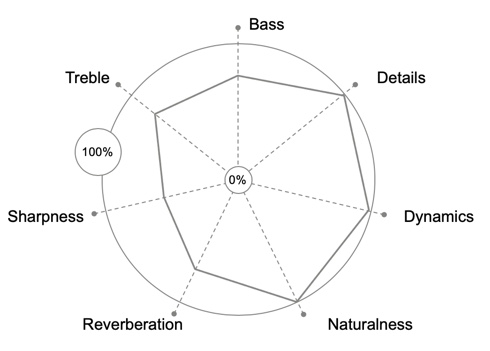

We completed a study in Denmark at a research facility called SenseLab. At SenseLab, they have trained listeners, both with hearing loss and without hearing loss, whose job it is to classify auditory signals and rate their quality. A group of hearing-impaired listeners was asked to come up with a benchmark for the ideal streamed signal and to determine how much of each of these attributes needed to be present when listening to that signal. Figure 8 shows the plot that they came up with.

Figure 8. The ideal profile.

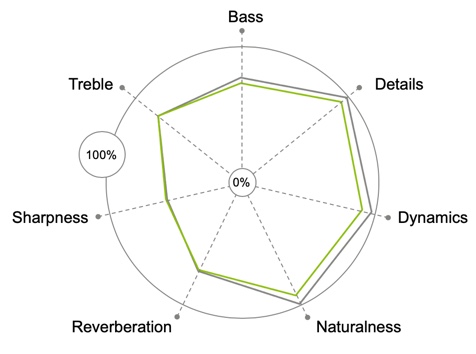

We wanted to see how Marvel compared to that ideal profile. Figure 9 shows how Marvel compared. You can see the green on top of the gray here. Marvel's profile was extremely close to what that ideal signal was. The ideal profile is characterized by balance, treble, and bass. You want a little bit of reverberation, you want some sharpness, and you want a high level of dynamics, details, and naturalness. And that's what you find with Marvel.

Figure 9. Phonak Audeo Marvel profile.

If you haven't already, I encourage you to listen to these devices for yourself and put them to the test. Listen to a podcast, listen to a movie clip, listen to whatever genre of music is your preference. You will hear that the sound quality is incredible. Not only that, but as a fitter, you have the ability to change the sound quality the way that that patient wants it to be. Once they're streaming something, it will automatically go into that program.

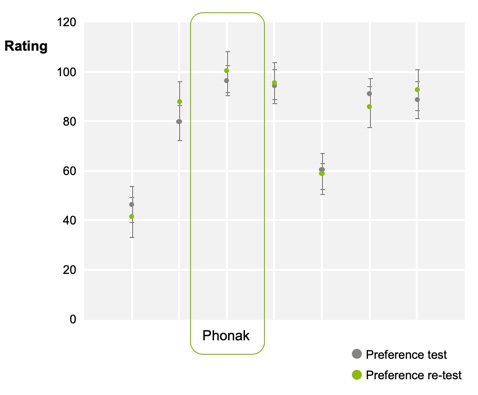

Not only did we want to see how the streaming sound quality compared to the ideal, but we also wanted to see how we stack up against our competitors. We put Phonak Marvel to the test against six other competitors (Figure 10). Phonak did come out on top.

Figure 10. Phonak streaming quality vs. competitors.

It is worth noting that music and streaming are subjective. Probably not 100% of your patients are going to rate Phonak as the top streamed sound quality, but on average we're seeing Phonak come out on top. The subjectivity of music and streaming is why we give you the flexibility to make those adjustments for your patients.

Summary and Conclusion

In conclusion, Marvel is rated among the most preferred devices by hearing aid users for streaming sound quality. Furthermore, Marvel has a sound profile that closely matches the "ideal" for streaming media. Additionally, AutoSense OS™ 3.0 results in high preference ratings for a variety of media, including speech, speech in noise (SPiN) and music. With Marvel, we hope your patients are experiencing love at first sound through all stages of the hearing journey. Phonak's goal is that your patients have not only the best first fit experiences with these products, but also that they are hearing the world around them with a clear, rich sound.

In summary, Marvel is packed with a number of world's first innovations.

- Standard Bluetooth Classic: Marvel can connect to anything that has that Bluetooth signal (4.2 and above), devices that are typically from 2007 and more current. This includes more than just smartphones. You can connect to iPads, laptops, smart TVs, anything that has that Bluetooth connection.

- Rechargeable Without Compromise: You also have rechargeability with connectivity at this point. And remember that it comes at every level: 30, 50, 70, and 90. So you really have a lot of options, even for your patients who need something at more of a value.

- RogerDirect: All of our devices have a built-in Roger receiver. Starting in the Fall of 2019, depending on your patient's needs, you can select the microphone that works best for your patient and connect to that Roger receiver. This will involve a firmware upgrade that will be available to them at that point. We will be conducting additional training once this rolls out. Keep in mind that any of the devices that you purchase now have that capability.

- Remote support integrated in Target: This feature will be available in February, and you will be offered more training at that time. Our remote support is face to face. You'll be able to see that patient, and they'll be able to see you. This is all done on your end through Target and on their end through the app. Any of the changes that you make through that app are going to be live. You can fine tune in real-time and ask them in real time if the changes are making a difference. If not, you can continue to fine tune from there.

- AutoSense OS™ 3.0: This is the most current version of AutoSense OS, now with classification of streamed signals into streamed speech and streamed music.

- Direct Streaming to iOS and Android: This works as long as the patient has a device that is enabled with Bluetooth 4.2 or above. If you have any questions about whether or not your patient's device is compatible with Marvel, at the time of that appointment I would encourage you to go to bluetooth.phonak.com and enter the name of the device they're planning on connecting to. That website will indicate whether the app and the phone are compatible.

- Real-Time Remote Support (access available in February).

- Hands-Free Phone Calls: At this point, we are the only manufacturer to offer truly hands-free phone calls. With Marvel, you do not have to hold that phone up to your mouth to speak into it. Your patient's voice is being picked up by the microphone of the hearing aid and then sent to the person on the other end of the phone call. There have been many improvements made to the sound quality of that patient's voice, ever since the release of Audeo B-Direct. If you have questions on that, please reach out to your Phonak clinical trainer and they'll be happy to walk you through that.

Citation

Floyd, E. (2019). Clear, rich sound: Understanding the research supporting Phonak Audeo Marvel. AudiologyOnline, Article 24426. Retrieved from https://www.audiologyonline.com