Today's topic is the latest developments in wireless CROS (contralateral routing of signal) and BiCROS (binaural contralateral routing of signal) solutions. Our program today will begin with information about binaural hearing and the central role of binaural hearing for understanding speech, especially in noisy and spatial listening environments.

Then I will speak about the effects of single-sided deafness (SSD) in situations that cause the most difficulties. I will explain the CROS/BiCROS concepts, and how we can utilize these systems to solve, at least in part, those problems related to SSD. I'll discuss which audiogram configurations make patients candidates for CROS/BiCROS solutions. From there I will switch gears over to the patient's perspective, discuss challenging listening situations that might be solved with a CROS/BiCROS hearing system, and alternatives to the classical hearing-aid based CROS solution. Finally, I will discuss the digital wireless solutions that have recently been introduced and the fitting aspects of those devices.

Binaural Hearing

Nature has given us most of the sensory organs twice. We have two ears, we have two eyes, and we even have two nostrils. This enables the brain to process two signals on each sense and opens up the spatial perception in vision, hearing, and even smell. Binaural hearing uses both ears. The brain will always have access to two similar, but slightly distinct, signals. By comparing these signals and finding out the differences and similarities, the brain can send all the right impulses for directional and spatial hearing.

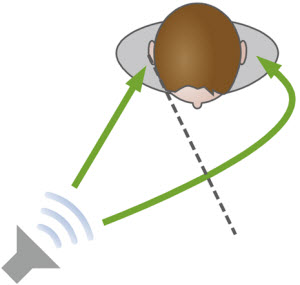

Let's consider a situation in which there is a sound source directed toward the right ear of a person (Figure 1). The sound, as you can see, is picked up by both ears, but the signal path is slightly different. The signal will arrive at the near ear earlier, since the signal path to the far ear is slightly longer, and therefore there is a time lag between the two ears. This causes an interaural (between the ears) time difference, which will be at most a few hundred microseconds. You can calculate the difference by dividing the extra distance the sound travels to the far ear by the speed of sound.

Figure 1. The pathway of sound and interaural lag associated with a sound presented towards one side of the head.

With the diameter of a typical head, the maximum difference that occurs if the sound is presented straight to one side is about 700 microseconds. The brain is amazingly able to analyze these small time differences, and we can hear changes in the direction of sound as small as about 10 microseconds.
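As a rough check on the 700-microsecond figure, the calculation can be sketched in a few lines of Python. This is only an illustration: the spherical-head approximation, the head radius of 8.75 cm, and the speed of sound of 343 m/s are assumptions that do not come from the talk, and `itd_microseconds` is a hypothetical helper name.

```python
import math

SPEED_OF_SOUND_M_S = 343.0   # assumed: air at roughly room temperature
HEAD_RADIUS_M = 0.0875       # assumed: a commonly quoted average adult head radius


def itd_microseconds(azimuth_deg, head_radius_m=HEAD_RADIUS_M,
                     speed_m_s=SPEED_OF_SOUND_M_S):
    """Interaural time difference for a distant source (spherical-head approximation).

    azimuth_deg: 0 = straight ahead, 90 = directly to one side.
    """
    theta = math.radians(azimuth_deg)
    # Extra path to the far ear: a straight segment (r * sin(theta))
    # plus the arc the sound travels around the head (r * theta).
    extra_path_m = head_radius_m * (theta + math.sin(theta))
    # Time difference = extra distance / speed of sound, converted to microseconds.
    return extra_path_m / speed_m_s * 1e6


for angle in (0, 30, 60, 90):
    print(f"{angle:>2} degrees: {itd_microseconds(angle):.0f} microseconds")
```

With these assumed values, a source directly to one side gives roughly 650 microseconds, which is in line with the "about 700 microseconds" quoted above.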

So on one hand, we have a slight interaural time difference, and then we have another effect where the head is shadowing the sound. Due to this head shadow effect, sound will be attenuated at the far ear, creating an interaural level difference. The maximum level difference, if we place the sound source at the side of the head, will be about 15 dB. That does not sound like very much, but in terms of signal-to-noise ratio, we know that gaining 15 dB is quite significant. Of course, blocking the sound and attenuating the sound is frequency dependent, because it concerns the diffraction of sound and the relation between the diameter of the head and the wavelength of the sound. Basically, the attenuation of sound due to the head will only occur at high frequencies. Consequently, the 15 dB level difference will mainly occur in the high frequencies, and will be much smaller at low frequencies where we have very long wavelengths of sound. If we measure the difference for broadband signals, like a speech signal, then we will have about 6.5 to 7 dB interaural level difference. Because the interaural level difference is frequency dependent, the sound spectrum will actually be changed, and the listener will experience a small difference in the perception of the sound.

In total we have three different interaural cues: time differences, level differences, and spectral differences. The brain will use all three of these to determine the position of a sound source. Binaural hearing and spatial perception are the foundation for the ability to analyze and resolve complex spatially-distributed listening situations. Imagine a party or a concert where you have several sound sources. When you locate and distinguish which sounds are coming from where, you can then select one source to listen to and concentrate on it. As you might imagine, this is the basis of following a conversation and understanding speech in noisy situations. Binaural hearing means that the brain is comparing two similar signals, therefore requiring two equally-working ears. The ears do not have to be perfect, but symmetrical function is important. So what happens if one ear is significantly poorer than the other?

Single-Sided Deafness

If one of the ears has a severe or a profound hearing loss or very poor speech intelligibility, we can say it is a deaf ear resulting in SSD. Details of the many audiologic and medical conditions that can lead to SSD are beyond the scope of today's discussion. However, in-depth readings from other experts in this area are available, some of which can be found on AudiologyOnline. The focus here will be to consider the effects of SSD.

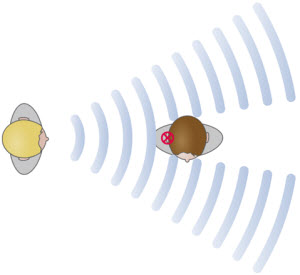

Suppose that a patient has SSD and is confronted with a speaker on the side of the deaf ear (Figure 2). The speaker will send out sound waves which will only be heard in the better ear. The sounds of the speaker will not, however, be picked up by the deaf ear and due to interaural level differences, the better ear will hear this sound attenuated by about 6.5 or 7 dB.

Figure 2. Effect of speech directed to a deaf ear in the case of single-sided deafness.

This means that speech alone will be softer, resulting in slightly poorer speech intelligibility. If the signal is the speaker's voice alone, simply turning up the volume of a hearing aid or asking the person to raise her voice by about 6.5 dB will compensate for the attenuation due to interaural level differences. What happens, however, if we add a noise source to the situation or, even worse, if we add the noise source to the side of the better ear (Figure 3)?

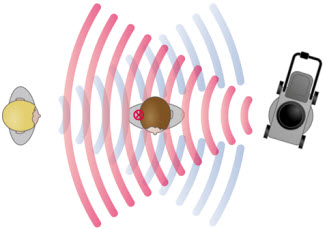

Figure 3. Single-sided deafness with speech presented to the deaf ear and background noise on the side of the good ear.

Here we have a sound source such as a lawnmower at full intensity, on the side of the good ear. Increasing the difficulty of this situation, the speech arriving at the good ear from the dead ear side will also be attenuated by about 6.5 dB. Patients experiencing SSD will have severe problems with speech intelligibility in this situation. At the same time, because one ear is working normally, the overall loudness of the situation may be perceived as normal. There is a contrast between normal loudness perception of the overall signal and the speech intelligibility that has deteriorated on this poorer ear. In other words, the overall signal is perceived as loud enough but not clear. In addition, SSD prevents the brain from using the cues of interaural differences because they are non-existent now. The brain is not able to locate the sound source which is a necessary part of resolving this complex listening situation. In addition to the obvious loss of sound awareness on the poorer side, there is an increased difficulty of poor spatial and directional hearing.
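The arithmetic behind this situation can be sketched as follows. The 65 dB levels for speech and noise are made-up example values, and `snr_at_better_ear_db` is a hypothetical helper. Only the 6.5 dB broadband head-shadow figure comes from the discussion above.

```python
def snr_at_better_ear_db(speech_db, noise_db, head_shadow_db=6.5,
                         speech_on_deaf_side=True):
    """Signal-to-noise ratio at the better ear, in dB.

    The noise is assumed to sit on the better-ear side, so it arrives
    unattenuated. Speech from the deaf side is reduced by the head shadow.
    """
    speech_at_ear_db = speech_db - head_shadow_db if speech_on_deaf_side else speech_db
    return speech_at_ear_db - noise_db


# Hypothetical levels: talker and noise source both produce 65 dB at the head.
print(snr_at_better_ear_db(65, 65))                             # talker on the deaf side
print(snr_at_better_ear_db(65, 65, speech_on_deaf_side=False))  # talker on the better side
```

In this example the talker on the deaf side ends up 6.5 dB below the noise, while the same talker on the better side would be level with it. Raising the overall volume does not help, because it raises speech and noise together.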

Consequences of SSD

There are several other consequences of SSD in addition to listening performance problems, including social, cognitive, emotional and psychological concerns. Examples of social consequences would be a patient at a family dinner party with people talking from two sides, at a business meeting with several people talking at the same time, or sitting in a car with a speaker on the poorer side. In these social situations, the patient would have some difficulty with speech intelligibility. Adding street noise or background noise to these situations will compound the problem, possibly preventing speech from being intelligible at all. Even more difficulties would be introduced if the speech and noise are moving, such as at a child's birthday party, where the correct direction of sounds would not be heard due to compromised localization.

Cognitive, emotional, and psychological effects can also occur. SSD can cause confusion for some people when some situations create little difficulties while others create extreme difficulties. Experiencing problems in one listening situation and not experiencing those problems in others can be very conflicting. With SSD, people may feel they do not have a constant hearing loss.

This hearing loss might be embarrassing because it is an "invisible" impairment to outsiders even though for the individual it is anything but invisible. Persons with SSD seem to have differing degrees of difficulty based on the listening environment, hearing well in some situations and poorly in others. Because of this, their peers might understand the problem even less than they would with a bilaterally hearing-impaired person, where communication difficulty might be more obvious because it occurs in all listening situations. Attempting to explain this can be confusing, embarrassing and difficult.

As a result of the confusion and embarrassment, clients may experience feelings of shame when they misunderstand or respond inappropriately during a communication situation. They might be annoyed by having continuing difficulties in everyday life or in many listening situations, and they might feel a little helpless because they simply miss important signals without having a second chance to solve or to clarify the issues. Consider, for example, a train station, which is very reverberant and very noisy. When a change of the platform is announced, the person with SSD might miss the message or misinterpret what was said without the opportunity to ask for clarification or have a second chance to hear the information, which can create frustration. On the other hand, this person might still have situations where they hear well with normal loudness perception, and therefore might not see themselves as a candidate for a hearing aid or CROS solution because of their normal ear or their ability to hear well in some situations.

The CROS Concept

How can we address this problem where a person is unable to hear signals from one side? Imagine again a person with SSD and the talker at the poorer side. What can be done to improve communication in this situation? One hearing solution is a CROS system. Sound directed to the poorer ear will be picked up using a microphone and then routed to the contralateral side and delivered to the better ear (Figure 4). This is the basic concept behind CROS solutions.

Figure 4. CROS concept with speech directed to poor ear and sound transmitted to better ear.

This, of course, can be especially beneficial if we think about noisy situations where the noise source is directed to the better ear. Audibility of the signals from the poorer side will be dramatically improved utilizing a CROS solution. If the better ear does not have normal hearing but is suitable for amplification, a microphone can be added to that side as well. In this case, we have a hearing aid configuration on one side (i.e., the better ear) and an additional microphone on the poorer side. This configuration is called a BiCROS. The name is somewhat contradictory, because only one side is routing the signal contralaterally.

CROS Indications

To determine CROS candidacy, consider the audiogram. A CROS candidate has a severe to profound hearing loss on one side and a normal hearing ear on the other side. A profound hearing loss is not typically suitable for a conventional hearing instrument. If the loss were any better than profound on the poorer ear, a regular hearing aid fitting might be more appropriate.

There might be reasons other than SSD for selecting a CROS hearing aid. There might be medical contraindications against conventional hearing aids, such as malformations of the ear canal or atresia. Other reasons for choosing a CROS system might include non-acceptance of conventional hearing aids on the poorer ear. Because there is no usable hearing in this ear, speech intelligibility may be very poor compared to the better ear. This type of fitting assumes normal or near normal hearing with adequate speech intelligibility in the better ear.

If the better ear does have a hearing loss that meets the indication for a hearing aid, this would be an appropriate candidate, audiologically speaking, for a BiCROS fitting which would amplify the signals originating on the better side in addition to the signal from the deaf side.

BiCROS Indications

BiCROS candidacy determination includes some of the same considerations as the CROS fitting. The poorer ear is a dead ear with no usable hearing and is not suitable for hearing instruments. However, for BiCROS, in the better ear there is a mild to moderate hearing loss that meets the criteria for a conventional hearing instrument fitting. In a BiCROS arrangement, the hearing loss on the better ear should not be so severe that we would not expect good hearing aid benefit. The ear should be easily aidable.
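The audiometric side of the CROS and BiCROS indications above can be summarized as a small decision sketch. This is a reading aid under simplifying assumptions, not a clinical rule: the function name, the category labels and the returned strings are all hypothetical, and it ignores the medical and motivational factors discussed elsewhere in this talk.

```python
def suggest_fitting(poorer_ear_aidable, better_ear_loss):
    """Map a simplified audiometric picture to a fitting type.

    poorer_ear_aidable: True if the poorer ear could still benefit from
        a conventional hearing instrument.
    better_ear_loss: one of "normal", "mild", "moderate", "severe", "profound".
    """
    if poorer_ear_aidable:
        # A regular hearing aid fitting might be more appropriate.
        return "conventional fitting"
    if better_ear_loss == "normal":
        # Unaidable poorer ear, normal or near-normal better ear.
        return "CROS"
    if better_ear_loss in ("mild", "moderate"):
        # Unaidable poorer ear, easily aidable better ear.
        return "BiCROS"
    # Better ear too poor to expect good hearing aid benefit.
    return "outside the candidacy described here"


print(suggest_fitting(False, "normal"))    # CROS
print(suggest_fitting(False, "moderate"))  # BiCROS
```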

Patient Considerations for CROS/BiCROS Candidacy

Let's turn the perspective to the patient. Individuals with SSD can be pretty demanding patients because of high expectations for hearing aids due to the better ear. For example, they might compare the sound quality of the aided signal to that of their better ear. Therefore, it is essential to provide the best possible aided sound quality, since the patient might be unable or unwilling to tolerate negative impacts of hearing aids, such as circuit noise or distortion, interference with other devices, or handling problems. There might also be an unwillingness to accept these issues due to their lifestyle or occupation, or they might simply be very demanding with their expectations.

To create good motivation towards amplification and to demonstrate potential benefit to the patient, it is important to select those patients who are experiencing significant problems with their SSD. Otherwise, they might be disappointed at not having enough perceived benefit from a technical device. They should be in need of improvement in speech intelligibility for a number of listening situations, especially in background noise. Additionally, there are situations where spatial hearing is important, such as in traffic where there is a need to hear the direction of the cars and safety signals. In general, the patient must have the wish to reduce their listening effort in significant listening situations and want relief from auditory fatigue in daily life.

Benefits of CROS/BiCROS

There are certainly benefits achievable from the CROS or BiCROS solution. These devices can eliminate the head-shadow effect by amplifying the signals received on the unaidable side. Benefit in complex listening situations such as meetings, walking side-by-side, driving a car, and listening in restaurants can also be achieved by improving the signal-to-noise ratio (SNR). This is especially significant if the signal originates from the poorer side and the predominant noise source is on the better side when the head acts as a baffle attenuating the higher frequencies. These higher frequencies will be picked up by the microphone, transmitted to the better side and then delivered to the better ear. Because signals from the poorer side are also heard, signal audibility from both sides is restored.

Because signals from both sides are being received and amplified at the better ear, care must be taken to balance the signals arriving from both sides. Normally the signals from the poorer side will be slightly amplified, but you must be cautious not to over-amplify signals. With a CROS fitting, you will have subtle sound differences if you have the same signal on both sides, because the signal from the poorer side will go through the amplification. Many CROS clients report that they develop a sort of pseudo-stereophony, where they can distinguish sounds from both sides by using subtle sound differences between the signals picked up by the CROS microphone and those signals coming directly to the better ear. Since all the signals are delivered to one ear, this will not be true stereophony, because people will still not hear with both ears. They can, however, develop certain abilities in directional hearing which we call pseudo stereophony.

Methods of Signal Transmission

Transcranial transmission

The key point in the CROS solution is the signal transmission from the poorer ear to the better ear, and there are several ways to achieve that. One way is to transmit the sound transcranially through the bones in the skull. It has been proposed to use high-power hearing aids in the deaf ear and turn up the volume high enough to stimulate bone conduction that can transmit the sound to the contralateral cochlea. To my knowledge, this has not been widely employed because it is a very ineffective way to stimulate bone conduction. A very high input level is needed to achieve significant bone conduction, so of course the poorer ear must be completely deaf, because otherwise the patient would not be able to tolerate such high levels of sound. Furthermore, a system with gain and output high enough to create bone conduction will be very prone to feedback.

A more technical and current approach to the transcranial CROS is a bone-anchored system such as the Cochlear BAHA or the Oticon Ponto. With these systems patients have to undergo a minor surgical procedure to set the abutment in the skull for the devices. Disadvantages to this procedure include the obvious risk of infection from the implanted screw at the abutment site. From a cost perspective, the surgery and bone-anchored systems cost much more than a conventional hearing aid or a conventional CROS solution. The cost-benefit ratio might not be advantageous for these kinds of bone-anchored systems over a CROS solution. To my knowledge, there are no proven audiological benefits of these systems over a conventional CROS solution.

Wired transmission

A third solution which has the most widespread use is the "classical" CROS with a wired connection between the transmitter microphone on the deaf ear and the hearing aid on the better ear (Figure 5). The wire is an open wire, but you can make it a little more elegant by mounting the wire and the hearing aids to a pair of glasses, where the wire might be a little bit more protected (Figure 6).

Figure 5. Classical hard-wired CROS system with a visible wire between the transmitter and receiver.

Figure 6. CROS system hard wired into a pair of eyeglasses. Note the hearing aid on the left arm and the microphone unit on the right temple. The wire is mounted within the frame.

The eyeglasses solution is much more discreet than the wire running between the two ears. The cable is better protected than in the classical wired CROS solution, and the whole system is cosmetically much more attractive than a classical solution (Figure 6). However, a severe drawback to the eyeglass solution is that hearing is always linked to vision. Loss, discontinued use, or need for repair of either system will result in the loss of the other system as well.

Wireless transmission

The latest advancement in CROS technology is analog or, most recently, digital wireless transmission of the signal, avoiding the wire between the two units. With the wired systems, an audio shoe is required to connect the wire to the hearing aid as an audio input. The added hardware and connections reduce system durability and increase the need for repairs. In addition to the unappealing cosmetics of this type of system, having two units with a cable between them can also be a bit inconvenient, with the added risk of accidents where the cable gets tangled or breaks. You can plan on routine cable replacements with wired systems. However, wired systems are a robust and generally inexpensive solution, and can even be retrofitted in some cases to existing hearing aids. An existing behind-the-ear (BTE) hearing aid on one side that has a direct audio input can be retrofitted to a BiCROS solution by adding the microphone on the other ear.

To avoid the wire, a wireless analog radio transmission between the systems using any of the well-known wireless transmission technologies like amplitude modulation (AM) or frequency modulation (FM) can be utilized. Systems available with analog wireless technology typically use a few-hundred kilohertz transmission frequency.

The obvious advantage of wireless analog transmission is that you do not need a cable or an audio shoe. For some systems the transmitter can be housed in an in-the-ear (ITE) custom shell; however, the antenna limits how small the system can be. Some of the systems might use a behind-the-ear (BTE) or ITE on the transmitting side, which may be cosmetically appealing to some users.

There are a number of other disadvantages to the use of wireless analog transmission systems. First, there is a high risk of interference and distortion from other electromagnetic fields in the immediate environment. Examples include computer monitors, fluorescent lights, mobile phones or security systems. All alternating electromagnetic fields can interfere with these systems. There is also a higher power consumption compared to a wired CROS solution due to the energy needed to drive the radio transmission. Since the link is not paired and the signal is not encoded, any receiver can pick up the radio signals from any other transmitter. Consequently, if two systems are close to each other, they can mutually interfere and both listeners could possibly hear signals from both transmitters.

audifon via

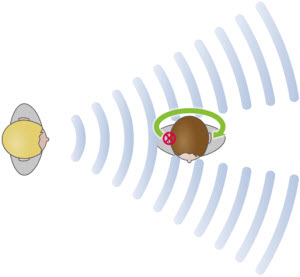

audifon has introduced a new system using digital wireless transmission technologies, housed in a cosmetically pleasing BTE configuration on both the transmitter and the receiver ear. The audifon via uses the well-known Near-Field Magnetic Induction (NFMI) technology. via is available in the latest binaural hearing systems, and enables real-time broadband signal transmission with very low power consumption (Figure 7).

One small disadvantage of digital transmission is that the incoming signals have to be coded and then decoded on the other side. This digital transmission process will introduce a slight time delay for the signal transmitted to the other ear. This delay is non-existent in analog or hard-wired systems. To deal with this digital transmission time delay, the audifon via system has added a unique feature called "sound resync", which slightly delays the signal on the receiving side to resynchronize the signals from both sides. That is especially helpful in a BiCROS configuration. Imagine a signal coming from the front, which will arrive at both ears at the same time. When the system digitally transmits the sound from one ear to the other, the two signals will no longer occur at the same time in the receiving system, because the transmitted signal will be slightly delayed. The "sound resync" feature synchronizes both signals so that they arrive at the better ear simultaneously again.
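The general idea of delaying the local signal to match a delayed transmitted signal can be illustrated with a toy example. This is not audifon's implementation, whose details are not given here. The three-sample link delay, the click signal and the helper names `delay` and `mix` are all assumptions made for illustration.

```python
def delay(signal, n_samples):
    """Delay a signal by n_samples, zero-padding the start and keeping the length."""
    if n_samples <= 0:
        return list(signal)
    return ([0.0] * n_samples + list(signal))[:len(signal)]


def mix(a, b):
    """Sum two equally long signals sample by sample."""
    return [x + y for x, y in zip(a, b)]


LINK_DELAY_SAMPLES = 3  # hypothetical coding/decoding delay of the wireless link

# A click from the front reaches both microphones at the same moment.
click = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
direct = click                               # picked up at the better ear
received = delay(click, LINK_DELAY_SAMPLES)  # arrives late over the link

unsynced = mix(direct, received)                             # two separate clicks
resynced = mix(delay(direct, LINK_DELAY_SAMPLES), received)  # one combined click

print(unsynced)
print(resynced)
```

Without the extra delay, the single click shows up twice in the mixed signal. With the local signal delayed by the same amount as the link, the two copies line up again into one event.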

Figure 7. Digital wireless transmission between transmitter and receiver units. This transmission can happen synchronously with audifon's sound resync feature.

The advantages of this system are numerous. First, the features that are available from other audifon binaural hearing systems are available on the via devices as well. An added function is the "easyclick" feature that synchronizes program switching and volume settings on both sides with the click of one button on just one side which is especially helpful in the BiCROS configuration. An additional advantage of digital wireless transmission is that there is no need for a cable or audio shoes or special eyeglass constructions. The digital sound transmission produces superior sound quality that is resistant to interference with other systems and is much more stable than in the analog amplitude-modulation wireless systems. In a CROS/BiCROS configuration, the transmitter and receiver devices are paired as a binaural hearing system, with a secure, encrypted transmission. Therefore, even if there are two systems operating in close proximity they will not interfere with each other. Each system will only receive signals from its own transmitter. Encrypted transmission is only possible with a digital system.

NFMI results in less energy consumed from the hearing aid batteries. Systems using 2.4GHz technology, for example, might have significantly higher power consumption than hearing aids using alternative technologies. Due to the low radiation power, NFMI systems can be used worldwide without any registration or regulation required.

One potential disadvantage to digital wireless transmission is the time delay introduced by the digital transmission of the signals. This delay, as previously mentioned, is not an issue in the audifon via system due to the sound resync feature.

CROS/BiCROS Fitting Strategy

When considering the fitting strategies for wireless CROS/BiCROS solutions, we first have to consider the microphone on the deaf ear. Typically, this microphone will be in a BTE configuration, which means that the microphone will not be placed in the ear canal or at the eardrum where sound is naturally received, but rather on the top or back of the pinna. In a hearing aid or CROS configuration, we must compensate for this microphone location or microphone location effect (MLE) by emulating the open ear gain.

Next, the head-shadow effect (HSE) must be considered. Transmitting the signal to the better side means crossing over or around the head and then amplifying the signal at the better ear to compensate for the MLE. Care must be taken not to over-amplify the transmitted signal when compensating for the microphone location effect. We might introduce a small amount of amplification to distinguish the signal from the poorer side, but not so much that the poorer side sounds better than the good ear.

Obviously, there must be a means of delivering the sound to the better ear. In a CROS or BiCROS solution the receiver will be linked to the ear by some sort of earmold or other means. We have to be very cognizant of the acoustical changes that can occur when placing an earmold in an ear canal, from occlusion, venting and attenuation of environmental sounds. This can be referred to as the hearing aid configuration effect, which must be compensated for as well. If there is a closed earmold, the open ear gain and the normal acoustical environment at the better ear must be restored. While the use of open-ear fittings significantly improves CROS and BiCROS fittings, closed earmold fittings are often necessary, and their effects must be considered.

For the BiCROS fitting strategy, many similar considerations should be taken into account, such as the microphone location effect, the head-shadow effect, transmission of the signal to the better side, and balancing both sides. In addition to compensating for the transmission configuration on the poorer side, in a BiCROS configuration we also have to consider the hearing loss on the better ear. A BiCROS fitting is a combination of a unilateral hearing aid fitting at the better ear with a CROS signal linked into its signal path. Again, it is important to balance the signals on both sides, and modern systems can typically accomplish this using a volume control (VC), if one is available on the instrument. This has been a brief introduction to each of these strategies, which together comprise a CROS/BiCROS fitting. In-depth discussion regarding each of these strategies is beyond the scope of this course but will be covered in upcoming audifon e-learning courses.

Summary

The most important message of this discussion is that today's CROS and BiCROS fittings are a convenient solution for clients with SSD, but also for those with very asymmetric hearing losses where conventional amplification is not possible. Many issues that are inherent in wired CROS and BiCROS arrangements are solved by wireless configurations. Digital wireless solutions further improve the configuration and performance options of the system as a whole, solving many of the problems inherent to analog wireless solutions. A completely digital wireless transmission system can include features that provide the proven benefits of traditional hearing systems, such as directional microphones, noise reduction, phase cancellation and others. Additional features of the audifon via system, including the easyclick synchronization of program and volume settings between devices, advanced digital wireless processing, and the sound resync feature for resynchronizing signals between ears, make today's wireless CROS/BiCROS systems effective options for SSD.

Further Reading on CROS/BiCROS

Dillon, H. (2001). Hearing aids. Stuttgart, Germany: Thieme.

Hayes, D. (2006). A practical guide to CROS/BiCROS fittings. AudiologyOnline, Article 1632. Retrieved October 1, 2011 from: www.audiologyonline.com/articles/article_detail.asp?article_id=1632

Hill, S. L., Marcus, A., Digges, E.N.B., Gillman, N., Silverstein, H. (2006). Assessment of patient satisfaction with various configurations of digital CROS and BiCROS hearing aids. Ear, Nose and Throat Journal 85(7), 427-430.

Taylor, B. (2010). Contralateral routing of the signal amplification strategies. Seminars in Hearing, 31(4), 378-392.

Voelker, C. C. J., & Chole, R.A. (2010). Unilateral sensorineural hearing loss in adults: Etiology and management. Seminars in Hearing, 31(4), 313-325.

Cutting the Wire: What's New in CROS/BiCROS Technology?

December 12, 2011

Related Courses

1

https://www.audiologyonline.com/audiology-ceus/course/auditory-wellness-what-clinicians-need-36608

Auditory Wellness: What Clinicians Need to Know

As most hearing care professionals know, the functional capabilities of individuals with hearing loss are defined by more than the audiogram. Many of these functional capabilities fall under the rubric, auditory wellness. This podcast will be a discussion between Brian Taylor of Signia and his guest, Barbara Weinstein, professor of audiology at City University of New York. They will outline the concept of auditory wellness, how it can be measured clinically and how properly fitted hearing aids have the potential to improve auditory wellness.

auditory

129

USD

Subscription

Unlimited COURSE Access for $129/year

OnlineOnly

AudiologyOnline

www.audiologyonline.com

Auditory Wellness: What Clinicians Need to Know

As most hearing care professionals know, the functional capabilities of individuals with hearing loss are defined by more than the audiogram. Many of these functional capabilities fall under the rubric, auditory wellness. This podcast will be a discussion between Brian Taylor of Signia and his guest, Barbara Weinstein, professor of audiology at City University of New York. They will outline the concept of auditory wellness, how it can be measured clinically and how properly fitted hearing aids have the potential to improve auditory wellness.

36608

Online

PT30M

Auditory Wellness: What Clinicians Need to Know

Presented by Brian Taylor, AuD, Barbara Weinstein, PhD

Course: #36608Level: Intermediate0.5 Hours

AAA/0.05 Intermediate; ACAud inc HAASA/0.5; AHIP/0.5; BAA/0.5; CAA/0.5; Calif. SLPAB/0.5; IACET/0.1; IHS/0.5; Kansas, LTS-S0035/0.5; NZAS/1.0; SAC/0.5

As most hearing care professionals know, the functional capabilities of individuals with hearing loss are defined by more than the audiogram. Many of these functional capabilities fall under the rubric, auditory wellness. This podcast will be a discussion between Brian Taylor of Signia and his guest, Barbara Weinstein, professor of audiology at City University of New York. They will outline the concept of auditory wellness, how it can be measured clinically and how properly fitted hearing aids have the potential to improve auditory wellness.

2

https://www.audiologyonline.com/audiology-ceus/course/vanderbilt-audiology-journal-club-clinical-37376

Vanderbilt Audiology Journal Club: Clinical Insights from Recent Hearing Aid Research

Presented by Todd Ricketts, PhD, Erin Margaret Picou, AuD, PhD, H. Gustav Mueller, PhD

Course: #37376; Level: Intermediate; 1 Hour

AAA/0.1 Intermediate; ACAud inc HAASA/1.0; AHIP/1.0; ASHA/0.1 Intermediate, Professional; BAA/1.0; CAA/1.0; Calif. SLPAB/1.0; IHS/1.0; Kansas, LTS-S0035/1.0; NZAS/1.0; SAC/1.0

This course will review new key journal articles on hearing aid technology and provide clinical implications for practicing audiologists.

3

https://www.audiologyonline.com/audiology-ceus/course/61-better-hearing-in-noise-38656

61% Better Hearing in Noise: The Roger Portfolio

Presented by Steve Hallenbeck

Course: #38656; Level: Introductory; 1 Hour

AAA/0.1 Introductory; ACAud inc HAASA/1.0; AHIP/1.0; BAA/1.0; CAA/1.0; IACET/0.1; IHS/1.0; Kansas, LTS-S0035/1.0; NZAS/1.0; SAC/1.0

Every patient wants to hear better in noise, whether celebrating over dinner with a group of friends or on a date with a significant other. Roger technology provides a significant improvement over normal-hearing ears, hearing aids, and cochlear implants to deliver excellent speech understanding.

4

https://www.audiologyonline.com/audiology-ceus/course/easy-to-wear-hear-for-39936

Easy to Wear, Easy to Hear, Easy for You…Vivante

Presented by Kristina Petraitis, AuD, FAAA

Course: #39936; Level: Intermediate; 1 Hour

AAA/0.1 Intermediate; ACAud inc HAASA/1.0; BAA/1.0; CAA/1.0; IACET/0.1; IHS/1.0; Kansas, LTS-S0035/1.0; NZAS/1.0; SAC/1.0

Your patients can benefit from a broader portfolio and range of style choices, so they can choose the best model for their needs. We will review why Vivante is easy to wear, easy to hear, and easy for you, focusing on sound performance, connectivity, and personalization. Be ready to open up to a wide world of choice.

5

https://www.audiologyonline.com/audiology-ceus/course/real-ear-measurements-the-basics-40192

Real Ear Measurements: The Basics

Presented by Amanda Wolfe, AuD, CCC-A

Course: #40192; Level: Introductory; 0.5 Hours

AAA/0.05 Introductory; ACAud inc HAASA/0.5; AHIP/0.5; ASHA/0.05 Introductory, Professional; BAA/0.5; CAA/0.5; IACET/0.1; IHS/0.5; Kansas, LTS-S0035/0.5; NZAS/1.0; SAC/0.5

Performing real-ear measures ensures patient audibility; therefore, it has long been considered a recommended best practice. The use of REM remains low due to reports that it can be time-consuming. This course will discuss the very basics of REMs.