Editor’s Note: This text course is an edited transcript of a live seminar.

Greetings and welcome to the presentation today, entitled Fit to Optimize Audibility or Patient Preference: A Review of the Evidence. We are going to be talking about two different fitting philosophies, including some germane studies related to each one of these philosophies. We will look at some of the similarities and differences on how those studies relate to the patient with these two different fitting philosophies. We are also going to look at some of the points that are experienced both by clinicians and patients related primarily to the initial use of amplification, and then at the end we will look at how we can bridge these two different fitting approaches with something called an automatic adaptation manager.

Hearing Aid Fitting Approaches

Fitting philosophies are kind of like tastes in music. That is, there is really no right answer. Everyone has different tastes in music. Sometimes music might seem a bit strange or out there for one person, and another person may have a taste that seems boring and mundane to others. Sometimes I think these personal tastes in music can reflect your core personal beliefs. When it comes to a hearing aid fitting, there is what I call the “one-question litmus test” to see what your fitting philosophy is. That clinical question is, “When it comes to the success of your patient, what do you consider most important?” Here is how I think this relates to musical tastes.

There are two different answers to that question, neither being right or wrong. One answer is that you want immediate patient acceptance, “I want my patient to like it from day one. It is very important when they walk out today that they are happy and accepting of the hearing aid and the way it sounds.” A second approach that focuses on long-term benefit is, “Initial use might be challenging, but if they stick with it a while, their frustration with things sounding too loud and too sharp will be rewarded by getting more long-term benefit from the instruments.”

When it comes to relating this to musical tastes, I think the immediate-acceptance model is really about bubble gum pop music. Who better to exemplify that than Katy Perry? Her music is easy to listen to, easy to like right away, easy to pound your feet to the beat and may be considered ear candy to most people. On the other hand, I would say a band like Radiohead exemplifies the long-term benefit approach, meaning, you might not get them right away. It takes a little longer to appreciate them, but if you stick with it for a while, they start to make a lot of sense and it is really interesting music. After all, with a band like Radiohead that has two drummers, what is not to like? I think that they are a good example of how when you stick with something, you can get some long-term benefit. So we have the Katy Perry approach, which is immediate acceptance, and we have the long-term benefit approach which in my opinion is best exemplified by Radiohead. That is my fun musical analogy for the day.

In terms of prescriptive fitting approaches, let’s talk about the similarities between the approaches. If you think about it, regardless of your fitting philosophy, you either want the patient to be happy from day one, or you expect them to have to stick with it a while to get long-term benefit. At the foundation of each is the use of a prescriptive fitting formula. If you are someone who subscribes to the belief of long-term benefit, then you would likely be using some type of an independently-derived fitting formula that is designed to optimize audibility and comfort of sounds. Of course, we have several independently-validated generic formulas. The two best examples are the family of formulas that come from the National Acoustic Laboratories (NAL) group in Australia and the Desired Sensation Level (DSL) group in Canada. We will go into more detail on that as we move through the next hour.

On the other side of things, if you subscribe to the immediate acceptance philosophy, you still might be relying on some type of a fitting formula, but very often these fitting formulas are proprietary in nature. There is some derivative of the generic formula that the manufacturer uses to match a target, but those targets are not to restore audibility and comfort; they are primarily there for immediate patient acceptance. Oftentimes these proprietary targets have considerably less gain than their independently derived cousins.

Let’s look a bit more closely at the immediate-acceptance approach. Remember, if this is the philosophy that you subscribe to, then it boils down to giving the patient what he wants on day one. Make sure that he is accepting and happy when he walks out the door. If you subscribe to this belief, you still can use a prescriptive fitting target to make sure things look good on paper, but remember that the manufacturer’s prescriptive target is going to undershoot prescribed gain to maximize audibility by as much as 20 dB in the high frequencies. Some studies that were published almost 10 years ago showed that these proprietary formulas which are used to optimize patient acceptance often have between 5 and 20 dB less gain relative to the generic formula, especially in the high frequencies.

If you compare that to the long-term benefit approach, which is to give the patient what he needs to maximize audibility and comfort over the long-term, these are the types of formulas that are going to restore audibility and comfort for soft, average and loud sounds. If you are a believer in giving your patient long-term benefit, then you should be using at least one of the independently-derived prescriptive formulas, because this is exactly what they were intended to do: optimize audibility and comfort across different input levels. As I already mentioned, the DSL and NAL families of targets have evolved over time and are designed to do that. No matter your fitting philosophy, each one of those approaches has a few minor drawbacks that are important to note.

Drawbacks

One of the drawbacks associated with the long-term benefit approach that all of us deal with from time to time is that when we match these targets in our clinic, especially for an inexperienced user, they often complain that things sound too harsh, too sharp, or even too loud for the simple reason, perhaps, that they have not heard those sounds in such a long time. Of course if we stick to that method and ask our patient to wear it a while and get used to it, we know from our own experience that this can sometimes lead to the patient not wearing the hearing aids. The hearing aid is in the drawer too much, or the patient has lower-than-expected benefit simply because they never fully acclimated to the sound that we are trying to give them. Manufacturers listen to their customers, who are the hearing aid fitters, and over the last decade or so, manufacturers’ first-fit or acclimatization managers have attempted to address this issue by providing the patient with less than optimal gain in the beginning with the hopes of easing the patient into the proper amount of gain over time.

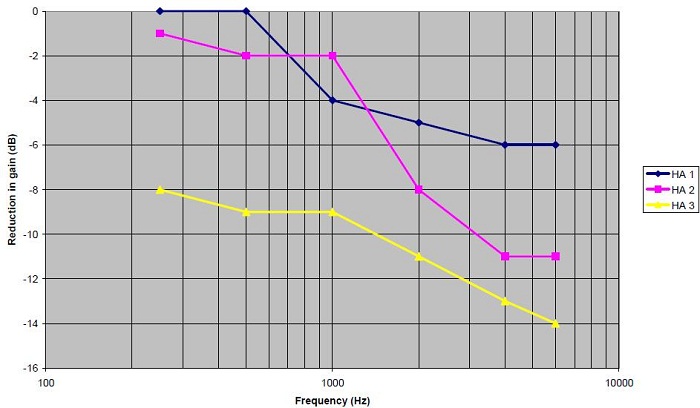

In Figure 1, you see an example of three different adaptation managers in hearing aids which attempt to get at this concept of easing the patient into the proper amount of gain. What you can notice on this slide is that all three of the hearing aids reduce gain by a certain amount. Hearing aid #3 in yellow reduces gain from the prescribed target by 8 to 14 dB in the high frequencies, hearing aid #2 reduces gain by 10 to 12 dB in the high frequencies, and hearing aid #1 reduces gain by only about 6 dB in the high frequencies.

Figure 1. Gain reduction for soft inputs in three different hearing aids with adaptation managers for a moderate, sloping hearing loss.

The amount of attenuation from the prescribed independent target really does vary across manufacturers. Typically these adaptation managers are not automatic, meaning that the patient has to come into the office for the hearing aid fitter to tweak the hearing aid over time to match the prescribed target. My point is that there is quite a bit of gain reduction with these adaptation managers, and it does vary across manufacturers. In most cases, with a few exceptions, these adaptation managers do not automatically increase gain over time. They rely on the patient coming into the office, which can be an inconvenience for the patient, and also from a business standpoint for the clinician, as those appointments can take up a lot of time getting the user to a point where audibility is restored. Those are some of the drawbacks associated with the long-term benefit approach.

What about the first-fit acceptance approach? There are some pretty serious drawbacks related to this approach. You can pat yourself on the back when the patient walks out the door on day one, or maybe even on day 30, if they are happy with the way the hearing aids sound. But is the patient receiving the proper amount of gain for any lost speech cues that need to be made audible? Of course, the end result can be the same as it is for someone who is given too much sound, but if they are not given adequate sounds to restore audibility, that could lead to lower-than-expected benefit because they are not hearing things. Of course the end result would be that the hearing aids end up in the drawer and they are not being used, which does not benefit anyone. The end result with either one of the fitting approaches would be nonuse and lower-than-expected benefit.

Starting Point Matters

What I really want to get into next is that your starting point makes a tremendous amount of difference to the fitting. There is one study that really illustrates this point well. We will get into some of the nuts and bolts of the study in just a moment. But the main point here is that what the patient walks out the door with, as far as amplification is concerned, is where they end up staying for various reasons.

One study that illustrates the point well was published a couple of years ago in the Journal of the American Academy of Audiology (Mueller, Hornsby, & Weber, 2008). There were 22 participants in this study. They took this group of participants, fit them with a trainable hearing aid, and then they counterbalanced the group and fit them 6 dB above the NAL-NL1 target for a period of time. They took another part of the group and they fit them 6 dB below the NAL-NL1 target. They altered the starting point for each group. Then they looked at the users’ preferred gain and their overall satisfaction with those ratings about two weeks after they were fit with that particular amount of gain.

The results are very interesting. For the group that started 6 dB below the NAL target, the mean and the median data show that the group ended up being 5 dB below the NAL target. What this really tells you is that patients have the tendency to train around the starting point. If they started at -6 dB, they ended up on average at -5 dB. There were a few people that participated in the study that were above 0 dB, but there were also a significant number that went below -10 dB. So there was a lot of individual variability, which I think is important to note. Oftentimes we look at the averages in studies, but in clinical practice we know the patients that lead to the most frustration are those individual outliers. In this particular data set, there are definitely outliers.

The same group that started 6 dB above the NAL target had, on average, a tendency to train above the NAL target. So they may have started at +6 dB and, on average, they ended at +5 dB. The bottom line is that the starting point matters, and patients in this particular study (Mueller et al., 2008) had a tendency to train around that starting point. If you think about it from a practical standpoint, if your philosophy is immediate patient acceptance and you start off well below the NAL prescribed target, patients are not going to train themselves up to where they really need to be. They are going to stay well below that target, which can be problematic when we are looking for long-term benefit, which would come from the restoration of audibility and comfort. I think this is a really interesting study that merits some consideration.
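To make the arithmetic behind "training around the starting point" concrete, here is a minimal sketch that uses only the average offsets quoted above (-6 to -5 dB and +6 to +5 dB re: NAL-NL1). The variable names are illustrative and are not taken from the study.

```python
# Average gain offsets relative to the NAL-NL1 target, in dB, as quoted in the talk.
start_offsets = {"started below target": -6.0, "started above target": 6.0}
trained_offsets = {"started below target": -5.0, "started above target": 5.0}

# How far each condition moved from where it started (positive = more gain).
drift = {name: trained_offsets[name] - start_offsets[name] for name in start_offsets}

# Distance between the two trained settings, even though the target was the same.
gap = trained_offsets["started above target"] - trained_offsets["started below target"]

for name, change in drift.items():
    print(f"{name}: moved {change:+.0f} dB from the starting point")
print(f"Gap between the two trained settings: {gap:.0f} dB")
```

On these averages, each condition moved only about 1 dB toward the target, so the two trained settings remained roughly 10 dB apart.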

Another part of the study included a satisfaction rating, which is another component of the relationship between starting point, audibility, and satisfaction. One thing to note is that their starting satisfaction level in relation to the ending satisfaction actually went up a little bit. For those that were somewhat satisfied in the beginning, for both groups, they actually ended up being a little bit more satisfied when it was over. This shows you that even for people that start well above or well below the prescriptive target, their overall satisfaction has a tendency to go up a little bit.

I wanted to point out one other thing. There was a tendency for those that were very dissatisfied in the beginning, especially for the group that started 6 dB above target, for their dissatisfaction to be reduced a little bit. That lends some credence to the idea that acclimatization occurs when patients are given an opportunity to get used to the amplification. This was an interesting study on a number of levels.

To summarize this study, participants had a tendency to train around the initial starting point. Again, if you start well below or well above target, patients have a tendency to stay there. There were a lot of individual differences in preferred gain. Oftentimes, we focus on the mean, but it is the individuals that are meaningful when we are working in a clinic. The last point is that when starting gain was 6 dB above the target, participants were less satisfied with loudness compared to when the starting point was 6 dB below the target. That is a little bit of data showing that when you start below the prescriptive target, overall satisfaction is probably a little bit better.

Prescriptive Fitting Approaches

I would like to take a deep dive into some of the prescriptive fitting approaches that we use. Just to remind everyone, prescriptive fitting approaches have been around for a long time. The first one that I know about came from Sam Lybarger, who is a very influential person in our field. He was the first person to talk about this idea of a half-gain rule (Lybarger, 1963), which prescribes gain equal to about half of the pure-tone threshold. In today’s world, there are really two different types of independently-derived prescriptive formulas. We have the loudness normalization approach and we have the loudness equalization approach.
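As a quick illustration of the half-gain idea, here is a minimal sketch that applies the bare rule as stated above: gain equals half the pure-tone threshold at each frequency. The audiogram values and the function name are hypothetical, and any frequency-specific corrections used in practice are deliberately left out.

```python
def half_gain_rule(thresholds_db_hl):
    """Return gain in dB per frequency as half the pure-tone threshold (dB HL)."""
    return {freq_hz: 0.5 * hl for freq_hz, hl in thresholds_db_hl.items()}

# Hypothetical sloping audiogram (frequency in Hz -> threshold in dB HL).
audiogram = {500: 30, 1000: 40, 2000: 50, 4000: 60}

gains = half_gain_rule(audiogram)
for freq_hz, gain_db in gains.items():
    print(f"{freq_hz} Hz: threshold {audiogram[freq_hz]} dB HL -> gain {gain_db:.0f} dB")
```

Modern formulas such as NAL-NL2 and DSL are far more elaborate than this, which is why the sketch should be read only as the historical starting point of the idea.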

What are the differences between normalization and equalization? For many of you, this may be somewhat of a review, but I think it is important to do nevertheless. The role of loudness normalization is to restore loudness perception at each frequency. This means that the low frequencies get as much gain as the higher frequencies. The whole idea here is to provide the listener with a hearing impairment with the same overall perception of loudness as someone who has normal hearing. Some good examples of loudness normalization prescriptive approaches are the original DSL, the Fig6 formula which was developed several years ago by Mead Killion, and the IHAFF (Independent Hearing Aid Fitting Forum) formula developed about 15 years ago, which was a normalization formula. It is important to note that these fitting strategies in their original forms are not used as much clinically these days. I do not know of any current probe-microphone equipment or manufacturers that use those in their fitting formula selections. They are certainly not very popular these days, and they are not very easily obtained commercially either. That is the normalization approach.

We can compare that to the equalization approach. The idea here is that we want to equalize the perception of loudness over a range of frequencies. From a practical standpoint, that means we do not give low-frequency sounds as much gain because we are trying to equalize loudness across the entire spectrum. This is the case with loudness for those with normal hearing. Typically, equalization formulas apply slightly less gain, especially in the low frequencies, relative to the normalization formula.

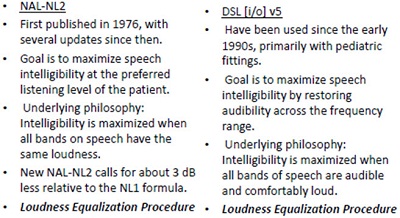

Some examples of equalization formulas would be the newer DSL i/o version 5, which is for adults, the newest version from NAL, NAL-NL2, and also one that comes from Brian Moore which is the CAMEQ-HF. Those are three examples of equalization. The two most popular are the NAL family and the DSL family, which are compared in Figure 2. I am going to spend a little bit of time talking in more detail about these two. Both of these formulas have been around for a long time, especially the NAL, since the mid-70s. The goal, of course, is to maximize speech intelligibility at the preferred listening level of the patient. The underlying philosophy is to maximize intelligibility when all the bands of speech have the same loudness. We know that NAL-NL2 calls for about 3 dB less gain relative to the NAL-NL1 formula. This is a loudness equalization procedure.

Figure 2. Comparison of history and features of NAL-NL2 and DSL i/o v5.

The goal and underlying philosophy with DSL i/o version 5 is very much the same thing as NAL-NL2. Even though the original DSL was a loudness normalization procedure, if you dig around a little bit and read some of the newer reports from the DSL group, they call their version 5 formula a loudness equalization procedure. So over time, both of these formulas have become very similar.

Only loudness equalization formulas are used clinically. There are independently-derived or generic formulas that are available today, and we know that many manufacturers have developed their own formula in an attempt to maximize patient acceptance, rather than maximize audibility and overall comfort of speech.

I wanted to spend a few minutes talking about a study that was published by Johnson and Dillon (2011), comparing differences in gain and compression in the NAL-NL2 and DSL multiband i/o version 5 formulas on speech intelligibility scores. It is very interesting, and I would encourage you to read it. They included nine different audiograms of varying degrees that were programmed into the formula. There were sensorineural, mixed and conductive hearing losses.

They also included insertion gain data for four different types of hearing loss using the two fitting formulas. Between about 1,000 and 3,000 Hz, the prescribed insertion gain is similar, within about 5 dB or so, for these sensorineural type hearing losses. There were significant differences in the high frequencies, especially for the CAMEQ. Remember the CAMEQ formula is really the only one that I know of that is specifically designed for wideband hearing aids that go out beyond 6,000 Hz. It calls for a significant amount of gain and looks at gain for extended-bandwidth devices. There were some large differences in the low frequencies for various reasons as well.

This study also looked at differences in compression ratios that were prescribed. Johnson and Dillon (2011) evaluated the differences in compression ratios at 500 and 2,000 Hz for five different hearing losses and three different fitting formulas. These are two very important frequencies that we look at when we fit hearing aids. For the most part, those compression ratios ranged from about 1.2 to about 2.3 or 2.5, which were not huge differences at those two frequencies. My point here is that, especially for NAL-NL2 and the DSL, which seem to be the most popular, there is very little difference between the prescribed gain and the prescribed compression ratio.
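For readers who want a reminder of what a compression ratio means in practice, here is a minimal sketch of a single-channel input/output function: above the compression kneepoint, every `ratio` dB of additional input produces 1 dB of additional output. The kneepoint (50 dB) and gain (30 dB) are made-up values for illustration only, and the function is an assumption rather than how any of the formulas in the study are implemented.

```python
def output_level(input_db, ratio, knee_db=50.0, gain_at_knee_db=30.0):
    """Output level in dB for a simple compressor: linear below the knee, compressed above it."""
    if input_db <= knee_db:
        return input_db + gain_at_knee_db
    # Above the knee, each `ratio` dB of input adds only 1 dB of output.
    return knee_db + gain_at_knee_db + (input_db - knee_db) / ratio

# Compare the low and high ends of the ratio range mentioned above.
for ratio in (1.2, 2.5):
    levels = [output_level(level, ratio) for level in (50, 65, 80)]
    print(f"ratio {ratio}: outputs for 50/65/80 dB inputs = "
          + ", ".join(f"{out:.1f}" for out in levels))
```

A ratio of 1.0 would be linear amplification; the higher the ratio, the less the output grows as the input gets louder.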

What about loudness? The aided loudness was averaged for those five different types of sensorineural hearing losses using a 65-dB input signal. The take-away point is that for the two most popular formulas today, NAL-NL2 and DSL i/o, the overall aided loudness rating is virtually identical. There was a pretty significant reduction in overall loudness when patients are fitted with the NL2 relative to the NL1. There is about a 3 to 4 dB difference in overall perception of loudness with the NL2 relative to the NL1. Again, there is very little perceptual difference in loudness across those five different sensorineural hearing losses when you use DSL and NAL-NL2.

Johnson and Dillon (2011) looked at the speech intelligibility index (SII) in this study, which is a calculation looking indirectly at audibility to determine how much speech should be intelligible to the listener. The study goes into a couple of different calculations of the SII, but the main point here is that when audibility is restored using any one of these fitting formulas, speech intelligibility is up around 100%. The bottom line is that all of these fitting formulas (CAMEQ2-HF, DSL i/o, NAL-NL1 and NAL-NL2) accomplish the same thing at the end of the day, which is improvement in overall speech intelligibility.
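To give a feel for how an SII-style number responds to gain, here is a heavily simplified sketch. It assumes the general form of the index (a sum over frequency bands of band importance times band audibility) and a 30 dB speech dynamic range with peaks 15 dB above the band level. The band importances, speech levels, thresholds, and gains are all invented for illustration; this is not the full standardized procedure and not the calculation variants used in the study.

```python
def band_audibility(speech_db, threshold_db):
    """Fraction (0 to 1) of the assumed 30 dB speech range that sits above threshold."""
    return min(1.0, max(0.0, (speech_db - threshold_db + 15.0) / 30.0))

def sii_sketch(importance, speech_db, threshold_db):
    """Importance-weighted sum of band audibility (simplified, illustrative only)."""
    return sum(w * band_audibility(s, t)
               for w, s, t in zip(importance, speech_db, threshold_db))

# Hypothetical four-band example; levels are in the same dB units per band.
importance = [0.2, 0.3, 0.3, 0.2]      # made-up weights that sum to 1
unaided_speech = [50, 45, 40, 35]      # made-up speech band levels
thresholds = [30, 40, 50, 60]          # made-up sloping loss
gain = [10, 15, 20, 25]                # made-up prescribed gain per band

aided_speech = [s + g for s, g in zip(unaided_speech, gain)]
unaided_index = sii_sketch(importance, unaided_speech, thresholds)
aided_index = sii_sketch(importance, aided_speech, thresholds)
print(f"unaided: {unaided_index:.2f}, aided: {aided_index:.2f}")
```

The point of the sketch is only that adding gain in the bands where speech falls below threshold raises the index, which is the sense in which the formulas above all restore audibility.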

Finally, there were just a few more things to mention on this particular study. When looking at the difference between NL2 and DSL targets for seven hearing losses, the reverse-slope, mixed and conductive hearing losses showed the biggest differences in prescribed insertion gain between the DSL and the NAL. For example, the reverse-slope hearing loss called for more gain in DSL relative to NAL-NL2. On the other hand, there was a lot less gain required from the DSL than for NAL-NL2 for conductive and mixed losses. That would tell you possibly that the DSL is not looking at an air-bone gap in their fitting formula. If you are fitting someone with a significant air-bone gap, perhaps you might stick with the NAL-NL2 formula to account for that.

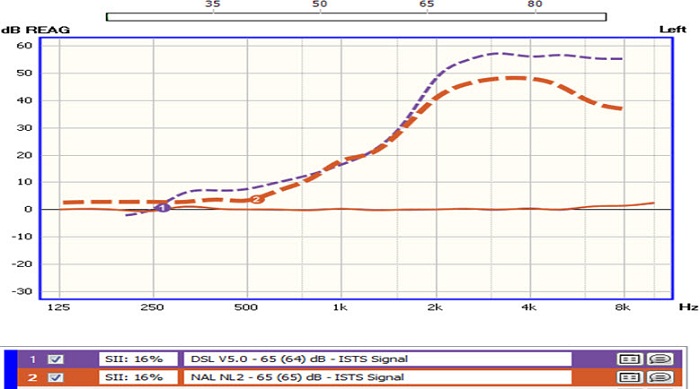

To give you an idea from a practical standpoint, I used the probe microphone equipment in the office to look at the difference in fitting targets between DSL (in purple with the number 1) and NAL-NL2 (Figure 3). You can see for a flatter moderate to moderately-severe loss in the high frequencies that the fitting targets are virtually identical. DSL calls for slightly more gain at 500 Hz through 1,000 Hz. Other than that, there is very little difference between the two for that flatter hearing loss. For a steeply sloping hearing loss, DSL will call for considerably more gain between 2,000 and 4,000 Hz. Again, one is not right or wrong. I am just showing you some of the differences that you have, and if starting point is important, this is something that you want to take into consideration.

Figure 3. Differences in fitting targets (DSL and NAL-NL2) for a flat, moderate hearing loss.

To summarize, for most hearing loss configurations, the prescribed insertion gain, the perception of aided loudness, and the speech intelligibility are very similar between NAL-NL2 and DSL i/o v5. Where you start to see some significant differences is when you have air-bone gaps or when you have a very steeply sloping hearing loss. Also, keep in mind that even though there are some differences, they both have a similar goal in mind, which is to optimize intelligibility and maintain overall comfort.

Do They Work?

Let’s look at some of the evidence that would suggest that these independently-derived fitting formulas are actually effective. Back in 2005, Mueller did an evidence-based review on the question, “Are there real-world measures from adult patients that show a preference for gain prescribed by a specific prescriptive fitting formula?” He found that there were 11 studies that met the criteria for inclusion in his meta-analysis. His findings were that gain similar to or about 3 dB less than the old NAL formula was preferred by patients. Since then, there have been other studies that would support his conclusion.

Elizabeth Convery, who is an audiologist at NAL in Australia, conducted her own meta-analysis of gain preferences over time with her colleagues (Convery, Keidser, & Dillon, 2005). They found very little support for gain adaptation in new users. They compared 98 new hearing aid users to 77 experienced hearing aid users and found that the average difference in preferred gain between the two groups was no more than about 2 dB, with new users preferring slightly less gain than experienced users. She also found that these differences in preferred gain did not change over a one-year period. If you preferred less gain a day after the fitting, chances were you preferred less gain a year after the fitting, which I think is important to consider.

Since then there have been a few other studies that have shown very similar results. Marriage et al. (2004) showed that, on average, new users wanted 2.6 dB less gain than experienced users. In another study by Smeds and colleagues (2006), there were no significant differences in gain preferences for new compared to experienced users. For those of you who are thinking that immediate acceptance requires less gain, there is not a whole lot of evidence to support that point. Two to three dB might be all that there is. Although the evidence shows the effectiveness of these NAL and DSL gain targets as good starting points, there is still a very popular notion by many that new users require less gain than experienced users. I think that is more of a pragmatic view that these patients are less likely to accept it if you match the target; it is not exactly supported by the evidence.

Differing Points of View

Even though that might be happening and may be shown in the research, let’s look at the first-fit acceptance approach. The thesis here is that new users, as I have been talking about, prefer less gain than experienced hearing aid users, and that over time these new hearing aid users will gradually increase gain after the fitting. Give them a little bit less, and over time they are going to get to where they need to be. Is that really true?

Manufacturers have developed adaptation tools to fit into this. Oticon, Beltone, and now Unitron have an automatic adaptation manager. But let’s look at the whole idea. Is there any evidence to support the notion that patients need to be started with a lot less gain relative to these independently-derived prescriptive targets? There are a few studies that might answer this question. In 2009, Keidser described an NAL study that looked at gain preferences for experienced users (Keidser, 2009). All 28 experienced hearing aid users were fitted with the NAL-NL1 target, but with 3 dB less overall gain. This was more in line with the new NAL-NL2 target. These 28 experienced users kept a diary for two weeks. They documented their listening environments, and they were reporting back what their loudness preferences were.

I wanted to point out some interesting things from this study. Fifty-seven percent of the subjects said that speech in quiet was just right when they were fit 3 dB below the NAL target, and 35% said that speech in noise was just right. There were some large differences across the listening environments, however, which is important to note. Furthermore, a third of the subjects thought speech in noise was softer than they preferred, about a third of them said it was just right, and about a third said that it was louder than they preferred. There was a lot of individual variability across the board, especially for speech in noise, as far as loudness was concerned. This gets at the notion that perhaps it is okay to start the patient off with their individual preference.

Here is another study that looked at that notion a little more carefully. This study was published in the International Journal of Audiology, the title of which was Variation in Preferred Gain with Experience for Hearing Aid Users (Keidser, O’Brien, Carter, McLelland & Yeend, 2008). In this study, they looked at gain preferences and whether they change over time with hearing aid experience. They compared a group of experienced users who had more than 3 years of hearing aid use to a group of 50 new users. All parameters were very well controlled in this particular study. All the subjects, both the experienced and the new users, were fitted with the Siemens Music Pro hearing aid, which is a multichannel wide-dynamic range compression (WDRC) product that was popular several years ago. They programmed this product for all of the participants. Program 1 was the gain requirements matching the NAL-NL1 response. Program 2 was that response minus 6 dB in the high frequencies. Program 3 was that response with a 3 dB reduction in the low frequencies. Patients were all sent out the door with three distinct programs, and the patients were asked to come back at 1 month, 4 months, and 13 months post-fitting for some testing. One of the things they looked at was aided loudness for the NAL-NL1 targets in program 1; this was obtained at all of these intervals. It was looking at changes in gain preference and overall loudness perception over time.

The summary of the study indicated that there were not a lot of changes in preference over time. For example, of the patients who were experienced users at 1 month, 42% preferred the NAL-NL1 program, 50% preferred the high-frequency cut, and 8% preferred the low-frequency cut. For new users, there was not a huge difference; 35% preferred the NAL-NL1 program, 56% preferred the high-frequency cut and 10% preferred the low-frequency cut. New users at 4 months showed very little change from their 1-month report. The 35% that preferred the NAL-NL1 went to 31% and the 56% that preferred the high-cut went to 62%. The big picture here is that there is not a whole lot of change in preferences over time.

Patients with a milder hearing loss preferred about 4 dB less than the NAL target at 1 month, 4 months, and 13 months. That preference did not really change from 1 month to 13 months. Patients who had a greater than 43 dB high-frequency average preferred less gain relative to the NAL, and there was a slight up-tick in their gain preferences over time, about 2 dB. So they started off liking the response, with the majority of them liking it 8 dB less than the NAL target in the high frequencies. Over one year’s time, there was a 2 dB change in that.

This study also looked at changes in comfortable loudness over time. Over time, the comfortable loudness level went up by only about 2 dB. So one month after the fitting, the new users, on average, rated comfortable loudness at about 55 dB, and at 4 months it went up to just over 56 dB. There was about a 1 or 2 dB change in aided comfortable loudness over time. I think the bottom line is that there is not a whole lot of change over time for these new users.

Some of the conclusions from this study by Keidser et al. (2008) were that new users prefer less overall gain than experienced hearing aid users. After 13 months, the gain adaptation was no more than 3 dB for those that had a more significant hearing loss. Change in comfortable loudness among new users after 4 months was only about 2 dB. One of their conclusions was that the NAL-NL1 over-prescribed gain by about 3 dB for average-level inputs. It is important to note that the NAL-NL2 takes that into consideration and is already prescribing less gain.

Alternatives to Prescriptive Fitting Approaches

I wanted to spend a little bit of time here towards the end talking about some alternatives to prescriptive fitting approaches. Remember one of the main points I wanted to make was that no matter what your core philosophy might be, immediate patient acceptance or long-term benefit, you are still likely to use a prescriptive fitting target as your starting point. There are actually some alternatives out there that are worth some consideration. One article by Walden et al. (2009) took a slightly different approach, as far as looking at preferences in gain. They looked at everyday listening situations that are most frequently reported by patients seeking an evaluation of their hearing. Some of those include listening to a child in quiet, conversation with the TV in the background, talking in a restaurant and conversation with someone in another room. These are the places where people report a problem with communication. What the authors did was have the patient listen and then rate the gain preferences through a simulated hearing aid. The patient was actually guiding the approach and telling the clinician what their gain preferences were. They would listen to three options and then choose on a touch screen which option they preferred. There are several examples in the study that show the participant’s audiogram and what their gain preferences were for both the left and right ears.

This is a slightly different approach that might warrant some consideration. It is not clinically available at this time as far as I know, but it shows you a way to get the patient involved in making decisions about their gain preferences. They found that preferences for amplified sounds were predictive of hearing aid candidacy, but they did not think this approach was sufficient at this point to replace traditional determination of candidacy. However, it was a nice way to demonstrate to the patients in an intuitive manner what the potential benefit might be.

That is one approach that differs slightly from prescriptive approaches. Another approach, more popular in Europe, is a patient-driven approach that is very similar to the Walden et al. (2009) study. It is something that was developed in Italy called Amplifit 3. The patient listens to sounds through the speakers, and then there is a simulated environment that they see on a screen. One study that looks at how Amplifit can be used clinically was just published this month in Trends in Amplification (Boymans & Dreschler, 2012). A large number of subjects participated in this study, and it examined audiologist-driven versus patient-driven fine tuning. They found that the audiologist-driven prescriptive approach resulted in higher gain values and better overall speech perception performance, and that the participants actually favored the audiologist-driven approach. The bottom line with this study is that if you let the patient take over, in at least one-third of the cases, speech perception is not optimized. However, if you use an audiologist-driven prescriptive approach, over time you are going to get what you need in two-thirds of the patients. Furthermore, satisfaction levels, I think for both groups, were the same. This is a study that supports the use of a prescriptive approach in the fitting process.

Regardless of your fitting philosophy, what you start with is very often different than what is needed to achieve long-term benefit. Some of the Keidser studies (2008; 2009) would show that a reduction in high-frequency gain of 6 to 8 dB leads to patient acceptance, but is nowhere near the prescriptive fitting target. It is important, regardless of your fitting approach or philosophy, to consider that because these approaches are going to result in differences in gain, the patient will have to come in for numerous visits for adjustments and tweaks. For example, if you have a strong belief that patient acceptance is very important at the beginning and you reduce the gain to accommodate that, we know from the Mueller et al. (2008) study that patients have a tendency to train around the initial gain, so you are expecting the patient to come in to have the gain increased if you ever want to optimize audibility and comfort in everyday listening situations. When we do that, however, it takes time away from our schedule and is an inconvenience to the patient.

One thing that I want people to think about is whether there is a sensible hybrid approach between the two. Is it okay to go for initial patient acceptance so that the patients keep the devices in their ears? We know that there are a fairly significant number of patients that will not wear their hearing aids if they are too loud. Then over time, could we gradually increase gain so that there is sufficient audibility to hear the missing sounds of speech? I think with today’s technology, you can really have the best of both worlds. That really comes about with an automatic adaptation manager. Instead of relying on the patient to come in for the visits, we can set the hearing aid so that it does both of these things well. It is set for immediate patient acceptance and over a period of time, the gain is increased to match the prescriptive target so that audibility and comfort can be optimized. I think the key point here is that it is done automatically.

Here is a suggested hybrid approach that I would take. When a new user comes in to my office, I would match the gain to a target using either the DSL or NAL, because we know that those are independently-derived formulas, and their goal is to optimize audibility and comfort. So start with one of those. Taking into consideration that many of these patients cannot accept that amount of gain, I would reduce the overall gain by 3 to 10 dB in some cases. In a lot of the new probe microphone equipment, there is a sound simulator that can help you determine by how much you might want to reduce the gain. Then I would set the automatic adaptation manager to transition from optimal gain for patient acceptance to optimal gain for long-term benefit over about a 6- to 12-week period of time. The reason I picked 6 to 12 weeks is because there is some data out there that would suggest that is the amount of time for the average patient to get fully acclimatized to new sounds (Gatehouse, 1992; Arlinger et al., 1996).
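To make the arithmetic of that hybrid schedule concrete, here is a minimal Python sketch. It assumes a simple linear ramp in dB from the reduced starting gain back to the prescriptive target; the function name `gain_offset_schedule` and its parameters are hypothetical and are not taken from any fitting software, and a real adaptation manager may follow a different curve.

```python
def gain_offset_schedule(initial_reduction_db, weeks):
    """Return the gain offset (dB relative to target) at the start of each week.

    Assumption: the offset rises linearly from -initial_reduction_db on the
    day of the fitting (week 0) to 0 dB (full target) after `weeks` weeks.
    """
    if weeks < 1:
        raise ValueError("weeks must be at least 1")
    if initial_reduction_db < 0:
        raise ValueError("initial_reduction_db must be non-negative")
    step = initial_reduction_db / weeks  # dB restored per week
    return [round(-initial_reduction_db + step * week, 2)
            for week in range(weeks + 1)]


# Hypothetical example: start 6 dB below target, reach target after 12 weeks.
schedule = gain_offset_schedule(initial_reduction_db=6.0, weeks=12)
for week, offset in enumerate(schedule):
    print(f"week {week:2d}: {offset:+.1f} dB re target")
```

With these example values the patient regains 0.5 dB per week, which is small enough that each individual step is unlikely to be noticed, while the full 6 dB is restored by the end of the 12-week window.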

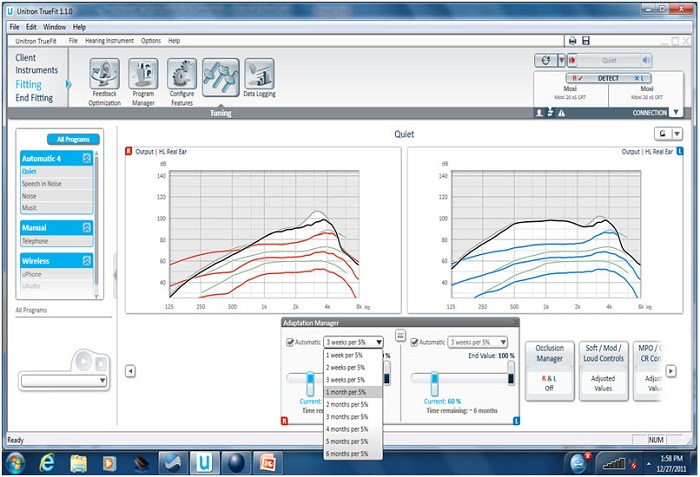

This automatic adaptation manager is a brand new feature to Unitron. Here is a screenshot from the Unitron fitting software (Figure 4). It has several parameters that you can adjust. It has how much you want the gain to be increased and over how many months, 5% per week, 5% over six months, et cetera. It also has the ending value, which you can change. In this particular case (Figure 4), the end value is 100% of the NAL target. You can start with either the DSL version 5 or the NAL-NL1 target in the Unitron software. This is telling us that the end value over several months or weeks is 100% of the target; you can start off anywhere between 50% and 95% of the target. In this particular example, we are starting off at 6 dB below what is called for, and it is showing you how much time it is going to take to get to the end value. With an automatic adaptation manager, you really can have the best of both worlds and you can bridge the gap between long-term benefit from optimized audibility and short-term immediate patient acceptance. You can do this without the patient having to come into your office for numerous adjustments. It transitions over time gradually. You set up the parameters to what you think works best, and then the patient can just put the hearing aids in and let the software automatically make the adjustments.

Figure 4. Unitron automatic adaptation manager.
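The relationship between the starting value, the weekly step, and the time needed to reach the end value can be illustrated with a short Python sketch. This is not the Unitron algorithm, which is not described here; it simply assumes that the percentage of target increases by a fixed step once per week and is capped at the end value. The function name `percent_of_target_schedule` and the example numbers are hypothetical.

```python
def percent_of_target_schedule(start_pct, end_pct, step_pct_per_week):
    """List the percent-of-target value at the start of each week.

    Assumption: the value rises by a fixed step once per week and never
    exceeds end_pct.
    """
    if not 0 < start_pct <= end_pct:
        raise ValueError("need 0 < start_pct <= end_pct")
    if step_pct_per_week <= 0:
        raise ValueError("step_pct_per_week must be positive")
    schedule = [start_pct]
    while schedule[-1] < end_pct:
        # Cap the final step so the schedule lands exactly on the end value.
        schedule.append(min(schedule[-1] + step_pct_per_week, end_pct))
    return schedule


# Hypothetical settings: start at 75% of target, add 5% per week, end at 100%.
weekly = percent_of_target_schedule(start_pct=75, end_pct=100, step_pct_per_week=5)
weeks_needed = len(weekly) - 1
print(weekly, "weeks needed:", weeks_needed)
```

A smaller weekly step stretches the same transition over a longer period, which is the trade-off the clinician is choosing when setting these parameters.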

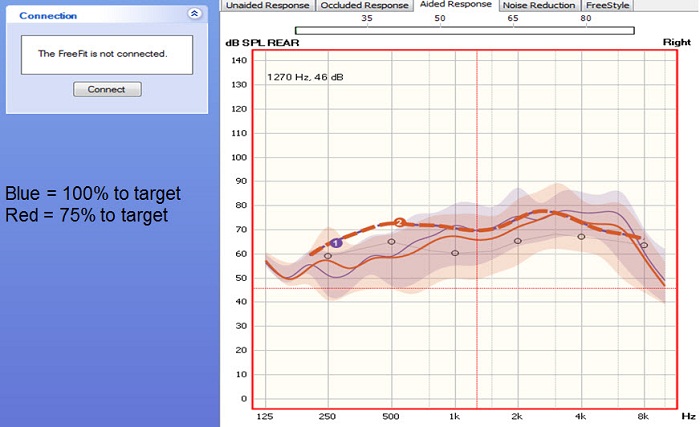

Back to my recommended approach, I would first match the target to 100% and I would confirm that you have the right frequency response across the bandwidth. We are matching first at 100%, verifying that with the probe microphone, and then dropping the response to 75% of the target. In this particular example, we first matched the target to 100% to confirm that things were right. Then we dropped the response to 75% of target, so we knew that loudness would not be an issue, and that is what the patient walked out the door with. That response increased over about a 12-week window of time to 100% of the target automatically, without the patient having to come into the office. Figure 5 is showing you the difference between matching 100% of the target and 75% of the target. This is an open fit product so you do not see much gain in the lows, but dotted lines 1 and 2 are the NAL target. The blue line is when I would match at 100% of the target to make sure things were okay in the office. Then using the automatic adaptation manager, the red line shows you what happens when you drop to 75% of target. We are providing the patient with less gain than required to optimize audibility, but we are doing that in hopes that it will lead to immediate patient acceptance. Over 12 weeks, the response will gradually and seamlessly go up to the blue line, which is 100% of the target.

Figure 5. Matching 75% (red line) versus 100% (blue line) of target in the Unitron fitting software in an open-fit hearing aid.

Conclusions

There is evidence out there to support both an immediate acceptance and a long-term benefit approach for various reasons. Both approaches rely on a prescriptive formula. In terms of the immediate acceptance approach, you would use either a proprietary formula or you would reduce the gain, which would hopefully lead to more immediate patient acceptance. Now with an automatic adaptation manager, you can really have it both ways. You can start with immediate patient acceptance, and then set it to optimize audibility over several months if you want to. The literature shows 6 to 12 weeks for most patients is probably okay (Gatehouse, 1992; Arlinger et al., 1996). With that said, I still think it is imperative that you use probe microphone measures to verify that your target is right. You can see if there are any dips or peaks in the frequency response. When you do drop below that target, you can see exactly how much below the target you are dropping. You are taking into consideration the individual geometry of the ear canal, and I think those are all very important reasons to still use probe microphone measures more than ever with automatic adaptation managers. The point is that you can have the best of both worlds with this feature, with some science behind both a long-term benefit and immediate acceptance approach.

References

Arlinger, S., Gatehouse, S., Bentler, R. A., Byrne, D., Cox, R. M., Dirks, D. D., Humes, L., et al. (1996). Report of the Eriksholm Workshop on auditory deprivation and acclimatization. Ear and Hearing, 17(3S), 87S-98S.

Boymans, M. & Dreschler, W. A. (2012). Audiologist-driven versus patient-driven fine tuning of hearing instruments. Trends in Amplification, 16(1), 49-58.

Convery, E., Keidser, G., & Dillon, H. (2005). A review and analysis: does amplification experience have an effect on preferred gain over time. Australian and New Zealand Journal of Audiology, 27(1), 18-32.

Gatehouse, S. (1992). The time course and magnitude of perceptual acclimatization to frequency responses: evidence from monaural fitting of hearing aids. Journal of the Acoustical Society of America, 92, 1258-1268.

Johnson, E. E., & Dillon, H. (2011). A comparison of gain for adults from generic hearing aid prescriptive methods: impacts on predicted loudness, frequency bandwidth, and speech intelligibility. Journal of the American Academy of Audiology, 22(7), 441-459.

Keidser, G. (2009). Many factors are involved in optimizing environmentally adaptive hearing aids. Hearing Journal, 62(1), 26,28-31.

Lybarger, S. F. (1963). Simplified fitting system for hearing aids. Canonsburg, PA: Radioear.

Marriage, J., Moore, B. C. & Alcantara, J. I. (2004). Comparison of three procedures for initial fitting of compression hearing aids. III. Inexperienced versus experienced users. International Journal of Audiology, 43, 198-210.

Mueller, H. G. (2005). Fitting hearing aids to adults using prescriptive methods: an evidence-based review of effectiveness. Journal of the American Academy of Audiology, 16(7), 448-460.

Mueller, H. G., Hornsby, B. W. Y., & Weber, J. E. (2008). Using trainable hearing aids to examine real-world preferred gain. Journal of the American Academy of Audiology, 19(10), 758-773.

Smeds, K., Keidser, G., Zakis, J., Dillon, H., Leijon, A., Grant, F., Convery, E. & Brew, C. (2006). Preferred overall loudness. II: Listening through hearing aids in field and laboratory tests. International Journal of Audiology, 45(1), 12-25.

Walden, T. C., Walden, B. E., Summers, V., & Grant, K. W. (2009). A naturalistic approach to assessing hearing aid candidacy and motivating hearing aid use. Journal of the American Academy of Audiology, 20(10), 607-620.

Cite this content as:

Taylor, B. (2013, February). Unitron professional development series: Fit to optimize audibility or patient preference? A Review of the evidence. AudiologyOnline, Article 11608. Retrieved from https://www.audiologyonline.com/