Hearing Better in Noise: How ReSound Neuromorphic Technology Uses the Natural Power of the Brain

AudiologyOnline: How is the ReSound approach to directionality in hearing aids different from any other?

Andrew Dittberner: You might expect me to talk about technology only here, but really what makes our approach different is that we have looked for opportunities to combine what the person wearing the hearing aids and their brain do well with what technology does well. The brain could be thought of as a 6 petaflop supercomputer that can process sound very efficiently. Combining the strengths of our artificial technology with the biological processes of the wearer is what creates our solution. We call this combination of biology and technology “nonintrusive neuromorphic technologies,” a first in the hearing aid industry.

AudiologyOnline: That sounds very technical! Can you provide a little more detail?

Andrew Dittberner: The point is for the technology to be as useful as possible in the everyday lives of hearing aid users, not just in an artificial lab situation. The brain’s ability to sort through and focus on wanted sounds while ignoring others, and to shift attention effortlessly and at will among different sounds in the environment, is simply unmatched. This is true even for people with hearing loss. So, to illustrate this new type of neuromorphic technology further: we take sound input from a person’s two ears and leverage what the brain does naturally. The artificial side of this system (the hearing aid) is designed to enhance the hearing aid wearer’s ability to do this, not to take over or interfere with how they want to listen. Both biological and artificial processes are used to create an enhanced listening experience. An important implication of this is that we don’t want to use the technology in a way that limits the user’s access to the sounds around them. I like to compare this approach to how a powered exoskeleton works. These are wearable robotic machines that synchronize with the wearer’s movement, providing added strength and ergonomic support. The exoskeleton doesn’t take over but rather enhances the wearer’s intended natural motion. So you have the combined power of person and machine.

AudiologyOnline: How did ReSound come up with this idea?

Andrew Dittberner: The key component of our strategy is that it is binaural. This is because our brains naturally compare the signals delivered by the two ears to fulfill our listening goals in any given situation. We saw an opportunity to rethink how directionality, a known technology that is proven to improve signal-to-noise ratio in given conditions, can be applied in a novel way to support the hearing aid wearer’s natural ways of using their hearing. Prior to this, hearing aid manufacturers clearly thought only about the individual device and how to make the directional response stronger in that device from a technical standpoint. Our approach considers what happens when the sound from two hearing aids combines with the user’s auditory processing capabilities.

AudiologyOnline: How long has ReSound been following this binaural strategy for directionality?

Andrew Dittberner: This work was originally conceived in the early 2000s when I was working on my dissertation at the University of Iowa with Ruth Bentler and other great colleagues. This research direction went against conventional wisdom regarding directional technology, but I was given the freedom to explore the idea. When I moved to GN Hearing, we conceptualized a new binaural noise management system with support from both internal and external researchers. Todd Ricketts, from Vanderbilt University, and our team of research engineers from Eindhoven Technical University and GN Advanced Science provided invaluable support in challenging and developing this completely new concept. We introduced our first iteration of this work in 2008 and haven’t looked back. Every few years since, we have introduced new products that have advanced our ability to support the natural ways people listen in both quiet and noisy real-life situations. In the last few years, we have seen other hearing aid manufacturers begin to talk about the importance of being able to hear in noise without being totally cut off from your surroundings. This tells us we have been on the right path all along.

AudiologyOnline: Can you explain how the ReSound directional strategy works?

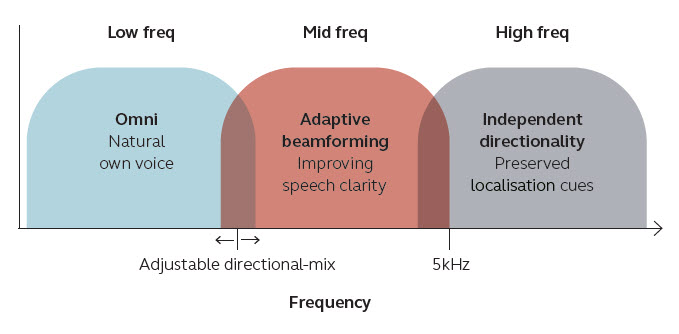

Andrew Dittberner: Yes, regardless of what product generation we are talking about, the way we implemented directional technology aims to support native listening strategies that people naturally use depending on the complexity of the listening situation and what their listening goals are in the situation. In relatively uncomplicated environments, we rely on spatial hearing cues to construct an auditory image of the environment including the type and position of the different sounds in that environment. Natural sound quality is also very important in this kind of situation, so we are careful to make sure we provide that with the hearing aids.

As the environment becomes more complex with additional competing noise sources, we begin to shift over to a better ear strategy where we rely on the ear that has the best representation of what we are interested in listening to. Here it is important that the hearing aid user has both a boosted SNR for sound in front and audibility for sound all around. The hearing aids can’t know what the signal of interest is or how it will change dynamically; we need to provide the hearing aid user with the appropriate information so their brain can make these decisions. The way we support this listening strategy is to provide directionality on one ear and omnidirectionality on the other. We have historically been challenged on this use of an asymmetric directional response, but in fact this is how we hear the world naturally. Further, peer-reviewed studies confirm our theory that the brain uses the ear with the best SNR for what the person wants to hear. Some people may be familiar with the practice of prescribing different strength contact lenses for each eye: one for distance vision and one for close-up vision. While it is a different modality, it is another example of how the brain integrates information and relies on the input which best serves the individual’s intent in a given situation.

AudiologyOnline: But isn’t it the case that activating directional microphones on both ears adds to the improved SNR?

Andrew Dittberner: There are situations with diffuse noise where a hearing aid user is facing others who are talking. If our system cannot detect speech from the rear hemifield, we go ahead and apply the strongest directionality in both hearing aids to maximize directional benefit in this special case. Note that datalogging shows that this typically happens only 10% or less of the total use time, because most often noisy speech environments have conversations going on all around that the hearing aid user might want to be aware of. Therefore, we also make strong bilateral directionality available as a user-selectable feature for when they know that they want to focus on the conversation in front of them regardless of what else is going on. With today’s smartphone-connected hearing aids and our ReSound Smart 3D app, it’s easy and empowering for users to access special features like this. Our data show that sound-modifying features such as strong directionality are the most popular app features among users.
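Editor's note: the listening modes described in the last few answers can be read as a small decision procedure. The Python sketch below is only an illustration of that reading, not ReSound's actual algorithm. Every name in it (`Scene`, `MicMode`, `choose_mode`) is hypothetical, and the 60 dB noise threshold is an invented placeholder standing in for whatever environmental classification the hearing aids really perform.

```python
from dataclasses import dataclass
from enum import Enum


class MicMode(Enum):
    """Microphone modes named after the strategies described in the interview."""
    SPATIAL_CUES = "spatial cues and natural sound quality on both ears"
    ASYMMETRIC = "directional on one ear, omnidirectional on the other"
    BILATERAL_DIRECTIONAL = "strongest directionality on both ears"


@dataclass
class Scene:
    """Hypothetical summary of what the two hearing aids have detected."""
    noise_level_db: float           # estimated background noise level (assumed input)
    speech_in_front: bool           # speech detected in the front hemifield
    speech_from_rear: bool          # speech detected in the rear hemifield
    user_front_focus: bool = False  # user selected front focus in the app


# Invented placeholder: the interview gives no numeric threshold.
COMPLEX_SCENE_NOISE_DB = 60.0


def choose_mode(scene: Scene) -> MicMode:
    """Pick a microphone mode following the order of priorities in the text."""
    # The user can always ask for strong bilateral directionality.
    if scene.user_front_focus:
        return MicMode.BILATERAL_DIRECTIONAL
    # Relatively uncomplicated environment: prioritize spatial hearing cues.
    if scene.noise_level_db < COMPLEX_SCENE_NOISE_DB:
        return MicMode.SPATIAL_CUES
    # Noisy, talker in front, nothing detected behind: the special case.
    if scene.speech_in_front and not scene.speech_from_rear:
        return MicMode.BILATERAL_DIRECTIONAL
    # Otherwise keep one ear open to the surroundings.
    return MicMode.ASYMMETRIC


if __name__ == "__main__":
    cafe = Scene(noise_level_db=70.0, speech_in_front=True, speech_from_rear=True)
    print(choose_mode(cafe).value)
```

In this sketch the asymmetric mode is the fallback for noisy scenes, which matches the statement that bilateral strong directionality is active for only about 10% or less of total use time.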

AudiologyOnline: You have talked a lot about strategy, but what technologies are necessary to carry out the strategy?

Andrew Dittberner: Our default automatic program is called “360 All-Around”. The specific technologies we are using include ear-to-ear data communication via our 2.4 GHz radio to analyze the environment and determine the best listening mode to activate, as well as to correct errors inherently introduced by compression, which tend to distort certain binaural spatial cues; the M&RIE receiver, which stands for “Microphone and Receiver-in-the-Ear”, to preserve personal, ear-specific spectral cues for spatial hearing; and a magnetic induction radio that provides ear-to-ear audio streaming. We use the audio streaming for two purposes. One is to enable binaural beamforming to provide the best possible SNR improvement in noisier situations. The other is to stream audio from the directional side when asymmetric directionality is applied. This allows the user complete access to sound all around and access to an improved SNR simultaneously.

AudiologyOnline: Is there anything special about the ReSound binaural beamforming?

Andrew Dittberner: Yes, instead of just creating two independent directional beams, one for each ear, we have formulated a clever software solution in our new technology to adjust both ears synchronously, especially when we form differently shaped beams on either side of the head to maximize head shadow effects and other biological advantages. This bilateral synchronization provides the right beam shape on each side of the head to deliver all the important information we need to send to the brain, and the brain can then stitch this information together to provide a very natural and enhanced listening experience (i.e. neuromorphic technology). This listening experience is directed by the user, who decides what to focus on or what not to focus on. Hence, we provide a solution in which we lose no information about the person’s surrounding soundscape.

Figure 1. The unique 3-band directional system in the ReSound OMNIA improves SNR while still preserving natural sound quality and spatial hearing cues.

AudiologyOnline: Does artificial intelligence come into play in this solution?

Andrew Dittberner: Absolutely, the decision making that must determine what is the signal of interest and what is noise is obviously critical. Here, we formulated an augmented intelligent system in that our hearing aid makes low level decisions to automate certain events. However, high level decisions are then left to the human brain. We strongly believe that this approach of combining artificial intelligence with biological intelligence (i.e. augmented intelligence) is very powerful and is a strong part of what defines ReSound OMNIA and our future product lines.

AudiologyOnline: How does the performance of the technology in ReSound OMNIA compare to previous generations?

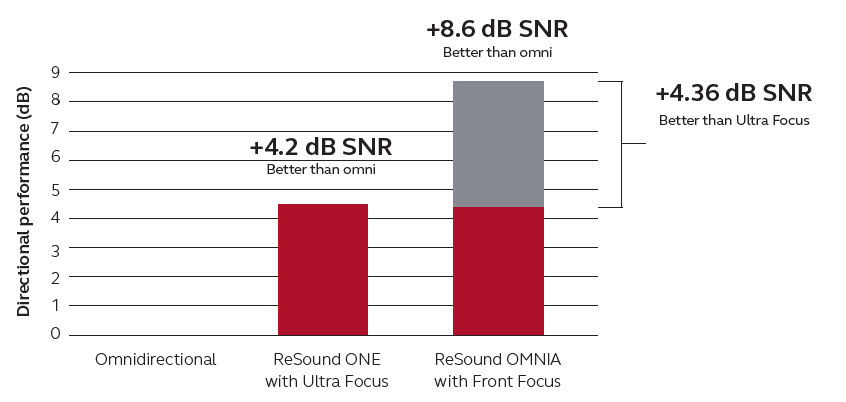

Andrew Dittberner: Each successive generation has offered improvements either in sound quality, spatial hearing, off-axis audibility or better support of native listening strategies via microphone mode control. With ReSound ONE we introduced binaural beamforming that offered improved directional benefit but focused on preserving the spatial cues of sound that are important for the human brain to perform natural noise management functions. Most recently, we introduced a major advancement in this technology with ReSound OMNIA. Briefly, we improved the resolution of the beamforming and bilaterally synchronized it such that it better accounts for the acoustic effects of the hearing aids being worn on the ear and head and mimics closely how we process sounds with our binaural hearing. The result is further improvement in the SNR.

AudiologyOnline: What is the magnitude of the improvement in ReSound OMNIA?

Andrew Dittberner: Traditional and enhanced directional systems that function optimally typically provide about 4 to 4.5 dB DI (Directivity Index). This can be translated into perceived benefit using a rule of thumb from the literature: for every 1 dB of directional SNR improvement, you can expect a 5-10% intelligibility improvement in conditions where speech is 50% intelligible. When we switched to binaural beamforming using microphones on both sides of the head, this opened up the opportunity to use six microphones instead of just two. This, in combination with our strategy to integrate certain known aspects of the binaural auditory system, allowed us to go from a theoretical best of 4.2 dB DI for a first order directional system (two microphones) on the head to a theoretical best of 8.6 dB DI, which is an astounding improvement. Although this number is impressive, let’s not forget that we are not eliminating sound that exists around our users; we are smartly using the binaural auditory system to provide the best SNR improvement for any sound they look towards while still providing access to sounds that occur all around them, so they do not get detached from their natural soundscape.
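Editor's note: the figures quoted in this answer can be combined in a quick back-of-the-envelope calculation. The Python sketch below is only a rough illustration. It assumes that the full gain in theoretical DI shows up as SNR improvement and that the rule of thumb can be applied linearly across that gain, neither of which the interview claims. The function names are hypothetical, and the 50-point cap simply reflects that speech starting at 50% intelligible cannot improve by more than 50 percentage points.

```python
# DI values and the rule of thumb are taken from the interview text.
FIRST_ORDER_DI_DB = 4.2            # theoretical best, two microphones on the head
BINAURAL_DI_DB = 8.6               # theoretical best, binaural beamforming
POINTS_PER_DB = (5.0, 10.0)        # intelligibility gain per 1 dB SNR, low/high


def di_gain_db(old_di_db: float, new_di_db: float) -> float:
    """Difference between two directivity indices, in dB."""
    return new_di_db - old_di_db


def power_ratio(gain_db: float) -> float:
    """Convert a gain in dB to a linear power ratio."""
    return 10.0 ** (gain_db / 10.0)


def intelligibility_gain_points(snr_gain_db: float,
                                per_db: tuple = POINTS_PER_DB,
                                headroom: float = 50.0) -> tuple:
    """Apply the rule of thumb linearly, capped at the available headroom.

    Assumption: the listener starts at 50% intelligibility, so at most
    50 percentage points of improvement are possible.
    """
    low, high = per_db
    return (min(headroom, low * snr_gain_db),
            min(headroom, high * snr_gain_db))


if __name__ == "__main__":
    gain = di_gain_db(FIRST_ORDER_DI_DB, BINAURAL_DI_DB)
    low, high = intelligibility_gain_points(gain)
    print(f"DI gain: {gain:.1f} dB (power ratio {power_ratio(gain):.2f})")
    print(f"Rule-of-thumb benefit: {low:.0f} to {high:.0f} percentage points")
```

Under these assumptions the 4.4 dB difference in theoretical DI corresponds to a power ratio of about 2.75 and a rule-of-thumb benefit of roughly 22 to 44 percentage points. Real-world benefit depends on the acoustic environment and will generally differ from these theoretical values.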

Figure 2. Directional benefit is vastly improved relative to both an omnidirectional response (reference condition in the graph) and the previous technology in ReSound ONE.

To learn more, please visit ReSound or check out these courses on AudiologyOnline: How to Navigate Naturally in a Noisy World and Introducing ReSound OMNIA.

References

Jespersen, C. T., Kirkwood, B. C., & Groth, J. (2021). Increasing the effectiveness of hearing aid directional microphones. Seminars in Hearing, 42(3), 224-236. Thieme Medical Publishers, Inc. Available at: https://www.thieme-connect.com/products/ejournals/html/10.1055/s-0041-1735131