Abstract

Studies on the effect of hearing aid compression time constants and compression ratios indicate that while fast regulation times may increase objectively measured speech intelligibility scores, slow regulation times seem to have a positive effect on subjectively perceived sound quality. However, the majority of studies have been conducted using hearing aid platforms with only one to four compression channels. Today, hearing aids are available with significantly more compression channels. The question is therefore whether the results found with wide channel bandwidths (i.e., fewer compression channels) can be generalized to state-of-the-art hearing aids with narrower channel bandwidths (i.e., more compression channels).

The present study investigated the subjectively rated sound quality of 111 pre-processed sound recordings differing in four parameters: compression ratio, compression speed, signal-to-noise ratio, and channel bandwidth. Ten normal-hearing persons served as listeners. The results indicate that narrowing the channel bandwidth has an increasingly negative impact on perceived sound quality as compression ratio and compression speed increase. From a sound quality preservation perspective, this suggests that the use of short compression time constants and high compression ratios should be kept to a minimum in modern multi-channel compression hearing aids.

Introduction

The effect of hearing aid compression parameters, such as compression time constants and compression ratio, on speech perception and the subjectively perceived sound quality of hearing aids has been investigated in several studies (e.g., Gatehouse, Naylor & Elberling, 2006; Hansen, 2002; Moore, Stainsby, Alcántara, & Kühnel, 2004; Neuman, Bakke, Mackersie, Hellman, & Levitt, 1998). However, conflicting results have been obtained, and at present there is no consensus as to what constitutes the "best" compression system. A literature review by Gatehouse et al. (2006), for example, reveals that some researchers (e.g., Moore et al., 2004) have found no effect of time constants, others have found fast-acting compression to be superior to slow-acting compression (e.g., Nabelek & Robinette, 1977), and yet others have found slow-acting compression to be superior to fast-acting compression (e.g., Hansen, 2002).

One possible explanation for why such different results have been found is that the studies were designed to investigate different outcomes. Some of the studies in the literature review by Gatehouse et al. (2006) focused on the effect of time constants on speech intelligibility (e.g., Nabelek & Robinette, 1977; Moore et al., 2004), while others were designed to examine the effect of time constants on subjectively perceived sound quality (e.g., Neuman et al., 1998; Hansen, 2002). As pointed out by Moore et al. (2004), fast-acting compression may increase both speech intelligibility and annoyance. More specifically, when both the target speech signal and a competing background noise have spectral and temporal dips, amplifying the soft portions of the target speech that fall in the temporal/spectral dips of the background noise may improve the listener's speech intelligibility. However, soft background noise will also be amplified during brief pauses in the target speech signal, which the listener may find annoying. Both outcomes are thus possible within the same study.

It could be argued that the compression setting determined to provide the highest possible objectively measured speech intelligibility should be the one implemented in commercially available hearing aids, since the primary motivation for wearing hearing aids is to improve speech intelligibility for most hearing aid users. However, research (e.g., Hickson, Clutterbuck, & Kahn, 2010; Kochkin, 2000) has shown that sound quality has a significant impact on hearing aid users' satisfaction with their devices, and consequently on their inclination to wear them. For example, Kochkin (2000) identified the 32 most common reasons why hearing aid users can become so dissatisfied with their hearing aids that the hearing aids end up in a drawer (i.e. not used). Poor sound quality figured among the top ten reasons. So although few would dispute the importance of optimizing speech intelligibility, it is clear that speech intelligibility is not the only aspect to warrant investigation. The subjectively perceived sound quality of the hearing aid may make an equally important contribution towards improving the outcomes of hearing aid use.

While several studies have investigated the effect of increasing the compression ratio and shortening the compression time constants on subjectively perceived sound quality (e.g., Gatehouse et al., 2006; Hansen, 2002; Schmidt, 2006; Neuman et al., 1998), the parameter of compression channel bandwidth (or number of compression channels) has not received much attention in the literature. An examination of the existing literature reveals that most of the experiments that examined subjectively perceived sound quality used platforms with only one to four compression channels. It might therefore be problematic to extend these results to the modern multi-channel compression hearing aids of today. Hansen (2002) investigated subjectively perceived sound quality as a function of compression time constants and compression threshold using a 15-channel compression hearing aid. He found a strong preference for long time constants and low compression thresholds. Keidser and colleagues (2007) investigated the compression ratios preferred by listeners with severe hearing loss in a fast-acting multi-channel device. The listeners generally preferred lower compression ratios than are typically prescribed for that degree of hearing loss. However, none of these investigations examined the effect of varying the number of compression channels. As far as can be ascertained, no investigation of the effect of varying the number of compression channels on subjectively perceived sound quality has yet been conducted.

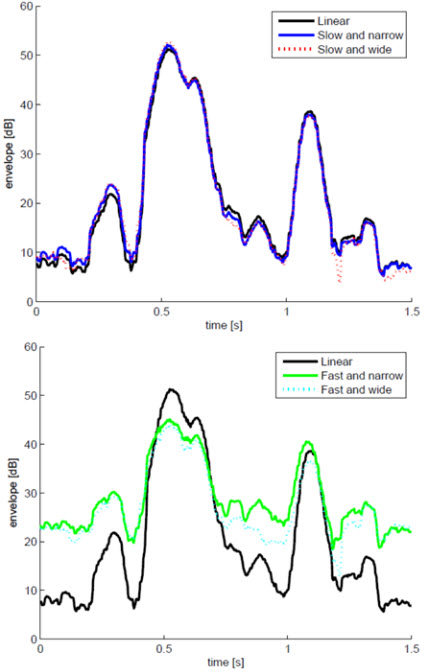

Short compression time constants have commonly been argued to increase the audibility of weak parts of the signal (e.g., Dillon, 2001; Moore et al., 2004), potentially leading to better speech intelligibility. However, as illustrated in Figures 1a-b, fast-acting compression functions very differently in wide and narrow compression channels. Figures 1a-b show a simulation of the effect of combining wide and narrow channel bandwidths with slow and fast regulation times when the hearing aid output is heard through a 1000 Hz auditory filter (simulated by means of a gammatone filter; Patterson, Allerhand, & Giguère, 1995). Figure 1a shows the output generated with slow-acting compression (attack time/release time, AT/RT, of 700/7000 ms; compression ratio, CR, of 2) combined with wide (3-channel) and narrow (15-channel) frequency bandwidths. Figure 1b shows the output when fast-acting compression (AT/RT of 10/105 ms; CR of 2) is used with the same wide (3-channel) and narrow (15-channel) frequency bandwidths. The output obtained with slow-acting compression is very similar to that of linear processing, regardless of the bandwidth of the filters used in the hearing aid (Figure 1a). By comparison, fast-acting compression reduces the fluctuations of the envelope relative to the linearly processed signal, resulting in lower peaks and louder dips (Figure 1b). Moreover, in this example, the difference in filter bandwidths results in a difference in the depth of the dip in the mid-section and in the height of the second peak when fast-acting compression is used (Figure 1b).

Figures 1a-b. Output envelope of a simulated 1000 Hz auditory filter generated from the Danish sentence "Nu kan en ny jagt begynde" (translation: "Now a new hunt can begin") using slow-acting compression with wide (3-channel) and narrow (15-channel) frequency bandwidths (Figure 1a), and fast-acting compression with wide and narrow frequency bandwidths (Figure 1b). Linear processing is shown in black.
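Figures 1a-b view the hearing aid output through a simulated 1000 Hz auditory filter. The article does not give the filter's parameters, so the sketch below assumes the commonly used 4th-order gammatone with a bandwidth parameter of 1.019 × ERB and the Glasberg and Moore ERB formula. The sampling rate, duration, and function names are illustrative choices, not details of the original simulation.

```python
import math


def erb_hz(fc_hz: float) -> float:
    """Equivalent rectangular bandwidth (Hz) of the auditory filter centred at fc_hz,
    using the ERB formula 24.7 * (4.37 * fc / 1000 + 1)."""
    return 24.7 * (4.37 * fc_hz / 1000.0 + 1.0)


def gammatone_ir(fc_hz: float, fs_hz: float, duration_s: float = 0.025,
                 order: int = 4) -> list[float]:
    """Sampled gammatone impulse response
    t**(order-1) * exp(-2*pi*b*t) * cos(2*pi*fc*t), with b = 1.019 * ERB(fc),
    scaled so that its largest absolute sample equals 1."""
    b = 1.019 * erb_hz(fc_hz)
    n_samples = int(duration_s * fs_hz)
    ir = [
        (i / fs_hz) ** (order - 1)
        * math.exp(-2.0 * math.pi * b * i / fs_hz)
        * math.cos(2.0 * math.pi * fc_hz * i / fs_hz)
        for i in range(n_samples)
    ]
    peak = max(abs(x) for x in ir)
    return [x / peak for x in ir]


def envelope_peak_s(fc_hz: float, order: int = 4) -> float:
    """Time (s) at which the envelope t**(order-1) * exp(-2*pi*b*t) reaches its maximum."""
    return (order - 1) / (2.0 * math.pi * 1.019 * erb_hz(fc_hz))


ir = gammatone_ir(1000.0, 16000.0)
print(round(erb_hz(1000.0), 1), len(ir), round(1000 * envelope_peak_s(1000.0), 2))
```

At 1000 Hz the ERB is about 132.6 Hz and the envelope of the impulse response peaks roughly 3.5 ms after onset. Convolving a processed signal with this response and taking its envelope would give a picture comparable in spirit to Figures 1a-b, not a reproduction of them.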

The simulation above illustrates that fast-acting compression in narrow channels leads to less contrast between peaks and valleys in the frequency spectrum and thereby more smearing in the temporal and spectral domains (Plomp, 1988). Slow-acting compression, on the other hand, functions in the same way in both wide and narrow channels, and has the advantage of not distorting the signal in the temporal domain. Moreover, because the gain is adjusted only slowly over time, the spectral contrast in the signal is also better preserved. However, weak parts of the signal following stronger parts may not be amplified to the appropriate level, or, in the worst case, may remain completely inaudible, because the system adapts too slowly to rapid changes in level.
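The trade-off described above can be made concrete with a single-channel level tracker. This is a minimal sketch, not the compressor used in the study: it assumes one channel, treats AT and RT as one-pole time constants (hearing aid standards define attack and release times as settling times after a level step, so the numbers are not directly interchangeable), applies no compression threshold, and anchors 0 dB gain at a hypothetical reference level of 65 dB. The input is a hypothetical 200 ms segment at 80 dB followed by 200 ms at 50 dB.

```python
import math


def smoothing_coeff(tau_ms: float, frame_ms: float) -> float:
    """One-pole smoothing coefficient for time constant tau_ms at a frame period of frame_ms."""
    return math.exp(-frame_ms / tau_ms)


def compressor_gain_db(levels_db, cr, attack_ms, release_ms, frame_ms=1.0, ref_db=65.0):
    """Per-frame gain (dB) of a single-channel compressor without a compression threshold.

    The level estimate rises with the attack constant and falls with the release constant.
    The static rule gain = (1/cr - 1) * (estimate - ref_db) gives 0 dB gain at ref_db
    (ref_db is a hypothetical anchor, not a value from the study).
    """
    a_att = smoothing_coeff(attack_ms, frame_ms)
    a_rel = smoothing_coeff(release_ms, frame_ms)
    estimate = levels_db[0]  # start fully adapted to the first frame
    gains = []
    for level in levels_db:
        a = a_att if level > estimate else a_rel
        estimate = a * estimate + (1.0 - a) * level
        gains.append((1.0 / cr - 1.0) * (estimate - ref_db))
    return gains


def loud_soft_contrast_db(attack_ms, release_ms, cr=2.0):
    """Output level difference between the end of a 200 ms 80 dB segment and the end of
    the following 200 ms 50 dB segment (1 ms frames; the input difference is 30 dB)."""
    levels = [80.0] * 200 + [50.0] * 200
    gains = compressor_gain_db(levels, cr, attack_ms, release_ms)
    out = [lvl + g for lvl, g in zip(levels, gains)]
    return out[199] - out[-1]


for label, (at, rt) in {"slow 700/7000": (700, 7000), "fast 10/105": (10, 105)}.items():
    print(label, round(loud_soft_contrast_db(at, rt), 1))
```

With CR = 2, the slow setting keeps almost all of the 30 dB input contrast (about 29.6 dB), much like linear processing. However, the soft segment then receives roughly 14.6 dB less gain than it would once fully adapted. The fast setting shrinks the contrast to about 17.2 dB, approaching the 15 dB that an idealized instantaneous compressor would produce (30 dB / CR).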

As the example in Figure 1 indicates, when fast regulation times are used, the number of channels in the hearing aid may have a significant impact on the hearing aid processing. However, although illuminating, using an auditory filter to simulate the effects of different hearing aid processing strategies only indicates where differences in the percept of the sound may be found, not whether these differences will have a positive or negative impact on perceived sound quality. This must be determined empirically in listening experiments.

The present study therefore investigates the influence of channel bandwidth in combination with other relevant parameters, including compression time constants, compression ratio, and signal-to-noise ratio, on subjectively evaluated sound quality parameters. Previous studies by Hansen (2002) and Schmidt (2006) revealed a clear preference for low compression ratios among both normal-hearing and hearing-impaired listeners. Furthermore, a study by Neuman et al. (1998) found a compression ratio × regulation times interaction which suggested that the negative impact of increasing the compression ratio is exacerbated by short compression time constants. The expectation is therefore that perceived sound quality will deteriorate as a function of decreasing channel bandwidth and increasing compression ratio when fast regulation times are used.

Method

Ten normal-hearing native speakers of Danish (5 female, 5 male; mean age 42 years; mean hearing thresholds, averaged across left and right ears, of 7, 5, 6, and 4 dB HL at 0.5, 1, 2, and 4 kHz, respectively) rated the perceived sound quality of 111 pre-processed sound recordings differing in the four parameters of compression ratio, compression speed, signal-to-noise ratio, and channel bandwidth.

The stimuli were recordings of a male talker in dinner party noise at three signal-to-noise ratios (SNRs): +25 dB, +15 dB, and +5 dB. Each stimulus had a duration of 27 seconds. Favorable SNRs were used because the aim was to investigate sound quality when the compression system primarily follows the speech signal. At poor SNRs, the high noise levels dominate the speech signal most of the time, so the compression system fluctuates with the noise instead.
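The article does not say how the three SNRs were set; the recordings may have been made acoustically. Assuming instead that speech and noise were mixed digitally, with SNR defined from long-term RMS levels (other conventions, such as using speech-active frames only, give different noise gains), the mixing step can be sketched as follows. The tone and seeded random noise are hypothetical stand-ins for the speech and dinner party noise.

```python
import math
import random


def rms(x):
    """Root-mean-square value of a sequence."""
    return math.sqrt(sum(v * v for v in x) / len(x))


def mix_at_snr(speech, noise, snr_db):
    """Scale noise so that 20*log10(rms(speech) / rms(scaled noise)) equals snr_db,
    then add it to speech. Returns (mixture, noise_gain)."""
    noise_gain = rms(speech) / (rms(noise) * 10.0 ** (snr_db / 20.0))
    mixture = [s + noise_gain * n for s, n in zip(speech, noise)]
    return mixture, noise_gain


# Hypothetical stand-ins: a 1 kHz tone for speech and seeded Gaussian noise, 1 s at 16 kHz.
fs = 16000
speech = [0.1 * math.sin(2 * math.pi * 1000 * i / fs) for i in range(fs)]
rng = random.Random(1)
noise = [rng.gauss(0.0, 1.0) for _ in range(fs)]

for snr in (25.0, 15.0, 5.0):
    _, g = mix_at_snr(speech, noise, snr)
    achieved = 20 * math.log10(rms(speech) / rms([g * n for n in noise]))
    print(snr, round(achieved, 6))
```

Each 10 dB step down in SNR raises the noise gain by a factor of about 3.16 (10^(10/20)), so the +5 dB condition carries 20 dB more noise than the +25 dB condition.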

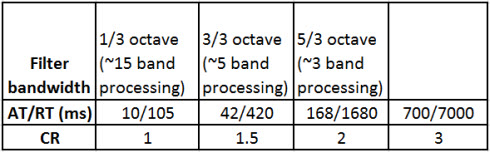

The recordings were processed through a compressor in which the different parameters could be varied. Three filter bandwidths, four compression ratios, and four attack/release times were investigated, all within the range of commercially available hearing aids. These are summarized in Table 1. No compression threshold (CRT) was used, to ensure that the entire dynamic range of the signals was compressed.

Table 1. Values of the filter bandwidths, attack/release times, and compression ratios investigated in the study.
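The total of 111 stimuli can be cross-checked against the design. The count works out under an assumption the text does not state explicitly: that the four compression ratios include linear (1:1) amplification, and that the linear condition was generated once per SNR because it does not depend on bandwidth or time constants. That gives (3 bandwidths × 4 AT/RT × 3 compression ratios + 1 linear) × 3 SNRs = 37 × 3 = 111. In the sketch, `None` marks a value that appears only in Table 1 and is not reproduced here.

```python
from itertools import product

# Values stated in the text; None marks a value given only in Table 1 (not reproduced here).
SNRS_DB = [25, 15, 5]
BANDWIDTHS_OCT = ["1/3", None, "5/3"]      # narrowest and widest are named in the Results
AT_RT_MS = [(700, 7000), (168, 1680), (42, 420), (10, 105)]
COMPRESSION_RATIOS = [1.5, None, 3.0]      # lowest and highest are named in the Results

# Assumption: linear amplification is a single reference condition per SNR.
conditions = [(snr, "linear") for snr in SNRS_DB]
conditions += [
    (snr, bw, at_rt, cr)
    for snr, bw, at_rt, cr in product(SNRS_DB, BANDWIDTHS_OCT, AT_RT_MS, COMPRESSION_RATIOS)
]
print(len(conditions))
```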

The compressed stimuli were equalized to the same 1/3-octave RMS spectrum as the input signals (62 dB SPL overall) and amplified for each listener according to the NAL-RP linear prescription rationale, based on the listener's measured thresholds. Stimuli were presented binaurally over headphones in a sound-treated room. Experimental sessions typically lasted 30 minutes.
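The article does not describe how the equalization was implemented. A minimal sketch, assuming a fixed gain per 1/3-octave band computed from long-term RMS band levels: the overall level is the energy sum of the band levels, the reference spectrum is scaled to 62 dB SPL overall, and each processed band is shifted back to the reference. The band levels below are hypothetical, and the subsequent per-listener NAL-RP amplification is not shown.

```python
import math


def overall_level_db(band_levels_db):
    """Overall level (dB) from band levels, summed on an energy basis."""
    return 10.0 * math.log10(sum(10.0 ** (lvl / 10.0) for lvl in band_levels_db))


def equalizing_gains_db(processed_db, reference_db):
    """Per-band gain (dB) that brings a processed long-term spectrum back to the reference."""
    return [ref - proc for proc, ref in zip(processed_db, reference_db)]


def scale_to_overall(band_levels_db, target_overall_db=62.0):
    """Shift all bands by one offset so that their energetic sum equals target_overall_db."""
    offset = target_overall_db - overall_level_db(band_levels_db)
    return [lvl + offset for lvl in band_levels_db]


# Hypothetical long-term 1/3-octave levels (dB SPL) for a few bands, not data from the study.
reference = scale_to_overall([55.0, 58.0, 56.0, 52.0, 47.0])
processed = [57.5, 59.0, 55.0, 50.0, 49.0]
gains = equalizing_gains_db(processed, reference)
equalized = [p + g for p, g in zip(processed, gains)]
print(round(overall_level_db(reference), 3), round(overall_level_db(equalized), 3))
```

Because compression alters the long-term spectrum differently for each parameter combination, equalizing in this way keeps spectral balance and overall level from confounding the ratings, which is consistent with the loudness control results reported below.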

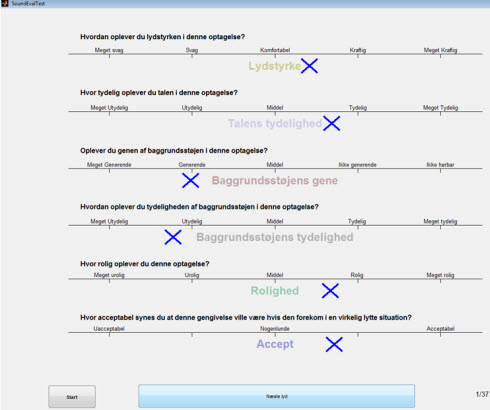

Sound quality was measured by means of an adaptation of the categorical scales used by Neuman et al. (1998) and Schmidt (2006). The choice of scale was motivated by the wish to obtain an indication of the intrinsic acceptability of each individual sound stimulus as perceived by the users. This would not have been possible with an A-B type test, in which the sound quality of one stimulus is evaluated as 'better' or 'worse' relative to that of another.

Six sound quality-related parameters were evaluated: loudness, speech clarity, annoyance from background noise, clarity of background noise, calmness, and overall acceptance.

Danish equivalents of the following response categories were used: Loudness: very soft, soft, comfortable, loud, very loud. Speech clarity: very unclear, unclear, average, clear, very clear. Annoyance of background noise: very annoying, annoying, average, not annoying, not audible. Clarity of background noise: very unclear, unclear, average, clear, very clear. Calmness of the recordings: very uneasy, uneasy, average, calm, very calm. Overall acceptability: unacceptable, tolerable, acceptable.

Figure 2. A sample of the Danish response form used for rating the stimuli on the six parameters of loudness, speech clarity, annoyance from background noise, clarity of background noise, calmness, and overall acceptability.

Results

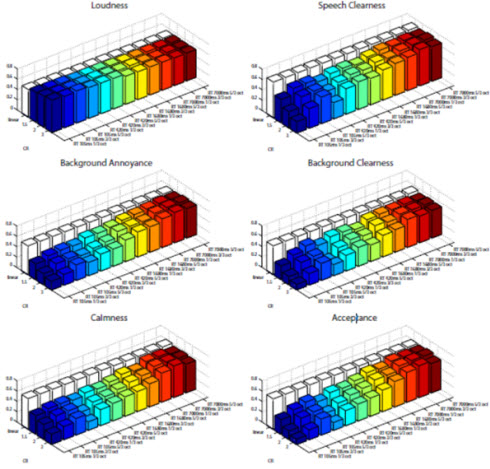

To obtain an overall indication of the impact of the various signal processing implementations (differing in compression ratio, time constants, and frequency channel bandwidth) on the six sound quality-related parameters, a mean score was calculated across all stimuli for each implementation and sound quality parameter. The results are displayed as bar plots in Figure 3, below. Scores are plotted as numbers between 0 and 1, obtained by mapping the response categories linearly onto this range (e.g., for overall acceptability, 0.1 = unacceptable, 0.5 = tolerable, 0.9 = acceptable).
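The text gives the 0-to-1 mapping only for the three-point acceptability scale. Assuming the same linear rule (first category at 0.1, last at 0.9) was applied to the five-point scales, the mapping and a per-stimulus mean can be sketched as follows. The category lists repeat the English labels from the Method section; the responses in the example are hypothetical.

```python
ACCEPTABILITY = ["unacceptable", "tolerable", "acceptable"]
SPEECH_CLARITY = ["very unclear", "unclear", "average", "clear", "very clear"]


def category_to_score(category: str, scale: list[str]) -> float:
    """Map a response category linearly onto 0.1..0.9 by its position on the scale."""
    if len(scale) < 2:
        raise ValueError("a scale needs at least two categories")
    index = scale.index(category)  # raises ValueError for an unknown category
    return 0.1 + 0.8 * index / (len(scale) - 1)


def mean_score(responses: list[str], scale: list[str]) -> float:
    """Mean mapped score over a list of responses (e.g. ten listeners, one stimulus)."""
    return sum(category_to_score(r, scale) for r in responses) / len(responses)


print([round(category_to_score(c, ACCEPTABILITY), 2) for c in ACCEPTABILITY])
print([round(category_to_score(c, SPEECH_CLARITY), 2) for c in SPEECH_CLARITY])
# Hypothetical responses from ten listeners to one stimulus.
print(round(mean_score(["tolerable"] * 6 + ["acceptable"] * 4, ACCEPTABILITY), 2))
```

Under this rule the five-point scales map to 0.1, 0.3, 0.5, 0.7, and 0.9, so 0.5 is the mid-point on every scale, and six "tolerable" plus four "acceptable" responses average to 0.66.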

Figure 3. Mean scores for the six parameters of loudness, speech clarity, annoyance of background noise, clarity of background noise, calmness of the recordings, and overall acceptability as a function of compression ratio, regulation time and channel bandwidth across stimuli.

The loudness parameter was included merely as a control question, to rule out the possibility that listeners rated stimuli as poor on the acceptability scale because they were perceived as too loud or too soft. The results for the loudness parameter (top left panel) indicate that all of the processed sound stimuli were within the comfortable range of the loudness scale. This was to be expected, since all files were processed and equalized to the same spectrum (62 dB SPL overall) and amplified according to the measured thresholds using NAL-RP. However, close inspection of the plot reveals that perceived loudness increased slightly with faster regulation times and higher compression ratios. This is most likely because background noise is more readily amplified during brief pauses in the speech signal, increasing the overall perceived loudness.

An examination of the remaining five plots in Figure 3 reveals a highly similar pattern in the results for perceived speech clarity, annoyance of background noise, clarity of background noise, calmness of the recordings, and overall acceptability. It appears that when fast-acting compression is used, the subjectively perceived sound quality decreases as a function of increased compression ratio and narrower channel bandwidth, while compression ratio and channel bandwidth seem to have minimal effect when slow-acting compression is used. As the pattern is so similar for all five sound quality-related parameters, only the results for overall acceptability will be analyzed in detail in the following.

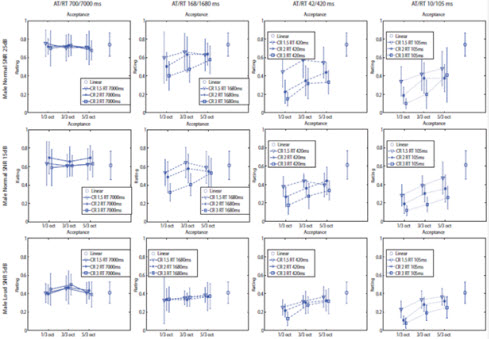

Figure 4 shows the overall acceptance scores as a function of bandwidth for the three compression ratios across all stimuli obtained at 25 dB SNR (top panel), 15 dB SNR (middle panel), and 5 dB SNR (bottom panel). Attack/release times are shown in order of decreasing duration, starting from 700/7000 ms (leftmost column).

A within-subjects (repeated-measures) analysis of variance (ANOVA) was conducted to determine the effects of bandwidth, compression time constants, compression ratio, and signal-to-noise ratio, and their interactions.
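To make the logic of a within-subjects analysis concrete, the sketch below computes the F statistic for a single within-subjects factor: variance between subjects is removed first, and the condition effect is tested against the condition × subject residual. The study's analysis had four within-subjects factors and their interactions, which this sketch does not attempt; such an analysis would normally be run in a statistics package (for example, `AnovaRM` in the Python statsmodels library). No sphericity correction or p-value is computed here, and the scores are toy numbers, not data from the study.

```python
def rm_anova_oneway(scores):
    """One-way repeated-measures ANOVA.

    scores[s][c] is the score of subject s in condition c (every subject rates every
    condition). Returns (F, df_condition, df_error). No sphericity correction is applied.
    """
    n = len(scores)          # number of subjects
    k = len(scores[0])       # number of conditions
    grand = sum(sum(row) for row in scores) / (n * k)
    cond_means = [sum(row[c] for row in scores) / n for c in range(k)]
    subj_means = [sum(row) / k for row in scores]

    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)
    ss_error = ss_total - ss_cond - ss_subj  # condition x subject residual

    df_cond, df_error = k - 1, (n - 1) * (k - 1)
    f_stat = (ss_cond / df_cond) / (ss_error / df_error)
    return f_stat, df_cond, df_error


# Toy scores: 3 hypothetical subjects x 3 conditions (not data from the study).
toy = [[1.0, 2.0, 3.0],
       [2.0, 3.0, 5.0],
       [3.0, 4.0, 4.0]]
print(rm_anova_oneway(toy))
```

For the toy scores the condition effect gives F = 9 with (2, 4) degrees of freedom. Removing the between-subject sum of squares is what lets a repeated-measures design detect effects with only ten listeners, since consistent individual differences in rating style do not inflate the error term.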

Two general observations can be made from the results displayed in Figure 4: increased noise level and short time constants both appear to have had a detrimental effect on subjectively perceived sound quality. A comparison of the ratings obtained at the three SNRs of 25, 15, and 5 dB reveals an overall decrease in acceptability as the noise level increases. The statistical analysis indicated that the main effect of SNR was significant (p<0.01). When stimuli were presented at the poorest SNR of 5 dB, the vast majority of the ratings fell below the 0.5 mid-point of the scale (labeled "tolerable"), regardless of regulation time constants. This aversion to increased noise levels was to be expected.

Figure 4. Overall acceptance scores as a function of bandwidth for the three compression ratios across all stimuli at 25 dB SNR (top panel), 15 dB SNR (middle panel), and 5 dB SNR (bottom panel). Attack/release times decrease from 700/7000 ms (leftmost column) to 168/1680 ms, 42/420 ms, and 10/105 ms (rightmost column). All results are plotted as the mean and standard deviation of the responses of the ten subjects.

A general tendency for increasingly lower ratings to be assigned as time constants became shorter is observable at all three SNRs. The effect was clearest at the better SNRs (top and middle panels), where the majority of the acceptability ratings obtained with the longest time constants (leftmost column) were located well above 0.5 ("tolerable"). The ratings increasingly dropped as time constants grew shorter, and the majority were located below 0.5 when the shortest (rightmost column) regulation times were used. The statistical analysis confirmed that the main effect of time constants was significant (p<0.001).

The results also indicated that compression time constants interacted with compression ratio and channel bandwidth. The plots for the longest AT/RT investigated show very little variation in the overall acceptability ratings as a function of bandwidth and compression ratio at any of the three SNRs. However, when the regulation times were shortened to 168/1680 ms (second column from the left), listeners tended to assign higher acceptability scores to lower compression ratios at the better SNRs of 25 and 15 dB (top and middle panels). This tendency was observable at all three SNRs when the faster regulation times of 42/420 ms and 10/105 ms were used. The statistical analysis confirmed that the main effect of CR was significant (p<0.001) and that there was a significant interaction between regulation times and CR (p<0.001).

Moreover, with the faster regulation times, there was a strong preference for the sound quality generated by linear amplification when the stimuli were presented at SNRs of 25 dB and 15 dB. The preference for linear amplification is much less clear at the poorest SNR of 5 dB, presumably because the overall increase in noise level caused a general reduction in the acceptability of all the stimuli. However, the preference for low compression ratios was also observable at the 5 dB SNR when the faster regulation times (42/420 and 10/105 ms) were used. The statistical analysis confirmed that the main effect of SNR was significant (p<0.001).

Narrowing the frequency channel bandwidth seemed to further reinforce the detrimental effect of a high compression ratio and short regulation times. No effect of bandwidth was observed at the slowest regulation times (700/7000 ms). However, a negative effect of narrowing the bandwidth was already seen at the second-slowest AT/RT of 168/1680 ms for stimuli presented at the 25 and 15 dB SNRs. The preference for the wider bandwidth became increasingly strong as time constants became shorter, and was seen most clearly at the shortest AT/RT investigated (10/105 ms). When short regulation times were used, the sound quality ratings were centered below the 0.5 mid-point of the rating scale. Even so, lower scores were consistently assigned to the stimuli generated with the highest compression ratio (3) and the narrowest bandwidth (1/3 octave), while higher scores were assigned to the stimuli generated with the lowest compression ratio (1.5) and the widest bandwidth (5/3 octave), regardless of SNR. The statistical analysis showed that the main effect of bandwidth was significant (p<0.001) and that there was a significant bandwidth × time constants interaction (p<0.001), while no significant bandwidth × CR interaction was found (p>0.05).

Summary and Discussion

The main purpose of the present study was to investigate the influence of channel bandwidth in combination with compression time constants, compression ratio, and signal-to-noise ratio on subjectively perceived sound quality.

A number of interesting effects were observed. With slow regulation times, acceptance was independent of channel bandwidth and compression ratio. Overall acceptance did, however, decrease significantly with decreasing SNR (although to no greater extent than was seen with linear processing). When the time constants were shorter than 700/7000 ms, subjectively evaluated acceptability decreased with shorter regulation times, higher compression ratios, and narrower bandwidths. Statistical tests revealed a significant interaction between regulation times and compression ratio, and between regulation times and bandwidth. These results are consistent with those of Neuman et al. (1998), Hansen (2002), and Keidser et al. (2007), who found an overall preference for low compression ratios, and with the compression ratio × regulation times interaction reported by Neuman et al. (1998), which indicates that the negative impact of increasing the compression ratio is stronger the shorter the regulation times. None of these studies investigated the effect of varying the channel bandwidth on subjectively perceived sound quality. The present results, however, indicate that the degradation in subjectively perceived sound quality caused by higher compression ratios and shorter regulation times is further exacerbated by narrowing the channel bandwidth.

The results of the present study imply that in order to achieve an acceptable sound quality at favorable signal-to-noise ratios in modern multiple frequency channel devices, compression parameters should be implemented with care. Fast-acting compression should ideally only be applied with very low compression ratios in hearing aids with narrow frequency channel bandwidths.

Although short regulation times may provide more audibility at soft input levels, the results reported here indicate that the audibility achieved will come at a considerable cost in terms of degraded sound quality. As mentioned in the introduction, poor sound quality has an important bearing on hearing aid user satisfaction, and may ultimately result in non-use of the hearing aids. In this light, although short time constants may provide optimal speech perception in some situations, most hearing aid users would probably be best served with a predominantly slow compression system. In specific situations, such as abruptly changing sound environments, where slow-acting compression may not provide sufficient audibility of soft sounds and protection from sudden loud sounds, secondary systems could temporarily allow for faster regulation. In stationary sound situations, fast regulation should be applied after careful consideration of the balance between sound quality and possible intelligibility advantages.
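The paragraph above proposes, without specifying a mechanism, that a predominantly slow system could let a secondary system take over temporarily when the sound environment changes abruptly. One hypothetical way to realize this, sketched below and not the authors' or any manufacturer's algorithm, is to run a slow and a fast level tracker in parallel and use the fast one only while the two disagree by more than a switching threshold. The time constants reuse the slowest and fastest settings from the study (treated as one-pole constants); the 10 dB threshold and the input levels are arbitrary assumptions.

```python
import math


def coeff(tau_ms, frame_ms=1.0):
    """One-pole smoothing coefficient for time constant tau_ms."""
    return math.exp(-frame_ms / tau_ms)


def dual_rate_estimate(levels_db, slow=(700.0, 7000.0), fast=(10.0, 105.0),
                       switch_db=10.0, frame_ms=1.0):
    """Level estimate that normally follows a slow tracker but temporarily uses a fast
    tracker while the two differ by more than switch_db (hypothetical switching rule).

    slow and fast are (attack_ms, release_ms) pairs; levels_db holds one level per frame.
    """
    s = f = levels_db[0]
    out = []
    for level in levels_db:
        a_s = coeff(slow[0] if level > s else slow[1], frame_ms)
        a_f = coeff(fast[0] if level > f else fast[1], frame_ms)
        s = a_s * s + (1.0 - a_s) * level
        f = a_f * f + (1.0 - a_f) * level
        out.append(f if abs(f - s) > switch_db else s)
    return out


# Hypothetical input: 500 ms at 50 dB, then a sudden 100 ms sound at 90 dB (1 ms frames).
levels = [50.0] * 500 + [90.0] * 100
est = dual_rate_estimate(levels)
print(round(est[499], 1), round(est[-1], 1))
```

With this input the estimate stays at 50 dB before the loud sound and reaches about 90 dB by its end, whereas the slow tracker alone would only have risen to about 55 dB, too late to reduce gain for protection. In steady conditions the two trackers converge and the slow behavior, with its better sound quality, is retained.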

It should be noted that this study was conducted with normal-hearing subjects. Hansen (2002) found some differences in the scoring of hearing-impaired and normal-hearing subjects as a function of compression threshold, possibly related to the audibility of soft sounds. Thus, whether the results are generalizable beyond the mildly-impaired segment of the hearing-impaired population, or indeed, to hearing-impaired listeners at all, must be determined by future research.

Ole Hau is a digital signal processing specialist and holds an MSc in electrical engineering. He has worked with sound quality optimization in top-range DECT telephones at Bang & Olufsen Telecom, and as an independent digital signal processing and embedded software consultant, before joining Widex in 2005. He is currently working as a designer of fitting and processing algorithms for hearing aids at the Widex Innovation Lab.

Hanne Pernille Andersen holds a PhD in adult language acquisition and phonetics, and has taught language acquisition, phonetics, and sociolinguistics at the University of Copenhagen, Denmark. She joined the Widex Audiological Research Department in 2009, where she is currently working as a product specialist and scientific writer.

Correspondence may be addressed to Ole Hau at [email protected]

References

Dillon, H. (2001). Hearing aids. New York: Thieme.

Gatehouse, S., Naylor, G., & Elberling, C. (2006). Linear and nonlinear hearing aid fittings: 1. Patterns of benefit. International Journal of Audiology, 45(3), 130-152.

Hansen, M. (2002). Effects of multi-channel compression time constants on subjectively perceived sound quality and speech intelligibility. Ear & Hearing, 23(4), 369-380.

Hickson, L., Clutterbuck, S., & Kahn, A. (2010). Factors associated with hearing aid fitting outcomes on the IOI-HA. International Journal of Audiology, 49(8), 586-595.

Keidser, G., Dillon, H., Dyrlund, O., Carter, L., & Hartley, D. (2007). Preferred low- and high-frequency compression ratios among hearing aid users with moderately severe to profound hearing loss. Journal of the American Academy of Audiology, 18(1), 17-33.

Kochkin, S. (2000). MarkeTrak V: Why my hearing aids are in the drawer: The consumer's perspective. The Hearing Journal, 53(2), 34-42.

Moore, B. C. J., Stainsby, T. H., Alcántara, J. I., & Kühnel, V. (2004). The effect on speech intelligibility of varying compression time constants in a digital hearing aid. International Journal of Audiology, 43(7), 399-409.

Nabelek, I. V., & Robinette, L. N. (1977). A comparison of hearing aids with amplitude compression. Audiology, 16(1), 73-76.

Neuman, A.C., Bakke, M.H., Mackersie, C., Hellman, S., & Levitt, H. (1998). The effect of compression ratio and release time on the categorical rating of sound quality. Journal of the Acoustical Society of America, 103(5), 2273-2281.

Patterson, R. D., Allerhand, M., & Giguère, C. (1995). Time-domain modeling of peripheral auditory processing: A modular architecture and a software platform. Journal of the Acoustical Society of America, 98(4), 1890-1894.

Plomp, R. (1988). The negative effect of amplitude compression in multichannel hearing aids in the light of the modulation-transfer function. Journal of the Acoustical Society of America, 83(6), 2322-2327.

Schmidt, E. (2006). Hearing aid processing of loud speech and noise signals: Consequences for loudness perception and listening comfort. Unpublished PhD thesis. Technical University of Denmark, Lyngby.

Hearing Aid Compression: Effects of Speed, Ratio and Channel Bandwidth on Perceived Sound Quality

April 2, 2012

This article is sponsored by Widex.

Related Courses

1

https://www.audiologyonline.com/audiology-ceus/course/prioritizing-end-user-empowerment-and-39696

Prioritizing End-User Empowerment and Why That Matters

As hearing aid wearers engage with technology more than they have in decades past, hearing care providers (HCPs) must remain at the forefront of the field, providing wearers with solutions that will enhance their listening experience, both within the hearing aid – and beyond. This course will explore the ecosystem of Widex solutions designed to empower wearers to live confidently with their devices and hear their best in every situation.

auditory, textual, visual

129

USD

Subscription

Unlimited COURSE Access for $129/year

OnlineOnly

AudiologyOnline

www.audiologyonline.com

Prioritizing End-User Empowerment and Why That Matters

As hearing aid wearers engage with technology more than they have in decades past, hearing care providers (HCPs) must remain at the forefront of the field, providing wearers with solutions that will enhance their listening experience, both within the hearing aid – and beyond. This course will explore the ecosystem of Widex solutions designed to empower wearers to live confidently with their devices and hear their best in every situation.

39696

Online

PT30M

Prioritizing End-User Empowerment and Why That Matters

Presented by Taylor DeVito, AuD

Course: #39696Level: Intermediate0.5 Hours

AAA/0.05 Intermediate; ACAud inc HAASA/0.5; AHIP/0.5; BAA/0.5; CAA/0.5; IACET/0.1; IHS/0.5; Kansas, LTS-S0035/0.5; NZAS/1.0; SAC/0.5

As hearing aid wearers engage with technology more than they have in decades past, hearing care providers (HCPs) must remain at the forefront of the field, providing wearers with solutions that will enhance their listening experience, both within the hearing aid – and beyond. This course will explore the ecosystem of Widex solutions designed to empower wearers to live confidently with their devices and hear their best in every situation.

2

https://www.audiologyonline.com/audiology-ceus/course/recommending-benefit-focused-solutions-from-39192

Recommending Benefit-Focused Solutions from Widex

In an industry with so many products and features to choose from, hearing aid selection can be overwhelming – both for the wearer and the Hearing Care Professional (HCP). This course is designed for HCPs aiming to enhance the ease and efficiency of their hearing aid recommendations. With implementable suggestions and unique counseling tools, this course will equip providers with the knowledge and skills necessary to accurately align the goals and priorities of the wearer with the most optimal Widex hearing solution.

auditory, textual, visual

129

USD

Subscription

Unlimited COURSE Access for $129/year

OnlineOnly

AudiologyOnline

www.audiologyonline.com

Recommending Benefit-Focused Solutions from Widex

In an industry with so many products and features to choose from, hearing aid selection can be overwhelming – both for the wearer and the Hearing Care Professional (HCP). This course is designed for HCPs aiming to enhance the ease and efficiency of their hearing aid recommendations. With implementable suggestions and unique counseling tools, this course will equip providers with the knowledge and skills necessary to accurately align the goals and priorities of the wearer with the most optimal Widex hearing solution.

39192

Online

PT30M

Recommending Benefit-Focused Solutions from Widex

Presented by Anna Javis, AuD

Course: #39192Level: Intermediate0.5 Hours

AAA/0.05 Intermediate; ACAud inc HAASA/0.5; AHIP/0.5; BAA/0.5; CAA/0.5; IACET/0.1; IHS/0.5; Kansas, LTS-S0035/0.5; NZAS/1.0; SAC/0.5

https://www.audiologyonline.com/audiology-ceus/course/improved-speech-intelligibility-in-noise-40494

Improved Speech Intelligibility in Noise with Ultra-Low Delay

Widex introduced ZeroDelay™ technology to reduce processing time and the negative comb filter effect in open fits. With the addition of a new directional microphone design, the wearer can achieve the benefits of directional technology without notable increases in processing time. This study examines whether this new directional processing provides improved speech intelligibility in noise and evaluates the sound experience compared to other products with ZeroDelay processing.

Presented by Jennifer Weber, AuD, CCC-A, Eric Branda, AuD, PhD

Course: #40494 | Level: Intermediate | 0.5 Hours

AAA/0.05 Intermediate; ACAud inc HAASA/0.5; AHIP/0.5; BAA/0.5; CAA/0.5; IACET/0.1; IHS/0.5; Kansas, LTS-S0035/0.5; NZAS/1.0; SAC/0.5

https://www.audiologyonline.com/audiology-ceus/course/widex-allure-balanced-focus-and-41265

Widex Allure: Balanced Focus and Awareness in Real Life

This course discusses the balance between focus and awareness for hearing aid users, arguing that this should not be reduced to the simple metric of signal-to-noise ratio. It then considers the results of a survey of 57 experienced hearing aid wearers and their implications for the balance of focus and awareness in real life.

Presented by Laura Winther Balling, PhD, Sonie Harris, AuD, Fei Leung, BSc, Kathy Pineo, M.Cl.Sc, Dana Helmink, AuD, Bertrand Philippon, MSc

Course: #41265 | Level: Intermediate | 1 Hour

AAA/0.1 Intermediate; ACAud inc HAASA/1.0; AHIP/1.0; BAA/1.0; CAA/1.0; Calif. SLPAB/1.0; IACET/0.1; IHS/1.0; Kansas, LTS-S0035/1.0; NZAS/1.0; SAC/1.0; TX TDLR, #142/1.0 Manufacturer

https://www.audiologyonline.com/audiology-ceus/course/strategies-for-crafting-more-natural-39990

Strategies for Crafting a More Natural Hearing Experience

In the pursuit of providing the most authentic and natural hearing experience for the wearer, artificial manipulation of the amplified sound should be minimized as much as possible, but that is not easy to do. In this course, we will delve into the distinctive features of the Widex Sound Philosophy, examining how they work and their impact on both providers and wearers. The substantial, evidence-supported advantages will also be explored.

Presented by Amanda Albertsen, AuD

Course: #39990 | Level: Intermediate | 1 Hour

AAA/0.1 Intermediate; ACAud inc HAASA/1.0; AHIP/1.0; BAA/1.0; CAA/1.0; IACET/0.1; IHS/1.0; Kansas, LTS-S0035/1.0; NZAS/1.0; SAC/1.0
