Editor’s Note: This text course is an edited transcript of a live webinar. Download supplemental course materials.

Dr. John Nelson: The title of today's course is based on what I learned as part of my audiology education: hearing aids have four components: a microphone, an amplifier, a receiver, and a battery. This made it simple to talk to classmates about hearing aids and also to consumers. When a patient came in and was unfamiliar with hearing aids, the technology itself was straightforward.

Hearing aids have come a long way since I finished my master's degree in 1992, when linear amplification was fit almost exclusively. Hearing aids were adjusted with potentiometers, which were small screws on the faceplate of the hearing aid. This was on the cusp of digital programming, but nothing was done through a computer. How do we start to discuss how the hearing aid works with an end user today, especially if they have no experience with amplification? We need to have multiple levels of communication to be able to talk about technology. Our patients cannot remember all of the information that we tell them, so we need to have a way to organize it, make it straightforward, and invite questions.

There are different stories that professionals use when talking about hearing aids. Some focus on end-user benefit or listening environments. However, there are a lot of people that want to know about the technology, and that is what our story is geared toward today.

A Five-Chapter Story

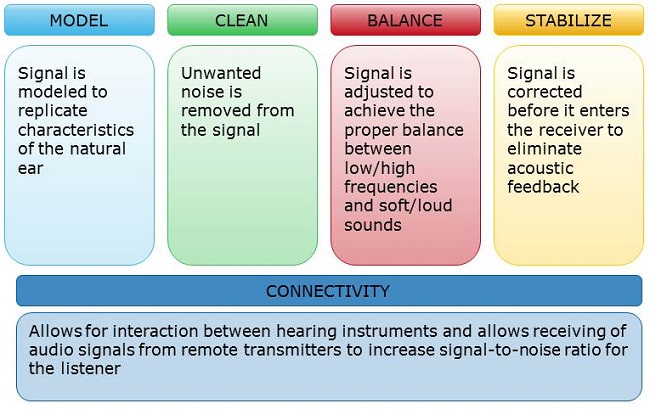

I started this story as a four-chapter story: model, clean, balance, and stabilize (Figure 1). I felt like the first part of a hearing aid, through the microprocessor, models the signal that is coming into the ear. First, start talking to your patients about the hearing aid having a microphone, microprocessor, receiver, and a battery source. In terms of the microprocessor and what a hearing aid can do differently, you will talk about cleaning, balancing and stabilizing.

Figure 1. What was once a four-chapter talking strategy now extends to five: model, clean, balance, stabilize, and connectivity.

Model means that the incoming signal is replicated. The signal should be analyzed, with thought given to how the signal will be processed in the future. Clean is where we want to remove unwanted noise from the signal and make the signal as clean and natural as possible. Balance makes sure that soft sounds are heard, but loud sounds are not uncomfortable; there should be a balance between the right ear and the left ear, and between speech coming from the front compared to noise coming from the back. Stabilize means making sure that the hearing aid does not have any acoustic feedback or artifact while ensuring audibility.

Hearing aids today are much more advanced than they were before. The research and development that goes into hearing aid technology today aims to model the signal coming in, clean it, balance it for hearing loss, and make sure the hearing aid does not whistle. At this point in the conversation, we could wait and see how the patient responds or what questions they have. Maybe they understood that the hearing aid is more than just an amplifier, and that met their needs. Maybe they are more interested in the price, color, fit, or how to change the battery. You have an opportunity here to give a brief introduction to the technology through these four chapters and then expand on the needs of your patient.

I did add a fifth chapter to this story, and it is connectivity. Connectivity has been available for decades, but it is only in the last five to six years that we have seen wireless connectivity for accessories built into the hearing aid itself. We could do FM systems, direct audio input, neck loops, infrared and more, but patients were not always interested in those options because they were a separate system and could be very expensive. Today, we should start thinking about hearing aids and connectivity as a package that can be offered to the individual.

Model

Since I work for ReSound, I will be talking about the feature names within ReSound products, but most of the hearing aid companies have similar strategies. In the model section, we want to look at the input signal and analyze it in the same way the natural ear analyzes the sound. All manufacturers do this in one way or another, and they may do it with filtering or digital processing equations. At ReSound, we do WARP processing, which is a filter analysis based on a fast Fourier transform (FFT). WARP processing takes the input signal and does mathematical computations to determine the energy in 17 different bands in our top products. Those 17 bands, or channels, are spaced nonlinearly, as frequencies are across the cochlea. This allows us to make decisions about processing in different channels.

The other part of the model section is the Environmental Classifier. This analyzes the input signal and determines what parts of the signal are soft, comfortable, loud, speech, noise, and speech in noise. We know that the cochlea processes sounds tonotopically, with high frequencies at one end and low frequencies at the other end. We also know that those frequency spacings are nonlinear. WARP processing spaces the 17 bands or channels in the same way that the cochlea does. We want to have auditory spacing in the hearing aid that is similar to the way that the auditory system processes sounds. WARP builds the natural characteristics of the cochlea into the core processor of the hearing aid.
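To make "nonlinear, cochlea-like spacing" concrete, here is a minimal sketch that places 17 bands at equal steps along the cochlea using Greenwood's published frequency-position function for the human ear. This is an illustration only and not the WARP algorithm; the 100 Hz to 8000 Hz range and the function names are assumptions.

```python
import math

# Greenwood's frequency-position function for the human cochlea:
# x is the relative distance from the apex (0.0) to the base (1.0).
def greenwood_hz(x):
    return 165.4 * (10 ** (2.1 * x) - 0.88)

# Inverse of the function above: frequency in Hz -> relative position.
def greenwood_position(f_hz):
    return math.log10(f_hz / 165.4 + 0.88) / 2.1

def band_edges(n_bands=17, f_low=100.0, f_high=8000.0):
    """Band edges at equal steps in cochlear position (range is assumed)."""
    x_low = greenwood_position(f_low)
    x_high = greenwood_position(f_high)
    step = (x_high - x_low) / n_bands
    return [greenwood_hz(x_low + i * step) for i in range(n_bands + 1)]

edges = band_edges()
widths = [hi - lo for lo, hi in zip(edges, edges[1:])]
for lo, hi in zip(edges, edges[1:]):
    print(f"{lo:7.0f} Hz to {hi:7.0f} Hz")
```

The printed bands are narrow in the low frequencies and progressively wider in the high frequencies, which is the general pattern the talk describes.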

The Environmental Classifier has an understanding of what type of signal is coming into the hearing aid. We can look at whether a sound is soft, moderate or loud, and then provide different amounts of gain based on that input level. We could also look at other dynamics of the input signal, whether it would be speech-like, speech in noise, or noise. The ReSound system looks first at the loudness of the signal. If the input is less than 54 dB SPL, it is considered quiet. However, if that input is soft and has amplitude characteristics similar to speech, instead of being classified as a quiet input signal, it will be classified as a speech input signal. This is an important strategy to have available in hearing aid processing, because automatic decisions in the hearing aid are more correct and precise.
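The level-first, speech-second decision described above can be sketched as a small function. Only the 54 dB SPL boundary comes from the talk; the function name, the boolean inputs, and the handling of inputs above the boundary are simplifying assumptions, not the actual classifier.

```python
QUIET_THRESHOLD_DB_SPL = 54.0  # boundary stated in the talk

def classify(level_db_spl, speech_like, noise_present):
    """Toy classifier: check level first, then speech-like character.

    speech_like and noise_present are assumed to come from separate
    detectors that are not modeled here.
    """
    if level_db_spl < QUIET_THRESHOLD_DB_SPL:
        # A soft input with speech-like amplitude behavior is treated
        # as speech rather than as quiet.
        return "speech" if speech_like else "quiet"
    if speech_like and noise_present:
        return "speech in noise"
    if speech_like:
        return "speech"
    return "noise"  # assumption: loud non-speech input counts as noise

print(classify(45.0, False, False))  # soft, not speech-like
print(classify(45.0, True, False))   # soft, speech-like
print(classify(70.0, True, True))    # louder, speech with noise
```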

Classification

We can do something different for quiet non-speech compared to quiet soft speech. We do this by looking at the characteristics of the input signal. We look at the amplitude characteristics. We look at how the frequency characteristics change between low frequency and high frequency, because we know that some of those characteristics are typical of the speech signals that come in during day-to-day situations. For example, we know that the low frequencies have periodicity to them, which comes from the fundamental frequency of voicing in vowels. There is an alternation of low frequencies and high frequencies in speech as you go from vowel sounds into consonant sounds. We use that information to determine if the input signal is speech-like.

On the other hand, background noise fills in the valleys of the time waveform. Our engineers investigated the peak-to-valley ratios of signals and compared them to known signal-to-noise ratios (SNRs). If you have a known SNR, you know the energy of the signal and of the noise, and then you can calculate the peak-to-valley ratio of the combined signal. With hearing aids, we do not know the intensity of the speech and we do not know the intensity of the noise. The only thing we have is the relationship between the peaks and the valleys. When the peaks and valleys become farther apart, the speech signal is louder than the noise signal. In that way, we can estimate that there is a very good SNR. If the peaks and the valleys of the signal come closer together, we can estimate that the SNR is very poor. This allows us to decide if it is a speech-in-noise signal compared to a noise-only signal. It is based on research to determine what would happen in the situation of a known SNR compared to the estimated SNR that we do on the hearing aids.
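A small numeric sketch shows why the peak-to-valley distance tracks SNR. The frame levels, the percentile choices, and the helper names below are made up for illustration and are not taken from the hearing aid's implementation.

```python
import math

def add_noise(level_db, noise_db):
    """Combine a frame level with a steady noise level (power sum)."""
    return 10 * math.log10(10 ** (level_db / 10) + 10 ** (noise_db / 10))

def percentile(values, fraction):
    """Nearest-rank style percentile; fraction is between 0 and 1."""
    ordered = sorted(values)
    return ordered[round(fraction * (len(ordered) - 1))]

def peak_to_valley_db(frame_levels_db, peak=0.9, valley=0.1):
    """Distance between high and low envelope levels, in dB."""
    return percentile(frame_levels_db, peak) - percentile(frame_levels_db, valley)

# Hypothetical speech envelope: syllable peaks alternating with pauses.
speech = [65, 35, 66, 34, 64, 36, 65, 35, 66, 34]

quiet_room = [add_noise(s, 30) for s in speech]  # low background noise
noisy_room = [add_noise(s, 60) for s in speech]  # noise fills the valleys

ptv_quiet = peak_to_valley_db(quiet_room)
ptv_noisy = peak_to_valley_db(noisy_room)
print(f"quiet room: {ptv_quiet:.1f} dB, noisy room: {ptv_noisy:.1f} dB")
```

The peaks barely move when noise is added, but the valleys rise to the noise level, so the peak-to-valley distance shrinks as the SNR gets worse.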

In order for the hearing aid to make decisions about processing on behalf of the user, we have to make sure that our classifier is ranking situations in the same way that people with normal hearing and hearing impairment would rank those situations when listening to samples. We went to an external research site in Europe and played 40 different sound files to people with normal hearing, to people with hearing losses, and also to three different hearing aid brands that have environmental classification and data logging. We had the test subjects rank where they thought the presented signal would fall in seven different categories. Then we looked at the hearing aids and determined how the hearing aid classified the sounds.

We found that there was very good correlation between the responses of the test subjects with normal hearing and with hearing loss and the categorization by the hearing aids. Overall, the test subjects ranked the signal being presented in the same way as the hearing aid algorithms close to 88% of the time. I would not expect a 100% correlation because we have human test subjects that have individual, subjective perceptions. The ReSound hearing aid correlated with the user perception about 98% of the time. We had very good correlation between what we wanted the hearing aid to do and what the hearing aid would do in a real-life situation.

One of the questions I get regarding our classification system is why we do not have a music classification mode. Music is very difficult to classify because it does not have a standard set of features. Sometimes music is a vocalist. It might be a symphony. It might be a rock band. All of those have different types of long-term dynamics, so it is difficult to come up with a standard set of music features. The other thing that makes music classification difficult is being able to classify music in the same way as the person wearing the hearing aid. Music is an emotional listening situation. You cannot have an accurate classification system for a signal that is so dynamic and changes rapidly over time. Furthermore, each individual may have different reactions to music. Both the input signal and individual rankings vary widely.

Clean

The clean chapter is where the hearing aid is trying to clean up the signal that is being delivered to the ear. This is where you would wait to see if the patient had more questions about this section. If they do, then you can talk about some of the algorithms specific for cleaning.

One of those would be a noise reduction system. Noise reduction systems try to decrease the background noise for increased comfort while not modifying or altering the speech signal to which they are trying to listen. Another algorithm that is part of the clean section would be expansion. Expansion tries to lower the noise floor or very soft input levels of the hearing instrument to make sure that you have the best sound quality. Next would be wind reduction, which works to decrease the negative effects and loud sounds of turbulence at the microphones.

Noise Reduction

The noise reduction algorithms work quite differently across manufacturers. While there is an abundance of research on how the different systems work, one of the things on which most people agree is that it is very difficult to document an improvement in speech understanding in noise with noise reduction. However, you can get increased comfort, which possibly leads to the increased ability to focus in those listening environments. ReSound’s noise reduction algorithm is more of a spectral subtraction approach: it estimates the noise between the speech segments so that it knows what to cancel over the long term, which enhances the envelope characteristics of the signal. The goal would be to enhance the amplitude characteristics of the signal by decreasing the background noise between the speech samples.
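The general idea of spectral subtraction can be sketched in a few lines: estimate the noise spectrum from frames that fall between speech segments, then subtract it from every frame. This is the textbook form with made-up numbers, not ReSound's implementation; the pause flags are assumed to come from a speech detector that is not shown, and the spectral floor is an illustrative choice.

```python
def estimate_noise(frames, is_pause):
    """Average the magnitude spectrum over frames flagged as pauses."""
    pause_frames = [f for f, pause in zip(frames, is_pause) if pause]
    n_bins = len(frames[0])
    return [sum(f[b] for f in pause_frames) / len(pause_frames)
            for b in range(n_bins)]

def spectral_subtract(frame, noise, floor=0.1):
    """Subtract the noise estimate per bin, keeping a small floor so that
    no bin is driven to zero or below."""
    return [max(m - n, floor * m) for m, n in zip(frame, noise)]

# Three frames of three-bin magnitude spectra (hypothetical values).
frames = [
    [1.0, 1.0, 1.0],  # pause: noise only
    [5.0, 3.0, 1.0],  # speech plus noise
    [1.0, 1.0, 1.0],  # pause: noise only
]
is_pause = [True, False, True]

noise = estimate_noise(frames, is_pause)
cleaned = spectral_subtract(frames[1], noise)
print(noise, cleaned)
```

Bins dominated by speech keep most of their energy, while bins that contain only noise are pushed down toward the floor.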

Expansion

Expansion has been available for many years. It was even more important when we had a higher equivalent input noise level on hearing aids. Now that hearing aid processors are quieter, being able to adjust the expansion kneepoint is not as critical. You might need to adjust it for patients who can hear the soft input levels very well, but there are other ways to compensate for that with current technology.

Windguard

The wind noise algorithms have differed over time as well as across manufacturers. These have become much better at representing the wind turbulence. In the past, going over a speed bump in the road could cause just enough turbulence on the microphones that the hearing aids would turn down the gain, and it sounded like the hearing aid went dead. The Windguard in the current ReSound products takes advantage of being able to remember what has happened in the past on the output of the hearing aid. When there is no wind, the hearing aid analyzes the input signal to determine the intensity variations over time in each one of the channels. As soon as the turbulence is detected at the microphones, the Windguard is activated.

No wind program can completely eliminate the turbulence and noise that comes from wind because it originates at the microphone level. However, we can make it more comfortable. In the past, that meant turning down the gain so much that people did not hear any amplification. ReSound’s Windguard algorithm is written such that the gain of the hearing instrument is turned down so that the output matches what the hearing aid was doing before the wind was detected. In this way, instead of having a huge increase in output intensity because of the wind, the intensity at the ear remains the same. Now the SNR is reduced because of the turbulence at the microphone, but the comfort and the audibility of the signal are maintained, so it is not as disruptive.
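The "match the pre-wind output" idea comes down to simple decibel arithmetic, sketched below for a single channel. The function name and all of the levels are illustrative assumptions; the real algorithm works per channel on remembered output statistics.

```python
def wind_gain_db(prescribed_gain_db, input_db, pre_wind_output_db):
    """Gain to apply so the output does not exceed the pre-wind output.

    All values are in dB. When there is no excess, the prescribed gain
    is returned unchanged.
    """
    unreduced_output_db = input_db + prescribed_gain_db
    excess_db = max(0.0, unreduced_output_db - pre_wind_output_db)
    return prescribed_gain_db - excess_db

# Before the wind: 55 dB SPL input with 20 dB of gain gives 75 dB SPL out.
pre_wind_output_db = 55.0 + 20.0

# Wind turbulence raises the level at the microphone to 80 dB SPL.
gain_in_wind_db = wind_gain_db(20.0, 80.0, pre_wind_output_db)
output_in_wind_db = 80.0 + gain_in_wind_db
print(gain_in_wind_db, output_in_wind_db)
```

In this example the gain drops by 25 dB, so the level at the ear stays at the remembered 75 dB SPL instead of jumping to 100 dB SPL.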

Not all Algorithms are Created Equal

One of the things I mentioned is that not all algorithms are the same. A study came out of Germany last year that looked at four different manufacturers’ noise reduction algorithms, labeled A, B, C and D. Measurements were made with a -4 dB SNR and a +4 dB SNR. Those signals were presented to the hearing aids both with the noise reduction algorithms turned off and then turned on. If the noise reduction algorithm did nothing to the signal, that would mean that the noise-reduction-off condition equaled the noise-reduction-on condition, and there would be no difference, or a zero value.

Products C and D showed little difference between noise reduction on and off for both SNRs. That is quite unfortunate, because if there is no difference, there can be no benefit. Products A and B showed different results, in that the algorithm is doing something different to the signal when it is turned on. Unfortunately, we have not come to the point where we can document the differences for increased understanding in background noise, but there is definitely a reduction in the amount of energy, resulting in increased comfort in these listening situations.

Balance

The balance chapter includes a number of different applications that will accommodate for hearing loss as well as directional benefit. There will probably be a lot of questions in this section, because we often spend time talking about our directional systems and how the directional hearing aids can improve understanding in background noise.

Wide Dynamic Range Compression

Under the balance section, we included a number of different features other than directionality. One of the features is wide dynamic range compression (WDRC). We are familiar with that as being able to provide more gain for soft sounds and less gain for loud sounds so they are not uncomfortable. This can be personalized based on the hearing loss.
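For readers who like to see the numbers, here is a minimal sketch of a WDRC gain rule: linear gain below a kneepoint and progressively less gain above it. The kneepoint, gain, and compression ratio are illustrative values only; in a real fitting they would come from the prescription for the individual hearing loss.

```python
def wdrc_gain_db(input_db, knee_db=50.0, gain_at_knee_db=30.0, ratio=2.0):
    """Gain in dB for a given input level under simple WDRC.

    Below the kneepoint the gain is constant (linear amplification).
    Above it, each extra dB of input adds only 1/ratio dB of output.
    """
    if input_db <= knee_db:
        return gain_at_knee_db
    return gain_at_knee_db - (input_db - knee_db) * (1.0 - 1.0 / ratio)

def wdrc_output_db(input_db, **settings):
    return input_db + wdrc_gain_db(input_db, **settings)

for level in (40.0, 50.0, 65.0, 80.0):
    print(level, wdrc_gain_db(level), wdrc_output_db(level))
```

With these settings a soft 40 dB SPL input receives the full 30 dB of gain, while a loud 80 dB SPL input receives only 15 dB.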

Directional Mix Processing

Directional Mix Processing is a bandsplit processing technology; some companies call it pinna restoration. This applies a low-frequency omnidirectional pattern and a high-frequency directional pattern. This allows for a more seamless transition between directional/omnidirectional modes so you maintain the same loudness characteristics.

We wanted to look at how we might be able to apply this to people wearing hearing aids, and we found that there was a benefit to sound quality and comfort by applying this bandsplit processing of omnidirectional in the lows and directional in the highs. As you go to a directional mode, there is a low-frequency rolloff that occurs as a function of physics, and we cannot change it.

In the past, we had to give more gain in the low frequencies for directional mode than we did in omnidirectional mode so that the loudness of the two programs sounded the same. This was especially important for our automatic switching. During this switch, the person could hear that the directional mode was softer than the omnidirectional mode. We did not want people to be distracted by those differences. When the low frequency boost was applied for equalization, the hearing aid sounded boomy in quiet, especially when the user was talking. It also had a low tolerance for wind noise. When you are in a quiet situation, you might hear the circuit noise of the hearing aid.

Instead of having a low-frequency bass boost, we applied omnidirectional processing in the low frequencies and directional processing in the high frequencies. This provided high sound quality to the signal for many different situations. It gives the omnidirectional response in the low frequencies, which is the gold standard of how the hearing aid should sound, but provides the improved SNR for signals from the front and noise from the back in the high frequencies, which is critical for understanding high-frequency speech sounds. Further, it was as similar to the natural ear as we could get mechanically.
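The bandsplit idea can be sketched as a per-band choice between the omnidirectional and directional signals. The band centers, levels, and cutoff below are hypothetical and only show the selection logic, not the actual signal processing.

```python
def directional_mix(band_centers_hz, omni_db, directional_db, cutoff_hz):
    """Use the omnidirectional band below the cutoff and the directional
    band at or above it."""
    return [omni if fc < cutoff_hz else direc
            for fc, omni, direc in zip(band_centers_hz, omni_db, directional_db)]

band_centers_hz = [250, 500, 1000, 2000, 4000]

# Hypothetical band levels for the same scene. The directional response
# shows the low-frequency rolloff, plus less rear noise in the highs.
omni_db = [60, 60, 60, 60, 60]
directional_db = [48, 54, 58, 57, 56]

mixed_db = directional_mix(band_centers_hz, omni_db, directional_db, 1500)
print(mixed_db)
```

The low bands keep their omnidirectional level, so no bass boost is needed to match loudness, while the high bands carry the directional benefit.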

Environmental Optimizer

Environmental Optimizer is a section in the balance chapter that focuses on the listening situation, as determined by the Environmental Classifier, to determine the amount of volume that the person might want to have. It uses seven different environments, which we talked about in the model section, to be able to make decisions on how to adjust the hearing aid.

First, we want to set the WDRC characteristics so that you have audibility for soft sounds and comfort for moderate and loud sounds. Furthermore, Environmental Optimizer handles some of the soft and loud situations where someone might otherwise reach for a volume control. It automatically adjusts the volume depending on the environment, increasing the ease of use and satisfaction for the person with the hearing aid.

Directionality

Also in the balance chapter is directionality. We have developed several types of directional modes over the years, which makes it confusing to choose one or describe them for your patient. We often have the ability to do omnidirectional or fixed directional, and some systems have adaptive directionality or the ability to change the listening scope from wide to narrow, depending on singular or group listening situations.

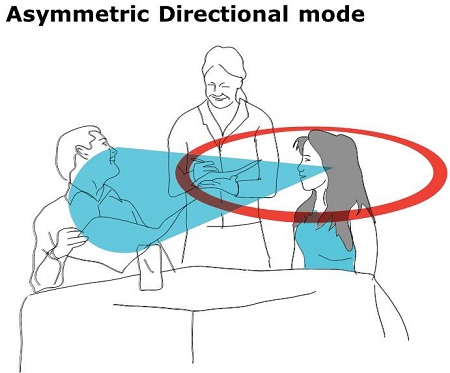

Soft switching will switch automatically between omnidirectional and directional depending on the style of the listening environment. You have natural directionality options, such as an asymmetric mode that provides an omnidirectional response on one ear and a directional response on the other ear to allow the person to get an improved SNR from the front while monitoring the listening situation from the sides and back. This would allow one of the ears to be able to monitor the listening environment and understand what is going on in the situations around them.

Binaural Communication

We can start to take these directional options to another level, such as device-to-device binaural communication. Binaural fusion is one of those options, and it looks at the listening situation as analyzed by the four microphones to determine if both hearing aids should be in omnidirectional or directional, or if there should be some sort of asymmetrical listening pattern. The other part of binaural fusion is the ability of both hearing aids to make a decision on the listening environment. The Environmental Optimizer will analyze and compare the environments on both sides to determine one listening environment. The decision-making process on the hearing aids is not based on what is happening on each side of the head, but on what the listening situation is as a whole.

We are one of the only manufacturers with the ability to change the cutoff frequency between omnidirectional and directional processing between the two devices. This means that sometimes the cutoff frequency would be at a low frequency and sometimes it would be at a high frequency. Every one of our fittings determines the cutoff frequency for that person based on their hearing loss and style of the hearing aid. We wanted to show some of the data that came along with some of these different cutoff frequencies.

Moller & Jespersen (2013) did a study on speech understanding in noise for 10 test subjects for the 4 different cutoff frequencies, as well as for an omnidirectional situation. A very low bandsplit cutoff frequency means that you will have very little omnidirectional processing and a lot of directional processing. In the open fittings for these 10 test subjects, all 4 frequency settings had an improved understanding in background noise compared to the omnidirectional condition. There was no difference in benefit for objective testing, no matter where the frequency was set.

Based on this, you could set a very high directional-mixed setting or a high cut-off frequency and get nearly the same speech understanding in noise. However, by going to a very high cut-off frequency, we are providing more omnidirectional processing in the low and mid frequencies, which will improve the sound quality for people with open fittings. The input signal coming in from the low frequencies to the open ear is omnidirectional, and the signal coming out of the hearing aid is omnidirectional. These listeners will have fewer problems with the sound quality of their own voice and circuit noise. The trade-off is beneficial, in that they do not have any change in understanding of speech across the frequencies, but if we pick that high-frequency cutoff, they will have an improvement in sound quality in all sorts of different listening situations.

On the other hand, they also tested people with moderate to severe hearing losses and closed hearing aid fittings. These people will benefit from having an improved SNR in the mid frequencies. With a low cutoff frequency, there was more directionality in the mid frequencies and an improved SNR score compared to the other cutoff frequencies. We would want to give people with a moderate hearing loss the low cutoff frequency. By doing this, we give less omnidirectional in the mid frequencies and reduce the potential for problems with their own voice.

People with moderate to severe hearing losses greater than 40 dB HL in the low frequencies are not bothered by increased amplification in the low frequencies. They do not complain about the sound quality of their own voice. The trade-off is that they do not have the sound quality problems that people with mild hearing loss have, but they will get the benefit of having improved understanding in background noise.

Binaural Directionality

One analogy for binaural directionality is that it is like a moving walkway at the airport. Once you get on, you cannot get off. You could climb over the side rails, but then a security guy would run after you. The hearing aids are very similar. They choose in what direction you are going to hear. ReSound wanted to give the individual the choice to be able to focus on what it is they want to listen to. We know that the brain processes sound in a similar way.

We have an auditory system that detects signals, and then we choose whether or not we want to attend to them. For example, if children are playing in the background, you may want to not attend to them, so you ignore them. Our brain does that automatically. However, if you hear your name from the children playing in the background, all of a sudden our brain focuses on something that was novel, and you will choose to attend to it if you deem it to be important.

With binaural directionality, the brain needs to have the opportunity to decide what it wants to listen to, and we need to give that input to both sides of the auditory system. We do this from a number of different parameters. We look at whether speech or noise is present. We look at the direction of the speech, the intensity of the speech, and the SNR. All of these things are used to determine the directional mode and whether there should be a change in the Environmental Optimizer.

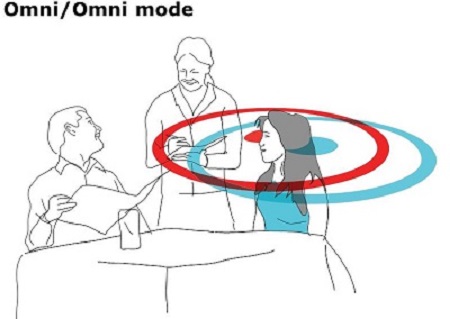

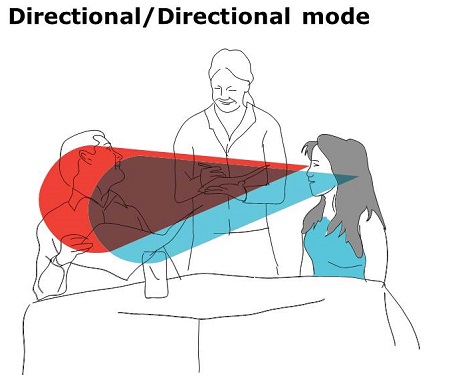

Figure 2 shows a couple at a table in a restaurant; we will assume that there are other people sitting, talking and making noise around them. In an omnidirectional mode, this woman would have difficulty understanding the gentleman in front of her because she has to compete with the background noise on the sides and back. Both hearing aids in directional mode would help her to be able to hear the gentleman in front of her. However, she would have difficulty understanding the waitress who is to the side of her. Asymmetrical mode, or natural directionality in our products, puts one hearing aid in directional mode for an improved understanding from the gentleman’s voice in front and an omnidirectional mode that is able to monitor and listen to sounds on the sides and in the back, which in this case is the waitress. One thing to note about natural directionality is that the person of interest has to be on the side that will go into omnidirectional mode or the listener will experience a head-shadow effect from the other side.

Figure 2. Omnidirectional (top), directional (middle) and asymmetric directional modes (bottom) in a listening situation.

Binaural directionality allows the hearing aids to communicate and make decisions for the optimal listening pattern based on the signal, noise, front and back directions, and SNR. It will go into omnidirectional on both sides if there is a quiet environment with no competing background noise. It will go to an asymmetrical pattern when there is background noise with a strong speech signal, which will change depending on the location of the speech signal.

In an extremely noisy situation where you cannot understand what is coming from the sides and the back, the device-to-device communication would provide a directional mode on both sides to get the optimum understanding.
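The three outcomes described above can be summarized as a small decision function. The environment labels and the function name are assumptions made for this sketch; the real system weighs speech presence, speech direction, speech intensity, and SNR rather than taking a single label.

```python
def choose_modes(environment, speech_side="right"):
    """Return the (left, right) microphone modes for a pair of hearing aids.

    environment is one of "quiet", "speech_in_noise", or "extreme_noise"
    (labels assumed for this sketch).
    """
    if environment == "quiet":
        return ("omni", "omni")
    if environment == "speech_in_noise":
        # The ear on the side of the off-axis talker stays omnidirectional
        # to avoid a head-shadow effect; the other ear goes directional.
        if speech_side == "left":
            return ("omni", "directional")
        return ("directional", "omni")
    if environment == "extreme_noise":
        return ("directional", "directional")
    raise ValueError(f"unknown environment: {environment}")

print(choose_modes("quiet"))
print(choose_modes("speech_in_noise", speech_side="right"))
print(choose_modes("extreme_noise"))
```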

Directionality Case Studies

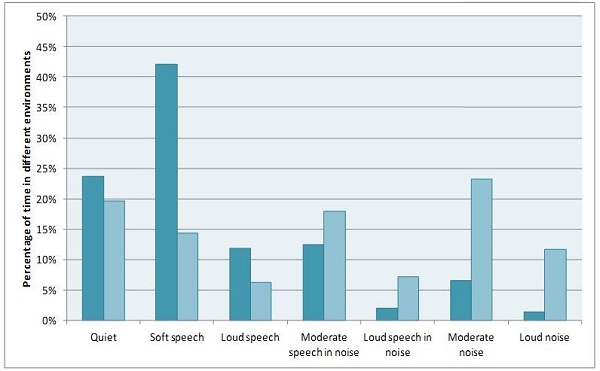

I have two cases to share with you. In Figure 3, you see the seven environmental classifier settings and the percentage of the time each subject spends in those environments; dark blue is for the first subject, and light blue is for the second subject. The dark blue bars show that this subject spends a lot of time in quiet and a lot of time in soft speech, but not very much time in noise and loud speech. The light blue bars indicate that the other subject spends time in quiet, soft speech, noise, moderate speech in noise, loud speech in noise, moderate noise, and loud noise. This subject has a much more varied listening environment.

Figure 3. Percentage of time spent in seven different listening environments for two test subjects (dark blue and light blue).

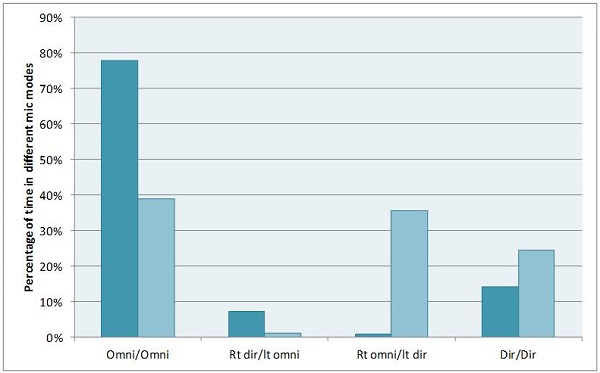

We also looked at the data logging results. The first person, who spent a lot of time in quiet and soft speech situations, spent nearly 80% of the time in omnidirectional mode in both ears (Figure 4). However, when they needed directionality, the hearing aid switched most of the time to right ear directional/left ear omnidirectional or to directional in both ears.

Figure 4. Percentage of time spent in binaural directional arrangements or omnidirectional mode.

The second subject, who had a variety of listening situations, spent almost an equal amount of time in omnidirectional and asymmetrical directionality, with the right ear omnidirectional and the left ear directional. I would have expected more of a split between the asymmetrical modes. We found that this person is a truck driver and spends their time in the driver’s seat on the left-hand side of the truck with a passenger on the right side of the truck. This person likely spends a lot of time with speech coming from the right and more noise from the window on the left.

Binaural Environmental Optimizer II

As a note, the Environmental Optimizer II in our high-end products can change the volume and the noise reduction of the system depending on the environment. The benefit of this is that people may want different volume settings for different listening situations, but we also found that in listening tests, people preferred being able to have the strength of their noise reduction change. In our programming, instead of having one fixed setting (mild, moderate, considerable, or strong) for all situations, you can change the strength of the noise reduction per listening situation. In a quiet or speech situation, you do not need aggressive noise reduction. However, for speech in noise or full noise, you can make stronger reductions in noise if you want.

Stabilize

The fourth chapter is stabilize. Stabilization is how the feedback cancellation system works in the hearing aid. At ReSound, we first apply a feedback cancellation system that employs a filter to determine the frequency characteristics of the feedback signal, which then cancels out that signal so you have the gain you need without the acoustic feedback. It is important with the ReSound products to do a feedback calibration. Not all systems work the same. Some companies do not recommend a feedback calibration unless necessary. Our system uses a calibration to fit the acoustics to the individual’s ear instead of a generic fitting of an average individual’s ear.

The DFS Ultra works by focusing on the correlation between how much cancellation is needed and how much gain is being provided from the instrument. When it predicts that the hearing aid is going into acoustic feedback, it will take the gain of the instrument and put it back to where it was prescribed for that individual. In that way, the person gets the amplification that they need for the listening situation, but they do not get the acoustic feedback and the whistling when the feedback cancellation system is not able to engage. We can provide more gain before feedback in those listening situations.

Connectivity

The fifth chapter is connectivity. I think it is important to remember that there are different types of connectivity setups. We have a history of telecoils and FM systems, which are still important and used in different situations, but we have a number of other options that we can use for wireless connectivity within hearing aids. Be mindful that not all hearing aids use the same transmission frequency band, and that transmission band does not always follow the same rules in different parts of the world. ReSound chose to use the 2.4 GHz frequency band, which is available for use in all countries.

The 2.4 GHz can be used for connectivity with wireless devices and also for communication between the devices. Many companies, including ReSound, have the ability to use synchronized volume and push buttons, where you change the volume or the program on one side and it automatically changes it on the other side. It decreases the amount of thought required by the user to manually change settings on two sides. You can also use it with a remote control.

Comfort Phone

Comfort Phone in ReSound works when the hearing aid detects that a phone has been put to the ear. It will turn the gain of the opposite ear down by 6 dB, which improves the SNR. The ear that does not have the telephone input has less amplification of environmental sounds, but it is still able to actively monitor the environment.

Most hearing aids have available accessories, including remote microphones, FM systems, and TV and telephone connections. As I mentioned, the ReSound devices use a 2.4 GHz transmission, which eliminates the need to have something worn around the neck. This arrangement easily allows you to be eight yards from the transmitter, instead of three feet.

Unite Mini Microphone

One thing that is nice with our Unite Mini Microphone is that you can have the same benefit of increased SNR across distance as you would have with an FM system. When listening through hearing aids with directional microphones only, listeners have more difficulty understanding in background noise as they move from 1.5 meters to 6 meters away, purely because of the distance. If you use an FM system or a mini microphone system on the 2.4 GHz band, you can maintain understanding in background noise even at greater distances from the person who is speaking. The great benefit of the Unite Mini Microphone is that you can provide the FM benefit of increased SNR in a poor listening environment, with better cosmetics and usually a much lower cost than an FM system.
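As a rough illustration of why distance alone hurts, the sketch below applies the free-field inverse-square law, under which the level of the direct sound from a talker falls about 6 dB for every doubling of distance. It assumes an idealized point source with no reverberation and a background noise level that stays the same at the listener. Real rooms will differ, and the function name is hypothetical.

```python
import math


def level_drop_db(near_m: float, far_m: float) -> float:
    """Drop in direct-sound level (dB) when moving from near_m to far_m.

    Assumes free-field, point-source spreading (inverse-square law).
    """
    return 20 * math.log10(far_m / near_m)


# Distances taken from the example in the text: 1.5 m versus 6 m from the talker.
drop = level_drop_db(1.5, 6.0)
print(f"Speech level drop from 1.5 m to 6 m: {drop:.1f} dB")
```

Under these assumptions, moving from 1.5 m to 6 m lowers the speech level at the hearing aid microphone by about 12 dB, and the SNR falls by the same amount if the noise is unchanged. A remote microphone worn near the talker avoids that loss because the pickup distance stays short.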

Made-for-iPhone Hearing Aids

Another recent advance in connectivity is made-for-iPhone hearing aids. This requires a 2.4 GHz antenna and transmission within the hearing aid, because the iPhone has a 2.4 GHz antenna, which is used for Bluetooth. If there is a 2.4 GHz antenna in the phone and in the hearing aid, the two can communicate directly without needing a telephone accessory between the hearing aid and the phone. Almost all hearing aids with wireless connectivity can connect to other hearing aids, smartphones, and other electronics with some sort of intermediate device that translates the hearing aid signal into a Bluetooth signal. However, with made-for-iPhone hearing aids, we are able to put the Bluetooth language into the hearing aid so that it communicates directly with the iPhone. In this case, you can also use the phone as a remote to control or communicate directly with the hearing aids.

Demo

We highly recommend providing a demonstration of these different devices. The demo may seem challenging at first, but once you figure it out, it only takes about 15 minutes, especially when you are working with a system that has a good feedback cancellation system and will show benefits with wireless technology. If you fit the hearing instruments based on the hearing loss and calibrate the feedback cancellation system, you can do a demo right there at the first fit and get people comfortable with the wow factor they can experience. They will notice a difference in audibility, but you might also want to demo some accessories with the wireless systems.

You could explain the audiometric results to the person, but I also recommend going in and doing some sort of FM microphone or TV streaming demonstration so they know what their hearing aids are capable of. We need to change how we approach fitting and selling hearing aids. When you go to buy a car, you are getting a feel for the vehicle that you are going to buy that day, and you know that it is a big investment. I think it is important that we start showing hearing aids the first time and putting them on the ear so patients can hear the improvement with all of the wireless accessories that are available. It can make a difference in how they feel about the benefits of hearing aids, and they will be more comfortable during those weeks while they are waiting for the final fittings.

Summary

To summarize, I looked at the old way we described hearing aids, with the microphone, amplifier, receiver, and battery, and changed this into a microphone, microchip, receiver, and battery. I then looked at what is going on within the microchip in a way that lets people start to understand the technology that we are able to provide today.

Questions and Answers

Does the environmental optimizer adjust volume or apply noise reduction, or is it just based on environmental changes?

When the Environmental Optimizer classifies the listening situation, it will change both the volume and the noise reduction. That is our Environmental Optimizer II in our premium products.

References

Møller, K. & Jespersen, C. (2013). The effect of bandsplit directionality on speech recognition and noise perception. The Hearing Review. Retrieved from https://www.hearingreview.com/all-news/21682-the-effect-of-bandsplit-directionality-on-speech-recognition-and-noise-perception

Cite this Content as:

Nelson, J. (2015, February). Hearing aids: not just four components anymore. AudiologyOnline, Article 13183. Retrieved from https://www.audiologyonline.com.